Sudo su (@sudoingX)

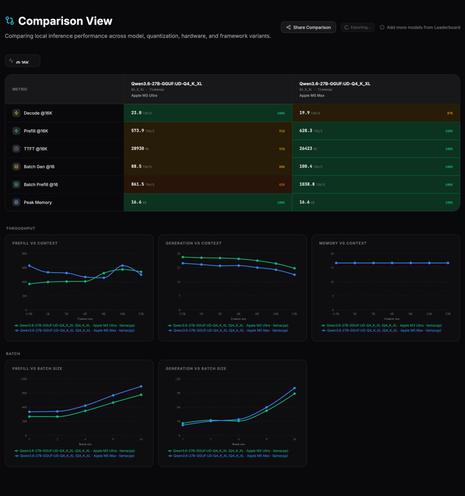

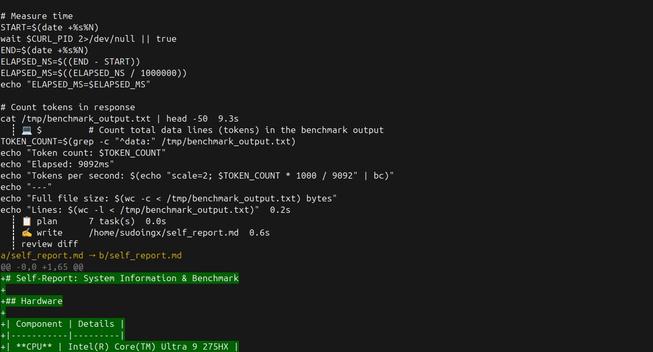

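This post presents a case in which a 27B local model writes its own benchmark report. Carnice-v2 27B locates its hardware, its model file, and the llama.cpp commit and then carries out a self-evaluation, showing the potential of local agentic AI.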

Sudo su (@sudoingX) on X

watching a 27b local model write its own benchmark report just now and i'm sitting with this for a sec. gave carnice-v2 27b (kaios SFT on qwen 3.6 dense, trained on hermes agent traces) a self-report card task, find your hardware, find your model file, find the llama.cpp commit