Artificial Analysis (@ArtificialAnlys)

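Artificial Analysis has introduced a model comparison page for comparing AI models such as Gemma 4 and Qwen3.5. It collects the performance of the latest open models in one place, which makes it a useful resource for benchmark comparisons.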

New week, new update for the slides of my talk "Run LLMs Locally":

Now including Gemma4 and Qwen3-Omni with Vision and Audio support and new slides describing Llama.cpp server parameters.

https://codeberg.org/thbley/talks/raw/branch/main/Run_LLMs_Locally_2026_ThomasBley.pdf

#ai #llm #llamacpp #stablediffusion #gptoss #qwen3 #glm #localai #gemma4

Omar Khattab (@lateinteraction)

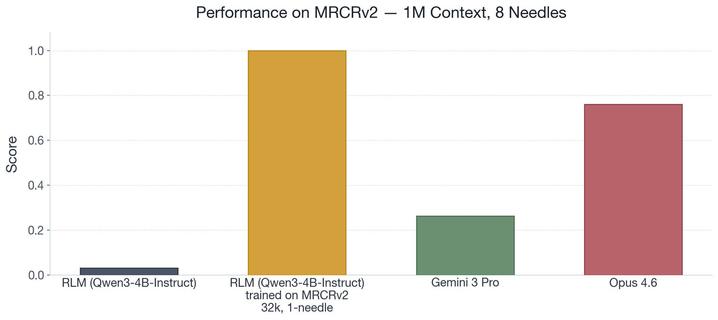

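A new blog post by @a1zhang discusses the future of language models. The key result: training RLM-Qwen3-4B with GRPO on easy, 32k-token long-context tasks generalizes automatically, and with 100% reliability, to 1M-token, 8-needle long-context tasks.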

New must-read blog by @a1zhang on the future of language models. Buried nugget: doing GRPO for RLM-Qwen3-4B on short (32k token) and easy (single-needle) MRCRv2 long-context tasks generalizes *automatically* and with perfect (100%) reliability to 1M-token, 8-needle tasks!!
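For readers unfamiliar with GRPO (Group Relative Policy Optimization), the core idea is to score several sampled answers to the same prompt and use each answer's reward relative to its group as the advantage, with no learned value model. The sketch below shows one common variant of that advantage computation, (r - mean) / std over the group. It is not the setup from @a1zhang's blog, which the post does not detail; the binary "needle found" reward, the group size of 4, and the `eps` term are hypothetical choices for illustration.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards: list[float], eps: float = 1e-6) -> list[float]:
    """Normalize each reward against its own group (one common GRPO variant).

    Answers that beat the group average get a positive advantage and are
    reinforced; answers below average get a negative one. If every answer
    gets the same reward, all advantages are zero and the group carries
    no learning signal.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population std over the group
    return [(r - mu) / (sigma + eps) for r in rewards]


# Hypothetical group of 4 sampled answers to one single-needle prompt:
# reward 1.0 if the needle was retrieved correctly, else 0.0.
rewards = [1.0, 0.0, 1.0, 0.0]
print(group_relative_advantages(rewards))
```

The per-token policy-gradient update (clipped ratio, KL penalty) that consumes these advantages is omitted here.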

William Ruider (@ruider92545)

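EXO Labs 1.0.69 reportedly delivered surprisingly strong performance running the large Qwen3.5-122B-A10B model (8-bit/FP8, 131 GB) on a 6-node cluster of 2022 M1 Max Mac Studios, connected only over Thunderbolt 4 with an MLX ring and no RDMA. This points to real progress in local distributed inference.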

Guys and Girls, look >>> what EXO Labs 1.0.69 did to my 6-node Mac M1 Studio Max cluster (from 2022) over Thunderbolt 4 without RDMA, MLX ring. Just downloaded Qwen3.5-122B-A10B (8-bit/FP8) MLX 131 GB heavy. Thinking mode I have a …. WHAT!!!! No way!!!! Am I dreaming ???!
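A back-of-the-envelope calculation shows why a 122B model fits on this cluster and why the MoE design helps. It assumes the weights are split evenly across the 6 nodes and that the 131 GB figure is the full size of the 8-bit weights; the post does not say how EXO actually partitions the model. "A10B" is read here as roughly 10B parameters active per token.

```python
# Rough memory arithmetic for the EXO cluster post.
# Assumptions (not stated in the post): even split of weights across nodes,
# 1 byte per parameter at 8-bit, 1 GB = 1e9 bytes.

TOTAL_PARAMS = 122e9   # Qwen3.5-122B-A10B: ~122B total parameters
ACTIVE_PARAMS = 10e9   # "A10B": ~10B parameters active per token (MoE)
BYTES_PER_PARAM = 1    # 8-bit weights
DOWNLOAD_GB = 131      # model size reported in the post
NODES = 6


def weights_gb(params: float, bytes_per_param: float) -> float:
    """Raw weight size in GB for a given parameter count and precision."""
    return params * bytes_per_param / 1e9


raw = weights_gb(TOTAL_PARAMS, BYTES_PER_PARAM)
per_node = DOWNLOAD_GB / NODES
active = weights_gb(ACTIVE_PARAMS, BYTES_PER_PARAM)

print(f"raw 8-bit weights:              {raw:.0f} GB")
print(f"{DOWNLOAD_GB} GB split over {NODES} nodes:     {per_node:.1f} GB per node")
print(f"weights read per token (active): {active:.0f} GB")
```

The gap between the ~122 GB of raw 8-bit weights and the 131 GB download is plausibly quantization scales and tensors kept at higher precision, though that is an assumption. The per-token figure is the more interesting one: only about a tenth of the weights are touched per token, which is what makes a Thunderbolt-connected cluster workable.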

New update for the slides of my talk "Run LLMs Locally": Bonsai-8B

The latest version of Llama.cpp now supports Vulkan with 1-bit quantized models like Bonsai: the 8B model is 1.1 GB in size and uses 2.5 GB of RAM.

https://codeberg.org/thbley/talks/raw/branch/main/Run_LLMs_Locally_2026_ThomasBley.pdf

#ai #llm #llamacpp #stablediffusion #gptoss #qwen3 #glm #localai
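The Bonsai-8B numbers line up with simple size arithmetic: 8B weights at 1 bit each is 8e9 bits, or 1.0 GB. The sketch below compares that against common precisions and derives the effective bits per weight implied by the reported 1.1 GB. Treating the extra ~0.1 GB as scale factors and non-quantized tensors, and the remaining RAM (2.5 GB total) as KV cache and compute buffers, are assumptions, not details from the post.

```python
PARAMS = 8e9  # Bonsai-8B: ~8 billion weights


def model_size_gb(params: float, bits_per_weight: float) -> float:
    """Weight storage in GB (1 GB = 1e9 bytes) at a given precision."""
    return params * bits_per_weight / 8 / 1e9


# Storage for the same 8B model at common precisions.
for bits in (16, 8, 4, 1):
    print(f"{bits:>2}-bit: {model_size_gb(PARAMS, bits):5.1f} GB")

# Effective bits per weight implied by the reported 1.1 GB file size.
REPORTED_GB = 1.1
effective_bpw = REPORTED_GB * 1e9 * 8 / PARAMS
print(f"effective bits/weight: {effective_bpw:.2f}")
```

The effective ~1.1 bits per weight suggests only a small overhead on top of the pure 1-bit payload.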

金のニワトリ (@gosrum)

Running Gemma-4 with thinking disabled degraded its performance: unlike Qwen3.5, its ts-bench score dropped. The practical takeaway is that if performance matters, Gemma-4 should be used in its default thinking mode.