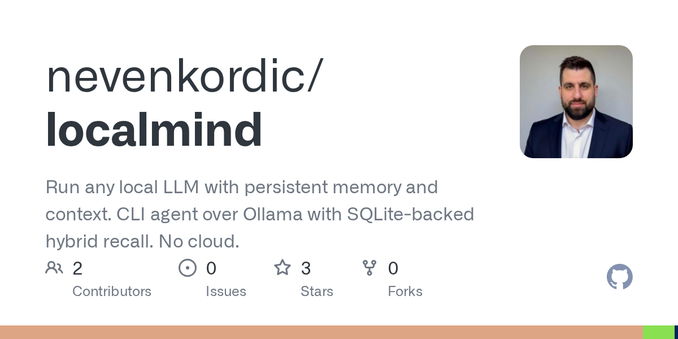

So I built LocalMind: a single Rust binary that gives any Ollama model persistent memory. One SQLite file, hybrid recall on every turn, small-model defaults (1.9 GB).

No cloud. MIT-licensed.

https://github.com/nevenkordic/localmind

#LocalFirst #LLM #Ollama #Rust #OpenSource

GitHub - nevenkordic/localmind: Run any local LLM with persistent memory and context. CLI agent over Ollama with SQLite-backed hybrid recall. No cloud.
