“The present is pregnant with the future”*…

The estimable Tim O’Reilly uses scenario planning to create an insightful look at AI, our futures, and the choices that will define them…

We all read it in the daily news. The New York Times reports that economists who once dismissed the AI job threat are now taking it seriously. In February, Jack Dorsey cut 40% of Block’s workforce, telling shareholders that “intelligence tools have changed what it means to build and run a company.” Block’s stock rose 20%. Salesforce has shed thousands of customer support workers, saying AI was already doing half the work. And a Stanford study found that software developers aged 22 to 25 saw employment drop nearly 20% from its peak, while developers over 26 were doing fine.

But how are we to square this news with a Vanguard study that found that the 100 occupations most exposed to AI were actually outperforming the rest of the labor market in both job growth and wages, and a rigorous NBER study of 25,000 Danish workers that found zero measurable effect of AI on earnings or hours?

Other studies could contribute to either side of the argument. For example, PwC’s 2025 Global AI Jobs Barometer, analyzing close to a billion job ads across six continents, found that workers with AI skills earn a 56% wage premium, and that productivity growth has nearly quadrupled in the industries most exposed to AI.

This is exactly the kind of contradictory, uncertain landscape that scenario planning was designed for. Scenario planning doesn’t ask you to predict what the future will be. It asks you to imagine divergent possible futures and to develop a strategy that improves your odds of success across all of them. I’ve used it many times at O’Reilly and have written about it before with COVID and climate change as illustrative examples. The argument between those who say AI will cause mass unemployment and those who insist technology always creates more jobs than it destroys is a debate that will only be resolved by time. Both sides have evidence. Both are probably right at some level. And both framings are not terribly helpful for anyone trying to figure out what to do next…

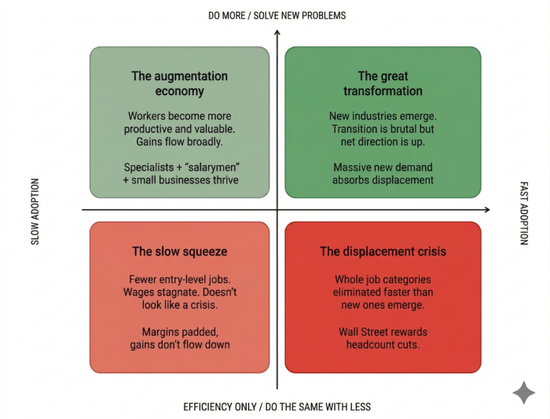

[O’Reilly explains the scenario approach, then applies it to our future with AI (see the image above), astutely assessing the conflicting signals that we’re experiencing; he explores the “robust strategy” for our uncertain future (strategic choices that make sense regardless of which future unfolds); then he concludes…]

… I’ll return to the theme that I sounded in my book WTF? What’s the Future and Why It’s Up To Us.

Every time a company uses AI to do what it was already doing with fewer people, it is making a choice for the lower half of the scenario grid. Every time a company uses AI to do something that wasn’t previously possible, to serve a customer who wasn’t previously served, to solve a problem that wasn’t previously solvable, it is making a choice for the upper half. These choices compound, for good or ill. An economy that uses AI primarily for efficiency will slowly hollow itself out.

Looking at the news from the future, both sets of signals are present. The question is which will dominate. AI will give us both the Augmentation Economy and the Displacement Crisis, in different measures in different places, depending on the choices we make.

Scenario planning teaches us that we don’t have to predict which future we’ll get. We do have to prepare for a very uncertain future. But the robust strategy, the one that works across every quadrant, is to focus on doing more, not just doing the same with less, and to find ways that human taste still matters in what is created. As long as there is unmet demand, as long as there are problems we haven’t solved and people we haven’t served, AI will augment human work rather than replacing it. It’s only when we stop looking for new things to do that the machines come for the jobs…

Eminently worth reading in full. Indeed, speaking as a long-time scenario planner, your correspondent can only wish that everyone who wields “scenarios” applied the approach as appropriately, adroitly, and acutely as Tim has: “Scenario Planning for AI and the ‘Jobless Future’,” from @timoreilly.bsky.social.

* Voltaire

###

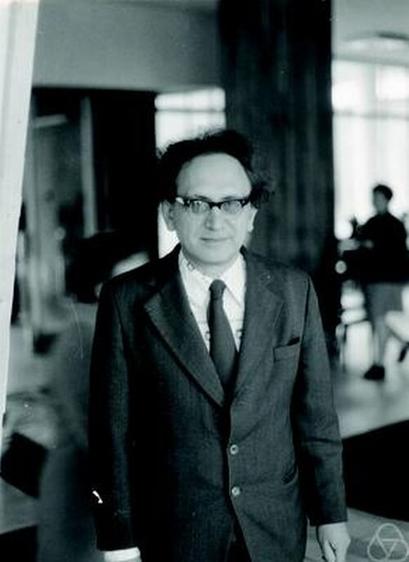

As we take the long view, we might send formative birthday greetings to Mark Pinsker; he was born on this date in 1923. A mathematician, he made important contributions to the fields of information theory, probability theory, coding theory, ergodic theory, mathematical statistics, and communication networks. This work, which helped lay the foundation for AI-as-we-know-it, earned him the IEEE Claude E. Shannon Award in 1978 and the IEEE Richard W. Hamming Medal in 1996, among other honors.


#AI #artificialIntelligence #business #culture #economics #employment #future #history #informationTheory #jobs #MarkPinsker #Mathematics #politics #scenarioPlanning #scenarios #Science #society #Technology #TimOReilly