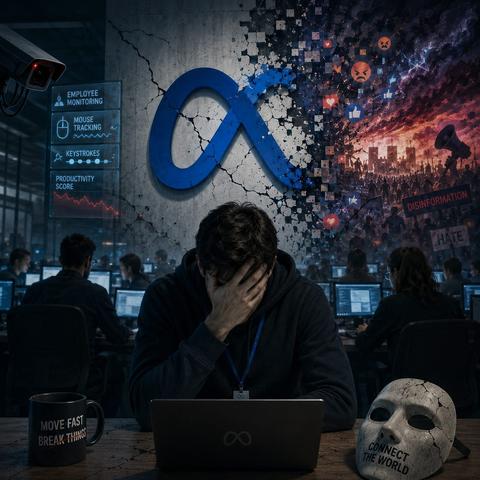

"This may not be the Nuremberg trial, but we all know that the excuse of “following orders” is not an alibi when you know what you are doing. And everybody at Meta knew what they were doing. They knew they were designing systems to maximize engagement that polarized society. They knew this when they turned privacy into an exploitable variable. They knew it when the evidence mounted up on the harm Instagram was causing. They knew this when the platform became an infrastructure of propaganda, hatred and manipulation. And they knew this because many of these damages were documented, denounced and discussed inside and outside the company.

And now the same machinery is beginning to be applied inwards. Meta employees who for years helped surveil, profile, and exploit billions of users now discover that they too can be monitored, measured and turned into training data. As The New York Times notes with more than a hint of irony, “Meta’s embrace of AI is making its employees miserable”.

Let’s be clear: Meta’s workforce have not had a Damascene moment. It’s something more human and more uncomfortable: the belated realization that the system they helped build had no limits; it just hadn’t come for them yet."

https://edans.medium.com/e7bb510a9127

#Meta #SocialMedia #Surveillance #Capitalism #Privacy #DataProtection