https://reiner.org/neural-net-ciphers #neuralnetworks #cryptography #algorithms #techhumor #absurdity #HackerNews #ngated

Why are neural networks and cryptographic ciphers so similar? (2025)

https://reiner.org/neural-net-ciphers

#HackerNews #neuralnetworks #cryptography #AI #similarities #technology

https://github.com/earthtojake/text-to-cad #AI #OpenSource #Engineering #NeuralNetworks #Innovation #HackerNews #ngated

An excellent introduction to #quantization used for #LLMs 👌🏽:

“Quantization From The Ground Up”, Sam Rose, Ngrok (https://ngrok.com/blog/quantization).

On HN: https://news.ycombinator.com/item?id=47519295

#AI #Math #FloatingPoint #NumericalAnalysis #Numbers #NeuralNetworks #Precision #Accuracy

Python Trending (@pythontrending)

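ai4animationpy, a Python framework for AI-based character animation, has been introduced. It uses neural networks to generate and control character motion, and can be used in animation production and AI creative workflows.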

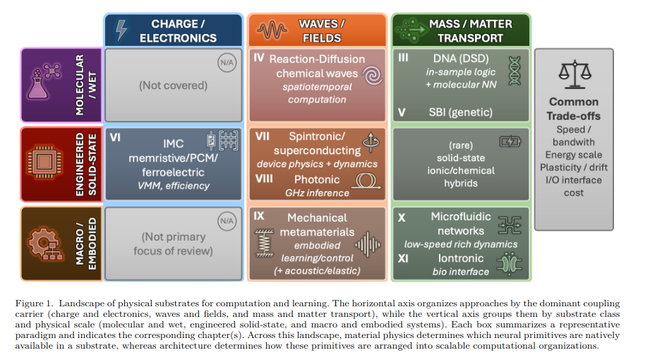

Beyond Silicon: Materials, Mechanisms, and Methods for Physical Neural Computing https://arxiv.org/abs/2604.09833

My current #DotNetMAUI and #NeuralNetworks project: Design and train neural networks in #GoogleColab and transfer them to a cross-platform app using #ONNX. Follow my progress here:

Creating a Neural Network Based Mobile App with a Neural Network Designed and Trained in Google Colab | Stephen Moreton-Howell

Having done a master's degree in Artificial Intelligence and then switched my attention to writing cross-platform mobile apps, I want to bring my focus back to AI while continuing to work on .NET MAUI. So I'm doing some experimental work creating and training neural networks in Python/Keras on Google Colab and then exporting them to .onnx files so they can be deployed in .NET MAUI apps. I want to see what's possible before deciding what interesting NN-based apps I might make.

Kimon Fountoulakis (@kfountou)

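A lecture video on the mathematics of reasoning in LLMs has been shared. It covers how neural networks can perform addition, multiplication, and algorithmic instructions exactly.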

Kimon Fountoulakis (@kfountou) on X

Wow, what an honour @CsabaSzepesvari, thanks! "Math of Reasoning in LLMs, Session 11: Learning to Add, Multiply, and Execute Algorithmic Instructions Exactly with Neural Networks" https://t.co/lkQ0PrXRLK I watched all of it, and I really enjoyed it.

https://www.brunogavranovic.com/posts/2026-04-20-types-and-neural-networks.html #neuralnetworks #codeJenga #HackerNews #ngated