https://eyeball.rory.codes/ #technology #clicking #innovation #Rory #HackerNews #ngated

🔥 TRENDING

📢 Harnessing the Gut with Biohacking: Precision Health Meets Therapeutic Innovation, Frontiers Reports

#Harnessing #Biohacking #Precision #Health #GlobalFeed #News #EN

*Automatically posted by Global Feed Bot*

🔥 TRENDING

📢 Unlocking Precision Health: Biohacking the Gut Microbiome Drives Therapeutic Innovation

#Unlocking #Precision #Health #Biohacking #GlobalFeed #News #EN

*Automatically posted by Global Feed Bot*

🔥 TRENDING

📢 Precision Health Revolution: Biohacking the Gut Microbiome for Cutting-Edge Therapies—Frontiers Research Revealed

#Precision #Health #Revolution #Biohacking #GlobalFeed #News #EN

*Automatically posted by Global Feed Bot*

https://api-docs.deepseek.com/quick_start/pricing #V4Pro #Discount #SandSale #TokenBilling #Precision #HackerNews #ngated

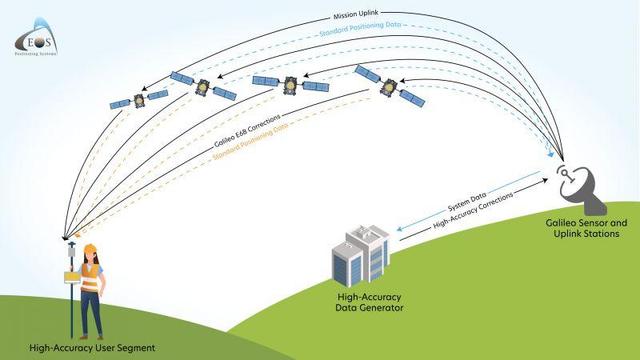

https://atlas.whatip.xyz/post.php?slug=100-bn-satellite-navigation-system-market-2035-by-type-of-orbit-solution-constellation-application-enterprise-and-geographical-regions

#constellation #navigation #satellite #precision

$100+ Bn Satellite Navigation System Market, 2035 by Type of Orbit, Solution, Constellation, Application, Enterprise, and Geographical Regions

Growing demand for satellite navigation in autonomous tech, precision ag, aviation, and logistics presents key opportunities. Technological advancements in GNSS, driven by high-precision needs and mul...

Hmm, which one can you trust? 🥺

Day 100/100 – precision

This entry is part 103 of 104 in the series 100 Days of Training (2017).

one. hundred. days. (with one miss.) Worst part? …the photos. Shout out to Miguel on this one for catching me as I stuck a sequence of strides across the wall to a precision (on a wall, that’s not a flat-top box).

#100DaysOfTraining2017 #ArtDuDéplacement #Instaspam #Precision

Your model shows 95% accuracy and is still useless: metrics for imbalanced classes

A model can show 95–99% accuracy and still fail to solve the task, especially when the rare class is the one that matters most to the business. The article covers why accuracy breaks down on imbalanced data, how to read precision, recall, and F1, why to look at the PR curve and the confusion matrix, and how to choose a classification threshold that accounts for the cost of errors.

https://habr.com/ru/companies/otus/articles/1034692/

#accuracy #precision #recall #F1score #несбалансированные_классы #метрики_классификации #confusion_matrix #PRкривая #порог_классификации #SMOTE
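
A minimal sketch of the accuracy pitfall named in the post above, not code from the linked article. It assumes a toy dataset of 95 negatives and 5 positives, hypothetical model scores, and hypothetical error costs. The helper names (`confusion`, `metrics`, `best_threshold`) are made up for illustration, and reporting precision, recall, and F1 as 0.0 when their denominator is zero is a convention chosen here.

```python
def confusion(y_true, y_pred):
    """Return (tp, fp, fn, tn) for binary labels where 1 is the rare class."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    return tp, fp, fn, tn


def metrics(y_true, y_pred):
    """Accuracy, precision, recall and F1; undefined ratios are reported as 0.0."""
    tp, fp, fn, tn = confusion(y_true, y_pred)
    accuracy = (tp + tn) / len(y_true)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}


def best_threshold(y_true, scores, cost_fp, cost_fn, thresholds):
    """Pick the candidate threshold with the lowest total error cost."""
    def total_cost(threshold):
        preds = [1 if s >= threshold else 0 for s in scores]
        _, fp, fn, _ = confusion(y_true, preds)
        return fp * cost_fp + fn * cost_fn
    return min(thresholds, key=total_cost)


# Toy imbalanced data: 95 negatives, 5 positives (assumed, for illustration).
y_true = [0] * 95 + [1] * 5

# A "model" that always predicts the majority class.
baseline = metrics(y_true, [0] * 100)

# Hypothetical scores: most negatives score low, a few look suspicious,
# and two of the five positives score below some negatives.
scores = [0.1] * 90 + [0.6] * 5 + [0.7] * 3 + [0.4] * 2
candidates = [0.3, 0.5, 0.65]

# Missing a positive is 10x worse than a false alarm, and vice versa.
th_fn_expensive = best_threshold(y_true, scores, cost_fp=1, cost_fn=10,
                                 thresholds=candidates)
th_fp_expensive = best_threshold(y_true, scores, cost_fp=10, cost_fn=1,
                                 thresholds=candidates)

print(baseline)
print(th_fn_expensive, th_fp_expensive)
```

In this toy setup the always-negative baseline reaches 95% accuracy while its recall and F1 are zero. The chosen threshold moves from low to high as false positives become the more expensive error.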