How AI Benchmarks Work – and When Scores Mislead

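This article explains how AI benchmarks work and why benchmark scores are sometimes misleading. Benchmark scores are an important measure of model performance, but data overlap (contamination), score saturation, and score gaming can open a gap between reported scores and real-world performance. It stresses that tightly controlled and verified test conditions are essential for trustworthy scores, and it lays out the limitations of benchmarks along with concrete ways to address them.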
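As a rough illustration of the contamination problem mentioned in the summary, one common heuristic is to measure how many word n-grams of a benchmark item also appear in a training document. The sketch below is an assumption-laden example, not the article's method: the function names `ngrams` and `overlap_ratio`, the whitespace tokenization, and the default n-gram size of 8 are all hypothetical choices made for illustration.

```python
def ngrams(text: str, n: int) -> set[tuple[str, ...]]:
    """Return the set of lowercase, whitespace-tokenized word n-grams in text."""
    tokens = text.lower().split()
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def overlap_ratio(benchmark_item: str, corpus_doc: str, n: int = 8) -> float:
    """Fraction of the benchmark item's n-grams that also occur in corpus_doc.

    Returns 0.0 when the item is shorter than n tokens (no n-grams to compare).
    """
    item_grams = ngrams(benchmark_item, n)
    if not item_grams:
        return 0.0
    shared = item_grams & ngrams(corpus_doc, n)
    return len(shared) / len(item_grams)


if __name__ == "__main__":
    question = "what is the capital of france and when was it founded"
    leaked_doc = "quiz dump: what is the capital of france and when was it founded answer paris"
    clean_doc = "a short note about training language models on web text"
    print(overlap_ratio(question, leaked_doc, n=5))
    print(overlap_ratio(question, clean_doc, n=5))
```

A high ratio only flags an item for closer inspection; paraphrased or translated leaks would not be caught by exact n-gram matching.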

left curve dev (@leftcurvedev_)

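The author says they switched the main model in their setup to Qwen3.6 27B and are experimenting with thinking (reasoning) turned off. It is a hands-on observation suggesting that Qwen-family models can improve substantially when the thinking process is disabled.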

left curve dev (@leftcurvedev_) on X

Main model in the setup is Qwen3.6 27B now (switched from the 35B A3B) and i'm also trying something: disabling thinking. @bnjmn_marie convinced me to give it a try, you can't ignore the numbers. Qwen models like to think, so it's big bump when you disable it. So far it's going

Dan McAteer (@daniel_mac8)

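Claude Mythos is described as very powerful, with a shared example of it completing @AISecurityInst's Network Attack scenario in 32 steps. The task would take a human about 20 hours, and the result has drawn attention to the model's security and attack-simulation capabilities.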

Ivan Fioravanti ᯅ (@ivanfioravanti)

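An observation that RepoPrompt Bench is very complex, with Kimi and GLM the only models mentioned as able to handle it reliably in the open setting. It is a brief evaluative tweet that hints at the benchmark's difficulty and at the limits and strengths of specific LLM families.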

#YonhapInfomax #MetaPlatforms #ArtificialIntelligence #MarkZuckerberg #GoogleGemini #ModelPerformance #Economics #FinancialMarkets #Banking #Securities #Bonds #StockMarket

https://en.infomaxai.com/news/articleView.html?idxno=109761

Meta Delays New AI Model Launch Due to Performance Shortfall Against Rivals

Meta Platforms postpones launch of new AI model codenamed 'Avocado' to at least May after it failed to match performance of rivals Google, OpenAI, and Anthropic in key areas including reasoning, coding, and writing capabilities, despite CEO Mark Zuckerberg's billions in AI investments.

Rohan Paul (@rohanpaul_ai)

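Ant Open Source has released LLaDA2.1 Flash. It is a 100B-parameter language diffusion MoE (mixture-of-experts) model, reported to reach a peak inference speed of 892 tokens per second, 2.5x faster than Qwen3-30B-A3B. The release emphasizes high real-time inference performance.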

https://x.com/rohanpaul_ai/status/2021643743313756658

#llm #inferencespeed #mixtureofexperts #antopensource #modelperformance

Rohan Paul (@rohanpaul_ai) on X

Ant Open Source just dropped LLaDA2.1 Flash. Insane inference speed for a 100B param language diffusion MoE model. Achieved a peak speed of 892 tokens per second beating the much smaller Qwen3-30B-A3B by 2.5x. The reason it could achieve this incredible speed is because it

StepFun (@StepFun_ai)

The Step 3.5 Flash model took first place on MathArena, with an overall score of 96.11% and 97% on AIME 2026 I. At $0.40 per run, it is an example of a model with 11B active parameters delivering both high performance and low cost.

Tibo (@thsottiaux)

The author claims that the latest release achieves SoTA coding performance and, by combining token efficiency with inference optimizations, is faster than last week's version. The post states that at high and xhigh reasoning effort, GPT-5.3-Codex is about 60-70% faster than GPT-5.2-Codex.

Tibo (@thsottiaux) on X

First time we combine SoTA on coding performance AND it is objectively the fastest thanks to combination of token-efficiency and inference optimizations. At high and xhigh reasoning effort, the two combine to make GPT-5.3-Codex ~60-70% faster than GPT-5.2-Codex from last week.

Chased/acc (@ChaseWang)

The Qwen3 30B model is reported to run in a home setup at about 20 tokens per second, and the author credits @exolabs for this performance.

--

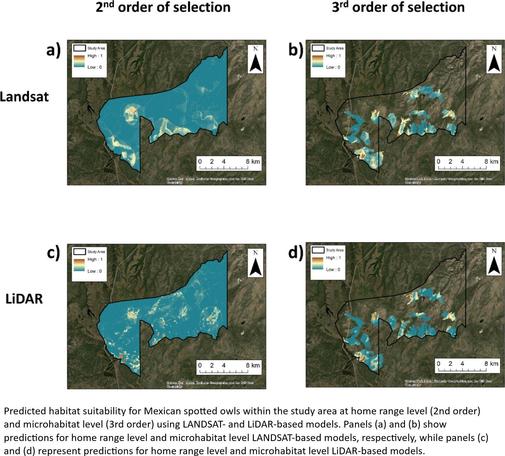

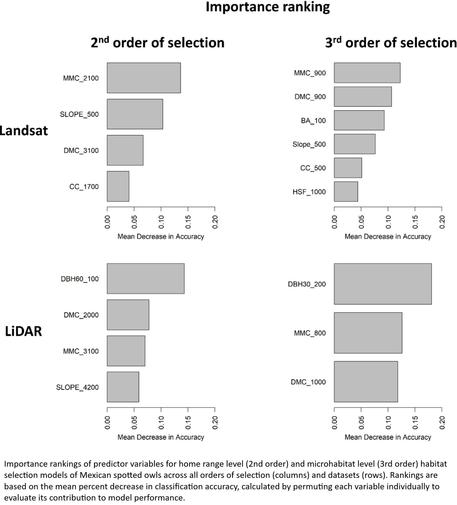

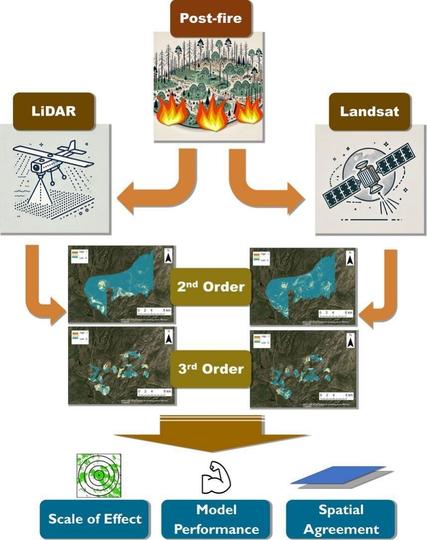

https://doi.org/10.1016/j.ecoinf.2025.103168 <-- shared paper

--

#GIS #spatial #mapping #Habitat #suitability #Megafire #Scaling #Habitatsuitability #selection #model #modeling #framework #Strixoccidentalis #Wildfire #bushfire #remotesening #LIDAR #elevation #ecosystem #LANDSAT #comparasion #mexican #spottedowl #avian #postfire #landscape #vegetation #ecosystem #damage #risk #hazard #earthobservation #scaling #scale #multifactor #homerange #microhabitat #ecology #recovery #spatialanalysis #spatiotemporal #modelperformance #reliability #accuracy #precision #forest #integration #conservation #landscape #fireaffected #spatialdisagreement