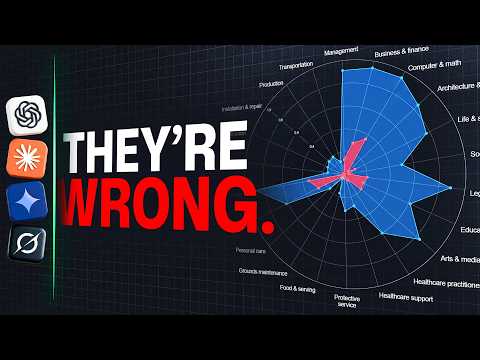

Math Behind "AI Will Replace Engineers" Is Embarrassingly Wrong

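This video explains in detail why the mathematical case behind the claim that "AI will replace engineers" is wrong. It covers how neural networks and transformers work, the limits of AI growth, hardware and infrastructure bottlenecks, and energy and cost constraints, and it analyzes the practical reasons AI is unlikely to fully replace jobs. It also discusses the actual pace of AI adoption, accountability issues, and reliability limits, and presents a realistic timeline for AI's impact.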