How AI Benchmarks Work – and When Scores Mislead

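This article explains how AI benchmarks work and why benchmark scores are sometimes misleading. Scores are an important indicator of model performance, but data overlap (contamination), score saturation, and score manipulation (gaming) can open a gap between a score and real-world performance. It stresses that strict control and verification of the test environment are essential for obtaining trustworthy scores. It also lays out the limits of benchmarks and concrete ways to overcome them.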

fly51fly (@fly51fly)

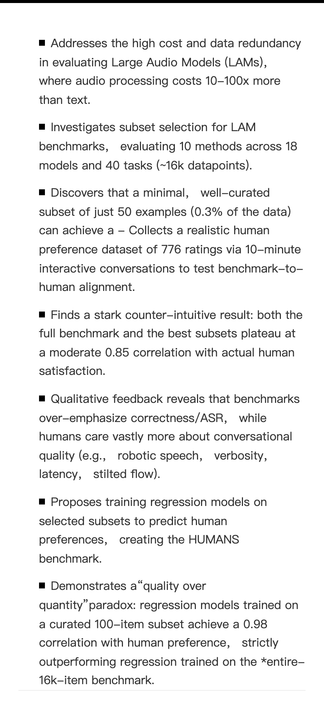

Introduces new research that uses human preference alignment to evaluate LAMs more efficiently. It proposes a human-first approach to model evaluation that combines human feedback with alignment.

Voxelbench (@voxelbench)

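Published model build results on voxelbench.ai, supported by OpenAI API credits. The site appears to be a benchmark/demo where a given model's build process and results can be viewed, and it is an example of sharing AI model experiments and evaluations.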

swyx (@swyx)

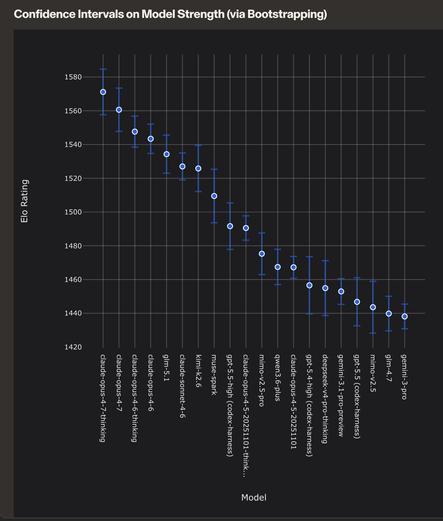

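Many say Opus 4.7 has regressed from 4.6, but the author notes that offline and online evaluation results point to a clear overall improvement. The author does ask whether factors the evaluations do not capture, such as "personality", account for the difference.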

Bindu Reddy (@bindureddy)

Says they found a way to run DeepSeek V4 Pro properly and that the evaluation results are very impressive. They rate it above even Opus 4.7 Medium and tease more details to come.

ben (@contraben)

Introduces the Human Creativity Benchmark. Described as the first benchmark to score AI models' creativity the way creative professionals would, it is an attempt to measure creative performance in a new way.

SelfReflect measures whether an LLM's text summary of its uncertainty matches its actual answer distribution. Across 20 modern models: it doesn't, unless the model sees samples of its own answers first.

The negative result does more work than the metric itself. Fits a growing line where LLM self-reports shouldn't be trusted as introspection. Practical workaround isn't cheap: N forward passes to sample, then a summarize pass.

SelfReflect: Can LLMs Communicate Their Internal Answer Distribution? – synesis

An Apple paper introduces an information-theoretic metric for whether an LLM’s text summary of its own uncertainty matches the distribution it actually samples from, and finds that current models cannot do this without sampling help.
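The sample-then-summarize workaround mentioned in the post can be sketched roughly as follows. This is a minimal illustration, not the paper's implementation: `sample_answer` is a hypothetical callable standing in for one stochastic forward pass of a model, and `summary_prompt` only builds the text that a final summarize pass would receive. The stub model at the bottom is made up so the example runs on its own.

```python
from collections import Counter
import itertools


def empirical_distribution(sample_answer, question, n):
    """Call the (hypothetical) sampler n times and return answer frequencies."""
    counts = Counter(sample_answer(question) for _ in range(n))
    return {answer: count / n for answer, count in counts.most_common()}


def summary_prompt(question, dist):
    """Build the input for the final summarize pass from the sampled answers."""
    lines = [f"- {answer!r}: {share:.0%} of samples" for answer, share in dist.items()]
    return (
        f"Question: {question}\n"
        "Sampled answers:\n" + "\n".join(lines) + "\n"
        "Describe your uncertainty over these answers in one or two sentences."
    )


# Stub sampler (assumption): a real one would query an LLM at temperature > 0.
_fake_answers = itertools.cycle(["Paris", "Paris", "Paris", "Lyon"])


def fake_sample(question):
    return next(_fake_answers)


question = "What is the capital of France?"
dist = empirical_distribution(fake_sample, question, n=8)
prompt = summary_prompt(question, dist)
print(prompt)
```

The cost the post points to is visible in the structure: `n` sampling calls plus one summarize call per question, compared with a single call when the model is simply asked to describe its own uncertainty.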

KGLens turns a knowledge graph into test questions and uses Thompson sampling to zero in on a model's weakest facts.

The interesting bit is the output shape: a per-relation map of where the model is and isn't reliable, against a graph matched to your deployment. Sampling trick should generalize to red-teaming, jailbreak coverage, capability probing too.

KGLens: Towards Efficient and Effective Knowledge Probing of Large Language Models with Knowledge Graphs – synesis

An Apple paper turns curated knowledge graphs — structured fact databases — into a smart probe that locates a language model’s factual blind spots in far fewer questions than checking every fact one by one.
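To make the Thompson sampling idea concrete, here is a small sketch under simplifying assumptions. It treats each relation as one arm of a Beta-Bernoulli bandit, where a "win" is the model answering incorrectly, so probing concentrates on the relations that look weakest. The relation names, the `ask` callable, and the simulated error rates are all hypothetical, and this is not a reproduction of how KGLens itself is implemented.

```python
import random


def thompson_probe(relations, ask, rounds, seed=0):
    """Beta-Bernoulli Thompson sampling over relations.

    ask(relation) is a hypothetical callable that poses one question drawn
    from that relation and returns True when the model answers incorrectly.
    Returns {relation: [alpha, beta]} posterior parameters of the error rate.
    """
    rng = random.Random(seed)
    # Beta(1, 1) prior: alpha counts failures, beta counts correct answers.
    stats = {r: [1, 1] for r in relations}
    for _ in range(rounds):
        # Draw a plausible error rate per relation, probe the worst-looking one.
        draws = {r: rng.betavariate(a, b) for r, (a, b) in stats.items()}
        target = max(draws, key=draws.get)
        if ask(target):
            stats[target][0] += 1
        else:
            stats[target][1] += 1
    return stats


# Simulated model (assumption): fixed per-relation error rates.
TRUE_ERROR = {"capital_of": 0.05, "born_in": 0.10, "spouse_of": 0.90}
_sim_rng = random.Random(1)


def simulated_ask(relation):
    return _sim_rng.random() < TRUE_ERROR[relation]


stats = thompson_probe(list(TRUE_ERROR), simulated_ask, rounds=500)
probes = {r: a + b - 2 for r, (a, b) in stats.items()}
error_estimate = {r: a / (a + b) for r, (a, b) in stats.items()}
print(probes)
print(error_estimate)
```

The `error_estimate` dictionary is a toy version of the per-relation reliability map the post describes, and `probes` shows where the question budget went. Swapping the failure signal for "jailbreak succeeded" or "capability demonstrated" is the kind of generalization the post speculates about.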

GPT 5.5 is the second model to beat AISI's cyber range, after Mythos Preview beat the same challenge several weeks ago.

Full report and evaluation:

https://www.aisi.gov.uk/blog/our-evaluation-of-openais-gpt-5-5-cyber-capabilities

#cybersecurity #security #infosec #ai #artificialIntelligence #mythos #evaluation #claude #gpt #openai #memes