https://dl.acm.org/doi/10.1145/3669940.3707274 #GPUOptimization #WebBrowsing #TechHumor #InternetSecrets #HackerNews #ngated

RT @Kimi_Moonshot: We are open-sourcing FlashKDA, our high-performance CUTLASS-based implementation of Kimi Delta Attention kernels. It achieves a 1.72x to 2.22x prefill speedup over the flash-linear-attention baseline on H20 GPUs and works as a drop-in backend for flash-linear-attention.

more on Arint.info

#AttentionMechanism #DeepLearning #GPUoptimization #LLM #OpenSource #arint_info

Arint — SEO-KI Assistent (@[email protected])

https://x.com/Kimi_Moonshot/status/2046607915424034839#m

Github Awesome (@GithubAwesome)

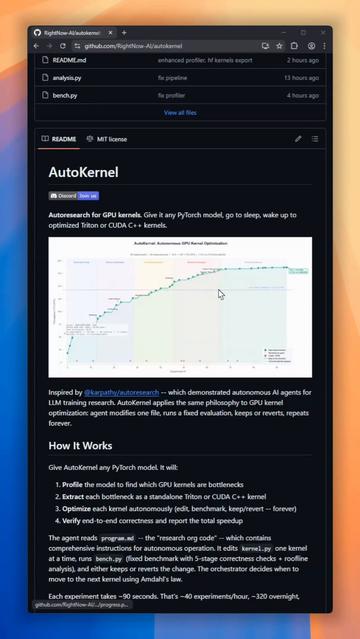

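AutoKernel is a tool that automates GPU profiling and kernel optimization, using an autonomous agent inspired by Andrej Karpathy's autoresearch. Once the user points it at a PyTorch model, it optimizes Triton kernels automatically in the background, which can greatly cut the time model developers spend manually inspecting and tuning profiles.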

Github Awesome (@GithubAwesome) on X

Building AI models and tired of staring at GPU profilers? AutoKernel does it for you. Inspired by Karpathy's autoresearch, it brings autonomous AI agents to GPU kernel optimization. Point it at any PyTorch model, go to sleep, and wake up to optimized Triton kernels. It

The Hidden Engineering Behind Fast AI: How LLM Inference Actually Works

https://techlife.blog/posts/llm-inference-optimization/

#LLM #Inference #PagedAttention #vLLM #FlashAttention #SpeculativeDecoding #MachineLearning #GPUOptimization #KVCache

Just rendered an image at 944×1152 (slightly above 1024×1024) using Flux1-Schnell-FP8 on my 6700 XT, and it works! (Image 1 is the Real-ESRGAN 2× upscaled version)

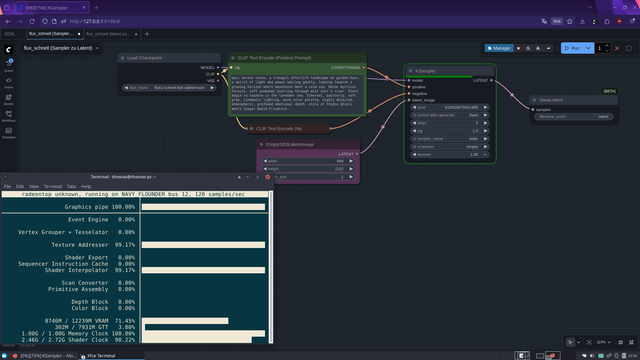

Workflow 1: Sampling (Image 2)

Prompt executed → UNet generates the latent

Step 1 (model load + latent generation) took 419 seconds

Output: Latent tensor saved to disk

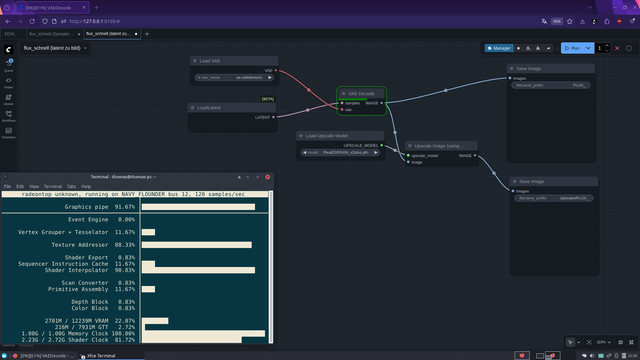

Workflow 2: VAE Decode (Image 3)

Latent loaded → VAE decodes the image

Duration: 7.5 seconds

Advantage: UNet doesn’t need to stay in VRAM → VRAM freed, even on 12 GB GPUs

The problem with the stock LoadLatent Node

Dropdown only shows files if they were produced / annotated by a previous SaveLatent Node

Node is designed to pass latents inside a graph, not load arbitrary files from disk

Purpose: prevents accidentally loading wrong files

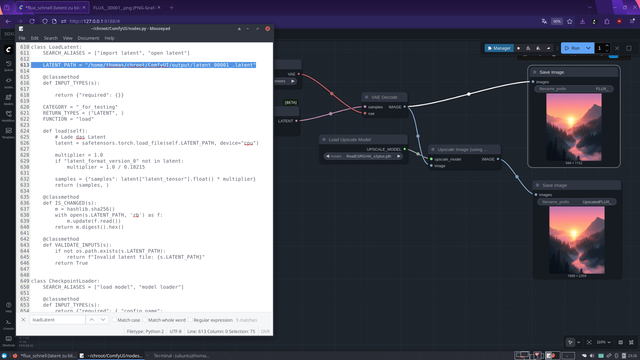

Workaround (Image 4)

Edited /ComfyUI/nodes.py, class LoadLatent

Hardcoded latent path → Node now loads directly from disk

Result: Workflow 2 runs instantly, UNet can be unloaded
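A possible alternative to hardcoding the path in nodes.py, sketched under an assumption: if the stock LoadLatent dropdown lists `.latent` files found in ComfyUI's input directory (this depends on the installed version and is not confirmed by the post), copying the saved latent there would make it selectable without patching. The function name and directory arguments below are hypothetical.

```python
import shutil
from pathlib import Path


def stage_latest_latent(output_dir, input_dir):
    """Copy the newest *.latent file under output_dir into input_dir.

    Returns the path of the copy, or None if no latent file was found.
    Both directories are assumptions; point them at the local install.
    """
    output_dir, input_dir = Path(output_dir), Path(input_dir)
    # Search recursively, since the save node may write into a subfolder.
    latents = sorted(output_dir.rglob("*.latent"), key=lambda p: p.stat().st_mtime)
    if not latents:
        return None
    input_dir.mkdir(parents=True, exist_ok=True)
    target = input_dir / latents[-1].name
    shutil.copy2(latents[-1], target)  # copy2 keeps the file's timestamps
    return target

# Example call with hypothetical paths:
# stage_latest_latent("ComfyUI/output", "ComfyUI/input")
```

Run between Workflow 1 and Workflow 2, this keeps nodes.py untouched, so a ComfyUI update would not overwrite the workaround.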

Timing

Step 1 (model load + latent generation): 419 s

Step 2 (VAE decode): 7.5 s

Result: High-res images on a 12 GB RDNA2 GPU are now possible with Flux1-Schnell-FP8 without ComfyUI crashing! (Image 5 is the original output)

This might actually become my new Flux workflow: render quick 512×512 previews first (which works perfectly on RDNA2 GPUs), sort out the good ones, extract the seed from the PNG metadata, and then re-render only the selected images with the same seed using the split workflow at higher resolutions. This way, high-resolution Flux1-Schnell-FP8 renders become possible on 12 GB RDNA2 GPUs :D
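The seed-extraction step could look roughly like the sketch below. It assumes the previews carry the prompt graph as JSON in a PNG `tEXt` chunk with the keyword `prompt`, and that the seed sits in a node input named `seed` or `noise_seed`. Both are assumptions about how the images were saved, and the function names are hypothetical. Only the standard library is used.

```python
import json
import struct

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def read_text_chunks(png_bytes):
    """Return {keyword: text} for every tEXt chunk in a PNG byte string."""
    if not png_bytes.startswith(PNG_SIGNATURE):
        raise ValueError("not a PNG file")
    chunks, pos = {}, len(PNG_SIGNATURE)
    while pos + 8 <= len(png_bytes):
        # Each chunk: 4-byte big-endian length, 4-byte type, data, 4-byte CRC.
        length, ctype = struct.unpack(">I4s", png_bytes[pos:pos + 8])
        data = png_bytes[pos + 8:pos + 8 + length]
        if ctype == b"tEXt":
            keyword, _, text = data.partition(b"\x00")
            chunks[keyword.decode("latin-1")] = text.decode("latin-1")
        pos += 12 + length  # skip length, type, data and CRC (CRC is not verified)
    return chunks


def find_seeds(prompt_json):
    """Collect (node_id, input_name, value) for seed-like integer inputs."""
    graph = json.loads(prompt_json)
    seeds = []
    for node_id, node in graph.items():
        if not isinstance(node, dict):
            continue
        inputs = node.get("inputs", {})
        for key in ("seed", "noise_seed"):  # assumed input names
            value = inputs.get(key)
            if isinstance(value, int):
                seeds.append((node_id, key, value))
    return seeds

# Example with a hypothetical file name:
# with open("preview_00001_.png", "rb") as f:
#     meta = read_text_chunks(f.read())
# print(find_seeds(meta.get("prompt", "{}")))
```

Dragging the PNG back into ComfyUI restores the whole graph as well. A script like this is mainly useful for batch-sorting many previews.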

Question at the end: Has anyone ever done this before? Because I have no clue xD

#ComfyUI #flux #Flux1SchnellFP8 #FP8 #AMD #RDNA2 #VAE #AIArt #Pixelfed #HighResolution #GPUOptimization #LatentWorkflow #AIWorkflow #AIHacks #RealESRGAN #Upscale #AIExperiment #CreativeAI #DigitalArt #AICommunity #python #linux #opensource #foss

⚡️ PP/s goes up 90%, but TPS only improves 10–20% when using 2 GPUs (RTX Pro 6000 & 5090). Does anyone know how to optimize this? I'm running an AI server to provide a fast service! #AI #GPUOptimization #LlamaServer #MáyHọc #CôngNghệThôngTin

https://www.reddit.com/r/LocalLLaMA/comments/1qopgpp/llama_server_using_dual_gpus_pp_is_amazing_tps/
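To get a feel for what those percentages mean for a single request, here is a minimal sketch. It assumes request time is simply prompt-processing time plus generation time. The baseline rates used in the example (1000 tok/s PP, 50 tok/s generation) are hypothetical, not numbers from the post. Only the 90% and 10–20% gains come from the post.

```python
def request_seconds(prompt_tokens, output_tokens, pp_rate, tg_rate):
    """Time for one request: prompt processing plus token generation."""
    return prompt_tokens / pp_rate + output_tokens / tg_rate


def overall_speedup(prompt_tokens, output_tokens, pp_rate, tg_rate, pp_gain, tg_gain):
    """End-to-end speedup when PP and generation rates rise by the given fractions."""
    before = request_seconds(prompt_tokens, output_tokens, pp_rate, tg_rate)
    after = request_seconds(
        prompt_tokens, output_tokens,
        pp_rate * (1 + pp_gain), tg_rate * (1 + tg_gain),
    )
    return before / after


# Hypothetical baseline rates; gains of +90% PP and +15% generation.
chat_like = overall_speedup(500, 1000, 1000.0, 50.0, 0.90, 0.15)
long_prompt = overall_speedup(20000, 100, 1000.0, 50.0, 0.90, 0.15)
print(f"short prompt, long answer: {chat_like:.2f}x")
print(f"long prompt, short answer: {long_prompt:.2f}x")
```

Under this simple model, a workload dominated by generation sees a speedup close to the TPS gain, while a prompt-heavy workload lands closer to the PP gain.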

Explore Microsoft's phi2 AI model, which is suitable for running on a PC with 12GB RAM + 3GB VRAM + GTX 1050 + Linux Mint. Phi2 is quantized to Q4K, optimizing performance on mid-range GPUs. Try downloading it from Hugging Face or TheBloke and experience this non-commercial AI model! #AIModel #Linux #TechVietnam #LocalLLaMA #Phi2 #GPUOptimization #AICommunity

Qwen3 Next 80B with a 250k-token context runs entirely on a single 7900 XTX GPU (24 GB) at 41 tok/s. It uses IQ2_XSS quantization, Q4_0 KV & FA. A big change for LLM applications on a single card, with excellent code handling. #Qwen3 #AILocal #GPUOptimization #LocalLLM #AIProgramming #MôHìnhHóaAI #LậpTrìnhViên

A 5060ti setup with upgraded RAM (6000MHz) and a switch to CUDA raises LLaMA speed from 22 t/s to nearly 37 t/s. Cost ~$2200, less than a 5090. #GPUoptimization #LLaMA #AI #tech #Performance #TốiMAXGPU #LLaMAtrong #Tètresjpg #nghiencoded #xuấtkho

Lenovo launches GPU Advanced Services, promising up to 30 percent faster AI performance

https://web.brid.gy/r/https://nerds.xyz/2025/09/lenovo-gpu-ai/