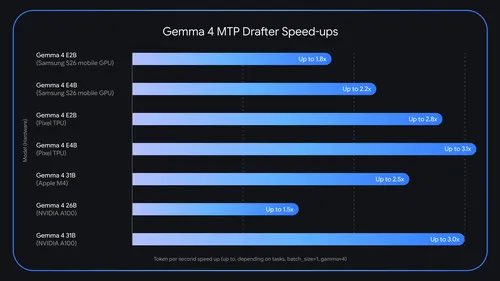

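Google has introduced Multi-Token Prediction (MTP) drafters for Gemma 4. A lightweight drafter speculates several tokens ahead and the target model verifies them in parallel, speeding up inference by up to 3x while preserving output quality and reasoning. The drafters are compatible with LiteRT-LM, MLX, vLLM, Hugging Face, and other runtimes, and are released under Apache 2.0 with weights available.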
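To make the draft-then-verify loop described above concrete, here is a minimal sketch of generic greedy speculative decoding. It is not Gemma 4's MTP implementation: `target_next` and `draft_next` are hypothetical toy stand-ins for the two models, and the block size `k` is an arbitrary choice. The point it illustrates is why quality is preserved: a drafted token is kept only when the target model would have produced the same token, so the result matches plain greedy decoding with the target alone.

```python
from typing import Callable, List, Tuple

# A "model" here is just a function from a token prefix to its greedy next token.
NextToken = Callable[[List[int]], int]


def target_next(prefix: List[int]) -> int:
    # Hypothetical stand-in for the large target model (a fixed deterministic rule).
    return (sum(prefix) * 31 + len(prefix)) % 50


def draft_next(prefix: List[int]) -> int:
    # Hypothetical stand-in for the lightweight drafter: it agrees with the
    # target except when the prefix length is a multiple of 4.
    tok = target_next(prefix)
    return tok if len(prefix) % 4 else (tok + 1) % 50


def greedy_decode(model: NextToken, prompt: List[int], n_new: int) -> List[int]:
    # Baseline: one model call per generated token.
    out = list(prompt)
    for _ in range(n_new):
        out.append(model(out))
    return out


def speculative_decode(
    target: NextToken, draft: NextToken, prompt: List[int], n_new: int, k: int = 4
) -> Tuple[List[int], int]:
    out = list(prompt)
    goal = len(prompt) + n_new
    target_passes = 0
    while len(out) < goal:
        # 1. The drafter proposes k tokens autoregressively (cheap).
        proposal: List[int] = []
        for _ in range(k):
            proposal.append(draft(out + proposal))
        # 2. The target scores all k + 1 positions. A real system does this in
        #    one batched forward pass; this toy loops but counts it as one pass.
        verified = [target(out + proposal[:i]) for i in range(k + 1)]
        target_passes += 1
        # 3. Accept the longest prefix on which drafter and target agree.
        n_ok = 0
        while n_ok < k and proposal[n_ok] == verified[n_ok]:
            n_ok += 1
        # 4. Keep the accepted tokens plus the target's own token at the first
        #    disagreement (or a bonus token if everything was accepted).
        out.extend(proposal[:n_ok])
        out.append(verified[n_ok])
    return out[:goal], target_passes


if __name__ == "__main__":
    tokens, passes = speculative_decode(target_next, draft_next, [1, 2, 3], n_new=20)
    print(tokens, passes)
```

Each verification pass yields at least one token, so the number of target passes never exceeds the number of generated tokens. How much it drops below that depends on how often the drafter agrees with the target, which is where any real-world speedup comes from.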

https://blog.google/innovation-and-ai/technology/developers-tools/multi-token-prediction-gemma-4/