bstn (@bstnxbt)

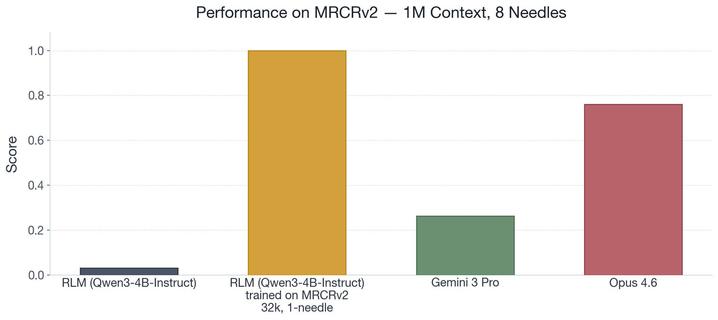

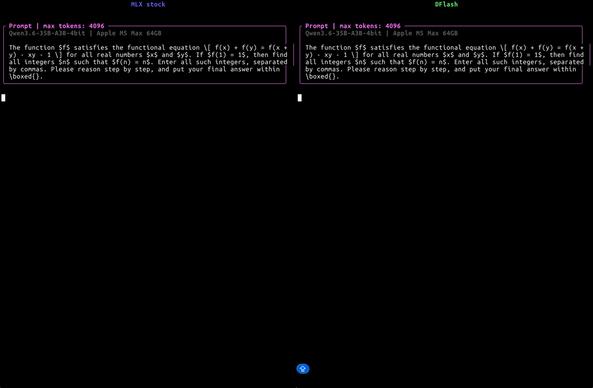

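DFlash v0.1.4 has been released, adding custom Metal verify kernels for quantized Qwen3 hybrid models and reducing peak memory at long context. On an M5 Max, token throughput improves substantially over the stock mlx_lm baseline (for example, 138.3 → 300.3 tok/s at 1024 for Qwen3.6-35B-A3B-4bit), which looks like a meaningful improvement for inference optimization on Apple silicon and the MLX ecosystem.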

bstn 👁️ (@bstnxbt) on X

DFlash v0.1.4 : custom Metal verify kernels for quantized Qwen3 hybrid models, plus significant peak memory reduction at long context.

M5 Max 40-core GPU, 64GB, stock mlx_lm baseline:

Qwen3.6-35B-A3B-4bit:

► @ 1024 · 138.3 → 300.3 tok/s (2.20x)

► @ 2048 · 135.6 → 246.4