Xfra AI

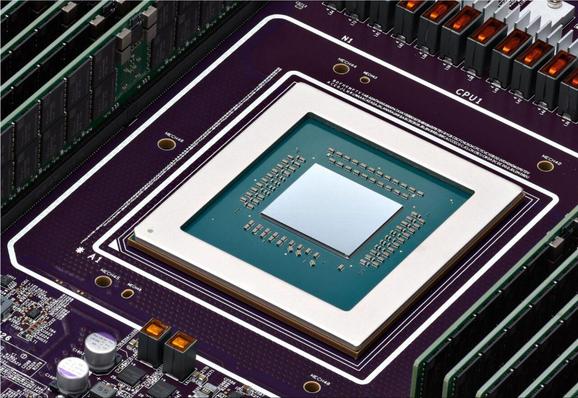

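XFRA is the first distributed data center to meet AI inference compute demand quickly and cheaply by using existing infrastructure and underutilized power capacity. With US data center power demand projected to reach 74 GW by 2028, XFRA addresses the power infrastructure bottleneck by tapping spare electrical headroom through XFRA nodes paired with SPAN's smart electrical panels. Each node has high-performance GPUs and CPUs, large-capacity memory, liquid cooling, and battery backup, and the XSOL orchestration layer links the nodes into a gigawatt-scale distributed AI inference cloud. This is an innovative approach to scaling AI infrastructure and improving energy efficiency.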

#distributedcomputing #aiinference #datacenter #powermanagement #span