Hark raised a $700 million Series A at a $6 billion valuation in May 2026

#aihardware #startupfunding #multimodalai #seriesafunding #personalaiassistant

ASUS (@ASUS)

ASUS introduced the ExpertCenter Pro ET900NG3, which pairs an NVIDIA GB300 Grace Blackwell Ultra Desktop Superchip with 748GB of coherent memory to deliver 20 PFLOPS of AI performance. It is high-performance hardware in the AI workstation/server class and is compatible with NVIDIA AI Enterprise and NemoClaw.

COMPLEXITIES OF AI HARDWARE UNPACKED AMIDST GROWING COMMUNITY EFFORTS

AI engineers are learning about GPU, CUDA, and PyTorch optimization from a new book and from meetups in Washington D.C. and Munich. This knowledge can help lower the cost of AI development.

#AIPerformance, #GPUOptimization, #CUDA, #PyTorch, #AIHardware

https://newsletter.tf/ai-hardware-performance-book-meetups-tips/

Was my $48K GPU server worth it?

https://rosmine.ai/2026/05/13/was-my-48k-gpu-worth-it/

#HackerNews #GPUserver #WorthIt #TechInvestment #AIHardware #CostAnalysis

OWC Stack AI brings Thunderbolt 5 local AI support to Windows and Linux

https://fed.brid.gy/r/https://nerds.xyz/2026/05/owc-stack-ai-thunderbolt-5/

Engadget (@engadget)

AMD unveiled a $3,999 Ryzen AI Halo PC aimed squarely at NVIDIA DGX Spark. The move strengthens AMD's positioning as an NVIDIA alternative in the AI workstation and edge inference market.

Natalie Fratto (@NatalieFratto)

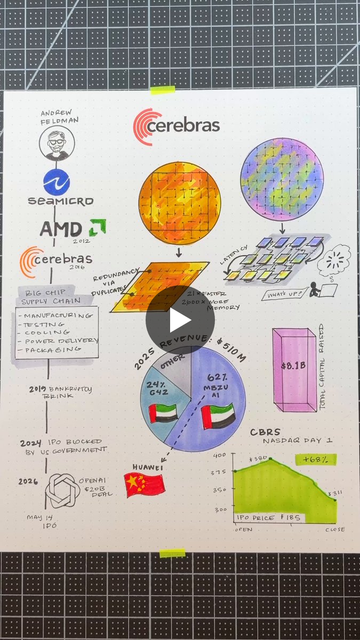

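This tweet explains how Cerebras moved past the limits of the traditional approach of dicing silicon wafers into hundreds of small chips, innovating on chip design by working around defects instead. It also mentions the size of the company's recent IPO, underscoring its standing as an AI chip infrastructure vendor.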

For the last 70 years, we've been dicing up silicon wafers into hundreds of tiny chips. We had to because it was the only way to cut out the defects inside the silicon. @cerebras, which IPOed last week (the biggest tech IPO this year so far in terms of capital raised), found an