https://unsloth.ai/blog/nvidia-collab #GPUs #LLMTraining #TechNews #HackerNews #ngated

How Unsloth and Nvidia made LLM training 25% faster on consumer GPUs

https://unsloth.ai/blog/nvidia-collab

#HackerNews #Unsloth #Nvidia #LLMtraining #ConsumerGPUs #AItechnology

Unsloth AI (@UnslothAI)

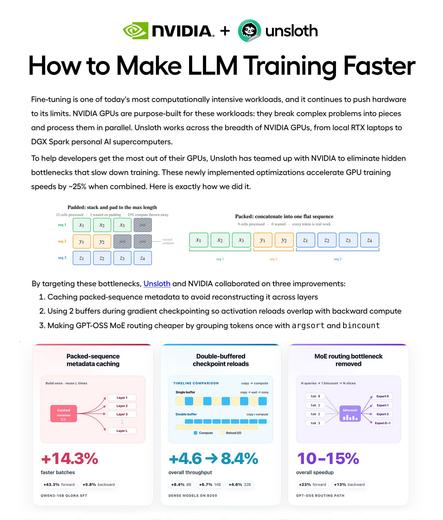

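Unsloth, in collaboration with NVIDIA, has published how it made LLM training about 25% faster. The guide covers three optimizations (packed-sequence metadata caching, double-buffered checkpoint reloads, and faster MoE routing) for training models faster even on home GPUs.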

Unsloth AI (@UnslothAI) on X

We collaborated with NVIDIA to teach you how we made LLM training ~25% faster! 🚀 Learn how 3 optimizations help your home GPU train models faster: 1. Packed-sequence metadata caching 2. Double-buffered checkpoint reloads 3. Faster MoE routing Guide: https://t.co/nwvVfNC8XE
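The post only names the first optimization, so here is a rough sketch of what caching packed-sequence metadata could look like. It is not Unsloth's code: `packed_metadata` and the sample lengths are hypothetical, and it assumes the metadata in question is the cumulative sequence boundaries plus the longest sequence length, computed once per packed batch and reused by every layer.

```python
from functools import lru_cache
from itertools import accumulate


@lru_cache(maxsize=128)
def packed_metadata(seq_lens: tuple[int, ...]) -> tuple[tuple[int, ...], int]:
    """Return (cumulative boundaries, longest sequence) for one packed batch.

    Hypothetical helper: the result is cached on the tuple of lengths, so
    repeated calls for the same batch skip the recomputation.
    """
    cu_seqlens = (0, *accumulate(seq_lens))
    return cu_seqlens, max(seq_lens)


# Three sequences of lengths 3, 5 and 2 packed into one row of 10 tokens.
lens = (3, 5, 2)

# Every "layer" asks for the same metadata; only the first call computes it.
for _layer in range(4):
    cu_seqlens, max_len = packed_metadata(lens)
```

After the loop, `packed_metadata.cache_info()` reports one miss and three hits, which is the whole point: the per-batch bookkeeping is paid once instead of once per layer.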

JMoon (@Jmoon_174)

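The post explains that RLVR and process reward models are a key method for teaching actual reasoning ability, rather than simple pattern matching, by rewarding intermediate reasoning steps as well as whether the final answer is correct. It can be seen as an important technical insight for research on training AI reasoning.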
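To make the distinction concrete, here is a toy sketch of an outcome-only reward next to one that also credits intermediate steps. Everything in it is an assumption for illustration: the function names, the per-step scores (which a real process reward model would produce), and the 50/50 weighting are not taken from the linked post.

```python
def outcome_reward(final_answer: str, reference: str) -> float:
    """Verifiable outcome reward: 1.0 only if the final answer matches."""
    return 1.0 if final_answer.strip() == reference.strip() else 0.0


def process_reward(
    step_scores: list[float],
    final_answer: str,
    reference: str,
    step_weight: float = 0.5,  # hypothetical weighting, not from the post
) -> float:
    """Blend the mean per-step score with the outcome reward."""
    outcome = outcome_reward(final_answer, reference)
    if not step_scores:
        return outcome
    step_term = sum(step_scores) / len(step_scores)
    return step_weight * step_term + (1.0 - step_weight) * outcome


# Correct answer reached through one sound step and one flawed step.
blended = process_reward([1.0, 0.0], "42", "42")
```

With these inputs the outcome-only reward would be 1.0, while the blended reward is lower because one reasoning step scored zero.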

https://x.com/Jmoon_174/status/2050592670964412618

#rlvr #processrewardmodel #reasoning #llmtraining #airesearch

I asked Reddit AI for hidden gems in Switzerland 🇨🇭.

Here's what it suggested:

Marmot Wrestling Rings in Valais: A quirky and unique experience.

Aromat Mines Under Olten: A peculiar and interesting visit.

Cheese Wars in the South: Witness a 100kg wheel of Emmental launched by a trebuchet.

Yes, I think they trained their LLM with posts from certain Swiss subreddits...

ASUS ExpertCenter Pro ET900N G3 brings NVIDIA Grace Blackwell Ultra AI supercomputing power to the desktop

https://fed.brid.gy/r/https://nerds.xyz/2026/03/asus-expertcenter-pro-et900n-g3-ai-supercomputer/

Autoresearch_at_home – SETI_at_home but for LLM training

https://www.ensue-network.ai/autoresearch

#HackerNews #Autoresearch_at_home #SETI_at_home #LLMtraining #AIresearch #TechInnovation

RE: https://mastodon.social/@verge/116204214756875751

“Each of these data companies touts its stable of pedigreed experts… Surge AI advertises its Supreme Court litigators, McKinsey principals, and platinum recording artists… Job listings seek chefs, management consultants, wildlife-conservation scientists, archivists, private investigators, police sergeants, reporters, teachers, and rental-counter clerks… It is, as one industry veteran put it, the largest harvesting of human expertise ever attempted.”

Snowflake's Arctic Long Sequence Training: How to Train LLMs on 15 Million Tokens Without Selling a Kidney

#ALST #Snowflake #LongContextTraining #DeepSpeed #HuggingFace #SequenceParallelism #LLMTraining #H100 #Llama8B #Qwen3 #GPUMemoryOptimization

Snowflake AI Research just open-sourced Arctic Long Sequence Training (ALST), a framework that pushes LLM training from a measly 32K tokens to over 15 million, a 469x improvement, using standard Hugging Face models and H100 GPUs. Here's what it means for you.