What is Nvidia? A look at the GPU company's areas of business

Boris Cherny (@bcherny)

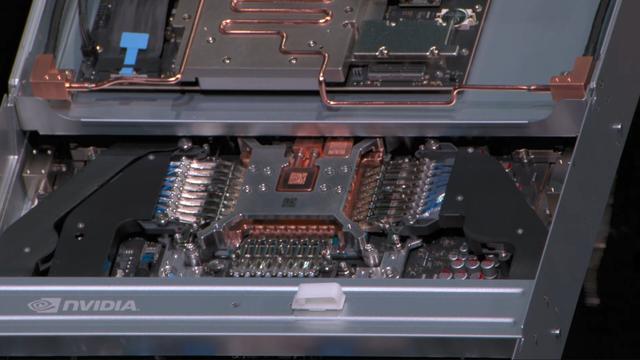

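Announced a compute partnership with SpaceX that will bring more than 300 MW of new capacity and 220,000 NVIDIA GPUs online within a month. The infrastructure powers Claude Pro and Max, and it is a significant hardware investment for scaling large AI services.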

OpenAI (@OpenAI)

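Together with AMD, Broadcom, Intel, Microsoft, and NVIDIA, released MRC (Multipath Reliable Connection), a new open networking protocol that improves the speed and reliability of large AI training clusters. The key point is that it supports more efficient distributed training by reducing GPU idle time.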

OpenAI (@OpenAI) on X

We’ve partnered with @AMD, @Broadcom, @Intel, @Microsoft, and @NVIDIA, to release Multipath Reliable Connection (MRC), a new open networking protocol that helps large AI training clusters run faster and more reliably, with less wasted GPU time. https://t.co/AiV952AJXs

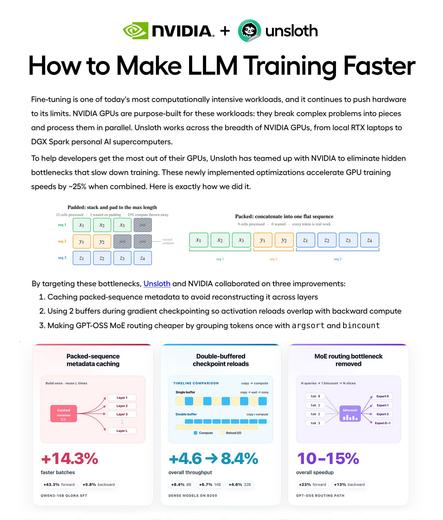

Unsloth AI (@UnslothAI)

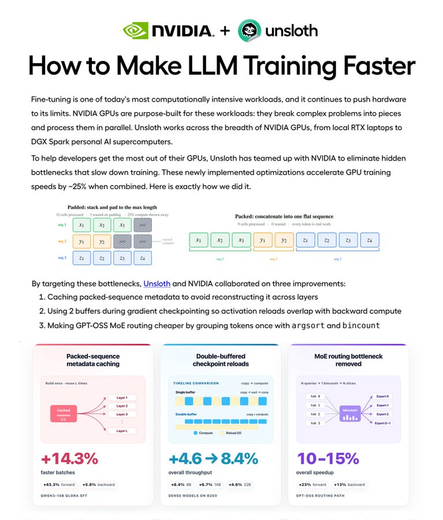

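Collaborated with NVIDIA to publish a way to make LLM training about 25% faster. The guide covers three optimizations (packed-sequence metadata caching, double-buffered checkpoint reloads, and faster MoE routing) that let models train faster even on home GPUs.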

Unsloth AI (@UnslothAI) on X

We collaborated with NVIDIA to teach you how we made LLM training ~25% faster! 🚀 Learn how 3 optimizations help your home GPU train models faster: 1. Packed-sequence metadata caching 2. Double-buffered checkpoint reloads 3. Faster MoE routing Guide: https://t.co/nwvVfNC8XE
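
As a rough illustration of the first optimization named above, here is a minimal sketch of what caching packed-sequence metadata could look like. It is not Unsloth's or NVIDIA's implementation: the function name `packed_metadata` and the choice of `functools.lru_cache` are assumptions made for this example. The idea is that when several short sequences are packed into one long row, every layer needs the same boundary offsets, so they can be computed once per batch and reused.

```python
from functools import lru_cache
from itertools import accumulate


# Hypothetical helper (not from the guide): derive boundary metadata for a
# batch of sequences packed back to back into a single row.
@lru_cache(maxsize=128)
def packed_metadata(seq_lens: tuple[int, ...]) -> tuple[tuple[int, ...], int]:
    """Return (cumulative boundaries, longest sequence length)."""
    # Boundaries start at 0 and end at the total packed length.
    cu_seqlens = (0, *accumulate(seq_lens))
    return cu_seqlens, max(seq_lens)


# Every layer asks for the same metadata; only the first call computes it.
lens = (5, 3, 8)
for _layer in range(4):
    cu_seqlens, max_len = packed_metadata(lens)

print(cu_seqlens, max_len)
print(packed_metadata.cache_info())
```

The lengths are passed as a tuple so they are hashable and can serve as the cache key. In a real training loop the metadata would live in GPU tensors, and the saving would come from avoiding repeated recomputation per layer.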

NVIDIA and Corning want to build America’s AI backbone with new factories and thousands of jobs

https://fed.brid.gy/r/https://nerds.xyz/2026/05/nvidia-corning-ai-manufacturing/

Anthropic risks Claude backlash with Elon Musk SpaceX partnership

https://fed.brid.gy/r/https://nerds.xyz/2026/05/anthropic-claude-elon-musk-spacex/

NVIDIA Spectrum-X Ethernet MRC is the Custom RDMA Transport Protocol for Gigascale AI

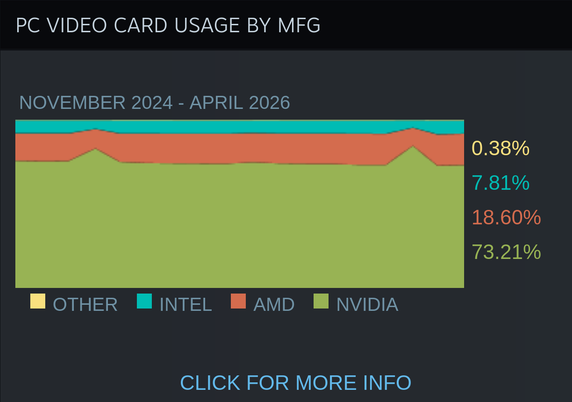

1 in 4 people who use Steam either don't play games or struggle with them.

The RTX 5060 and 5060 Ti saw the most gains, as people slowly dispose of their old computers with Intel iGPUs and AMD graphics.

#Videogames #Gaming #Games #NVIDIA #RTX #AMD #Radeon #Steam #SteamHardwareSurvey #Valve #PC #PCGaming #PCGames #PCHardware #Hardware

Akshay (@akshay_pachaar)

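NVIDIA and Unsloth have released a guide on making fine-tuning 25% faster. It presents systems-level techniques, including packed-sequence metadata caching and double-buffered checkpoints, as the key optimizations for faster GPU training. A practical resource for improving AI model training efficiency.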

Akshay 🚀 (@akshay_pachaar) on X

NVIDIA + Unsloth just dropped a guide on making fine-tuning 25% faster. this is hands-down the cleanest systems-level writeup i've read. you'll learn how 3 optimizations help your gpu train models faster: 1. packed-sequence metadata caching 2. double-buffered checkpoint