🚀 This Week in AI Research: Fastest-Growing Projects — April 17, 2026

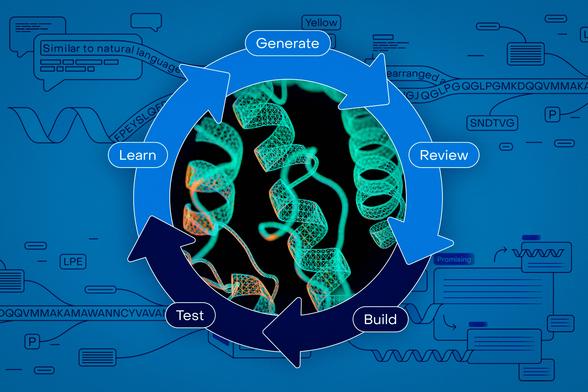

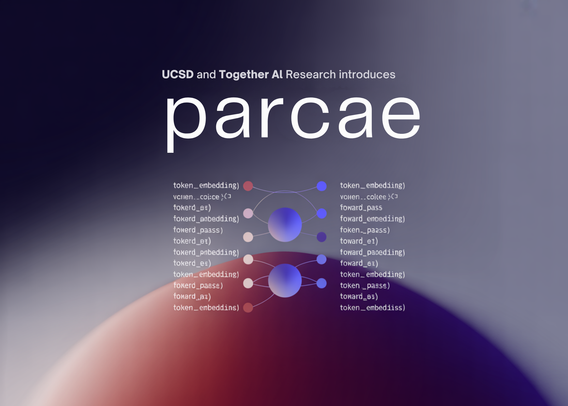

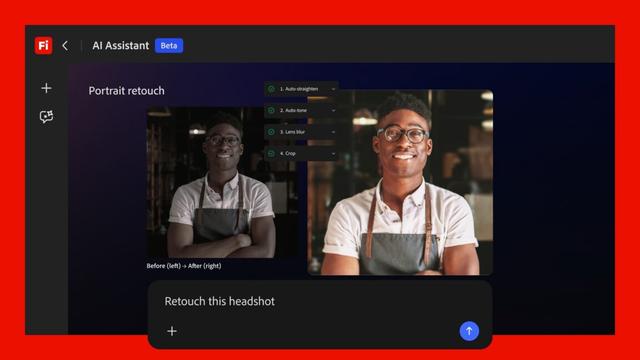

This week, the AI Research space on GitHub saw a surge in activity around machine learning primers, spatial research tools, and autonomous improvement loops. Researchers are increasingly turning to op...

Read full report → https://pullrepo.com/report/this-week-in-ai-research-fastest-growing-projects-april-17-2026