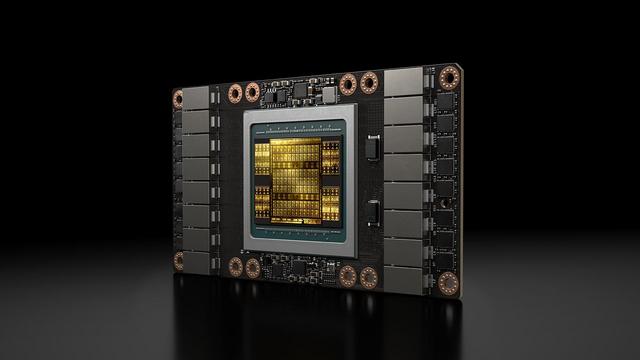

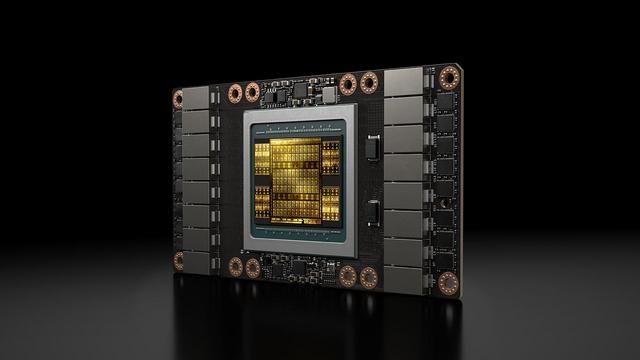

amd-instinct-miI355x-platform-brochure.pdf

https://www.amd.com/content/dam/amd/en/documents/instinct-tech-docs/product-briefs/amd-instinct-miI355x-platform-brochure.pdf

You will not get cheap #GPUs once the #AI craze is gone.

These are not runnable by you. (Well, most of you.)

AMD is reportedly developing an entry-level RDNA 4 GPU with 8GB of VRAM — RX 9050 rumored to debut with 2048 cores, more than RX 9060

NVIDIA CEO Jensen Huang tells graduates to embrace AI despite fears it could replace them

https://fed.brid.gy/r/https://nerds.xyz/2026/05/nvidia-jensen-huang-ai-graduates/

https://winbuzzer.com/2026/05/11/micron-memory-bottlenecks-threaten-ai-inference-efficiency-xcxwbn/

Micron's Jeremy Werner says memory limits are becoming the constraint that can keep expensive data-center GPUs from running AI inference efficiently.

#AI #AIInference #Micron #AIInfrastructure #AICompute #AIChips #AIHardware #GPUs #HBM #DataCenters #JeremyWerner

https://winbuzzer.com/2026/05/11/enterprises-face-underused-gpu-fleets-as-ai-costs-rise-xcxwbn/

Enterprise AI buyers are hitting a new cost wall as reported GPU utilization stays near 5% even while infrastructure spending keeps rising.

#AI #AIInfrastructure #GPUs #AIInference #AICompute #EnterpriseAI #DataCenters #AIInvestment #Nvidia

$200 'socketed' Nvidia AI GPU for servers hacked into a PCIe card with custom PCB and 3D-printed cooling: modded Tesla V100 SXM data center GPU runs AI LLMs and is more efficient than many modern midrange offerings in AI inference

Testing Nvidia's RTX Mega Geometry tech — VRAM-reducing tech a leap forward for path-traced rendering

NB: This is not to imply that #gpus and accelerated computing (vectorising matrix-multiply) are incompatible with a human-centric digital landscape.

#Algorithms are powerful stuff. That is why they need to be in the service and under the control of society, or, as the cliché goes, empowering all individuals, not just the #techbros.

This constrains the manner in which algorithms are developed and deployed, and ultimately what kind of silicon we need.

3/