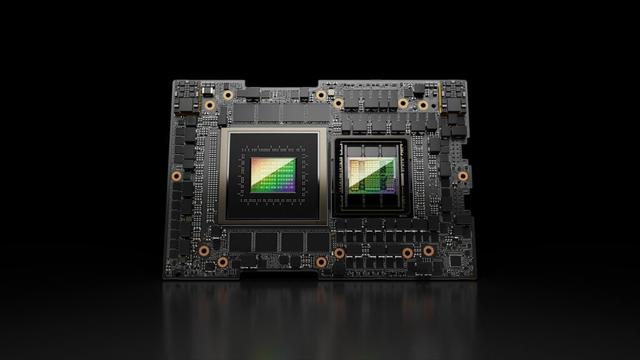

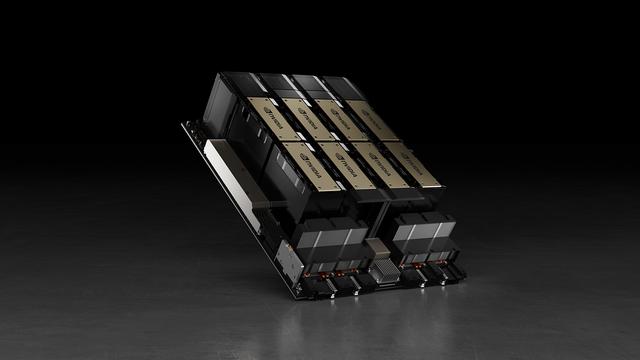

RT @0xSero: Over the last 6 months it has become increasingly difficult to get H100/H200 GPUs. I haven't seen a GPU pod in 2-3 months. Anjney Midha (@AnjneyMidha): Apparently not everyone is sharing this, so I'm sharing it here: since January 2026, GPU rental prices have risen by 2x+. We are living through the "COVID of compute", and all the toilet paper is gone. Stay safe, researchers: https://nitter.net/AnjneyMidha/status/2058611711867801989#m

More at Arint.info

#Forschung #GPU #H100 #KünstlicheIntelligenz #Rechenleistung #arint_info