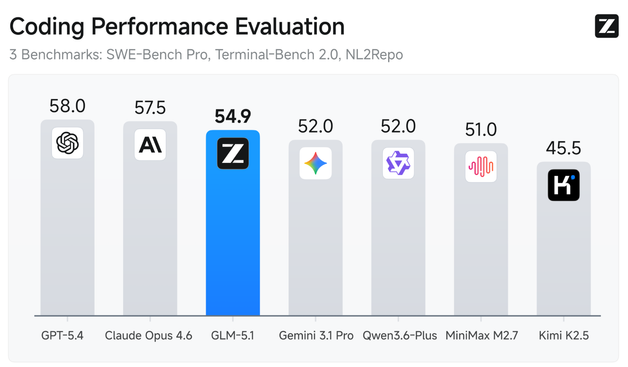

RT @UnslothAI: Qwen3.6-27B can now be run locally! 💜 Run it on 18GB RAM with Unsloth Dynamic GGUFs. Qwen3.6-27B surpasses Qwen3.5-397B-A17B on all major coding benchmarks. GGUFs: huggingface.co/unsloth/Qwen3… Guide: unsloth.ai/docs/models/qwen3…

Qwen (@AlibabaQwen): 🚀 Meet Qwen3.6-27B, our latest model with flagship-level coding power! Yes, 27B, and Qwen3.6-27B punches way above its weight. 👇 What's new: 🧠 Outstanding agentic coding: surpasses Qwen3.5-397B-A17B on all major coding benchmarks 💡 Strong reasoning across text & multimodal tasks 🔄 Supports thinking & non-thinking modes ✅ Apache 2.0: fully open, fully yours…

More on Arint.info

#Apache #Github #HuggingFace #nitter #Qwen #Qwen3 #Qwen35397 #Qwen36 #Qwen3627 #Unsloth #arint_info

https://x.com/UnslothAI/status/2046959757299487029#m