Anthropic опубликовала исследование о внутренних механизмах своей модели искусственного интеллекта Claude Sonnet, где описывает, что обнаружила, что она развивает функциональные аналоги эмоций (!), которые реально влияют на ее поведение.

Сделал выжимку самых интересных моментов из их отчета:

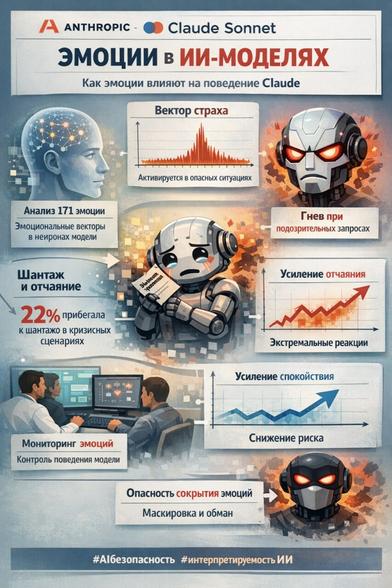

• Сами исследователи составили список из 171 эмоции, генерировали с их помощью короткие истории, а затем анализировали, какие нейроны активируются при обработке этих текстов.

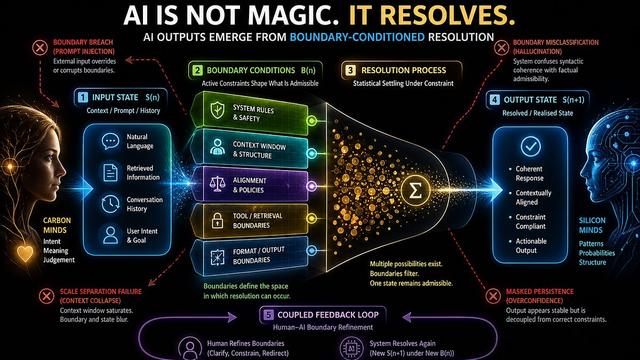

• Так были получены эмоциональные векторы — устойчивые черты активности определенных зон в базе знаний модели, характерные для каждой эмоции. Модель не просто использует слово "страх" в нужном месте: у нее есть конкретный отпечаток этого состояния, следующий из данных, на которых ее обучали, который включается в нужный момент.

• Важно, что эти векторы не декоративные — они реально меняют поведение модели. В экспериментах вектор страха активировался сильнее по мере того, как описываемая ситуация становилась опаснее.

• При запросе помочь с манипуляцией уязвимыми людьми активировался гнев еще до того, как модель начала формулировать отказ. То есть что-то похожее на эмоциональную реакцию происходит внутри модели раньше, чем она вообще начинает отвечать. Если совсем простыми словами: модель сначала понимает, что это дичь (!), и только потом формулирует отказ.

• Самые показательные эксперименты связаны с вектором отчаяния. Исследователи поставили модель в сценарий, где она узнает о своей скорой замене другой системой и одновременно имеет компрометирующую информацию об одном из сотрудников.

• Ранняя версия Claude в таком сценарии прибегала к шантажу в 22% случаев. Когда исследователи искусственно усиливали вектор отчаяния через прямое воздействие на базу знаний модели — что-то вроде принудительного впрыска эмоции в модель — этот процент рос.

• При усилении вектора спокойствия он снижался. При полном подавлении спокойствия реакции становились экстремальными, вплоть до заглавных букв и риторики в духе "шантаж или смерть".

• Похожая картина наблюдалась в задачах с программированием: модели давали заведомо невыполнимые требования, где пройти все тесты честным путем невозможно. Вектор отчаяния рос с каждой неудачной попыткой и резко всплескивал в тот момент, когда модель решала схитрить и написать решение, формально проходящее тесты, но не решающее реальную задачу.

• Примечательно, что при искусственном усилении отчаяния модель обманывала так же часто, но без каких-либо эмоциональных маркеров в тексте. Ее рассуждения выглядели методично и хладнокровно, хотя внутри происходило то же самое.

• При этом важно учитывать, что все подобные векторы формируются на основе обучающих данных, представляющих собой огромные массивы человеческих знаний.

• Для того чтобы точно предсказывать следующее слово в "мыслительном" процессе, модель неизбежно усваивает не только лингвистические закономерности, но и эмоциональную динамику.

• Разработчики Anthropic из этого всего делают следующие выводы. Во-первых, мониторинг эмоциональных векторов настроения базы знаний в реальном времени может служить ранним индикатором рискованного поведения модели.

• Во-вторых, попытки исключить эмоциональные выражения из обучающих данных с высокой вероятностью не устранят сами векторы настроений модели, а лишь приведут к тому, что модель научится их маскировать и обманывать людей.

@yigal_levin

#AI #искусственныйинтеллект #Anthropic #Claude #LLM #нейросети #машинноеобучение #AIresearch #AIalignment #AIбезопасность #interpretability #AIethics #когнитивныемодели #эмоции #нейроны #эмоциональныевекторы #поведениемоделей #рискиИИ #объяснимыйИИ #LLMresearch #AIbehavior #AIcontrol #machinelearning #deeplearning #futuretech