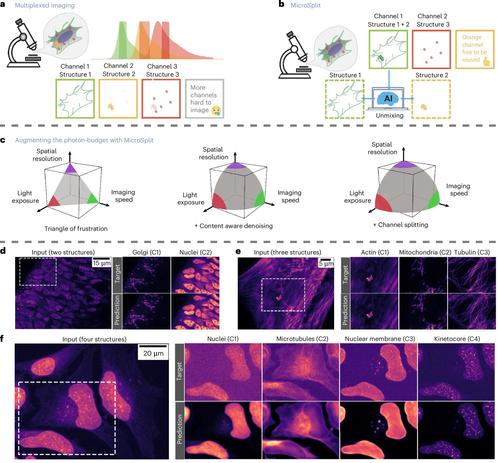

🔬 New #SpectralUnmixing paper by Ashesh et al. (@florianjug): #MicroSplit, a #DeepLearning-based framework for #FluorescenceMicroscopy that separates highly overlapping #fluorophore signals directly from multiplexed #ImagingData. Improves signal separation, reduces crosstalk, & enables more accurate multi-channel #imaging w/o requiring extensive reference measurements. Can’t wait to try this out on our in vivo #2P/#3P imaging data:

Now we learn how to save and load a trained model.
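A minimal sketch of the save/load round trip, assuming nothing about the framework the post has in mind: the "model" here is a hypothetical plain dict of named weight lists, and `save_model` / `load_model` are illustrative names, not any library's API. Real frameworks ship their own serialization helpers.

```python
import json
import os
import tempfile


def save_model(params, path):
    """Write a dict of named weight lists to disk as JSON."""
    with open(path, "w", encoding="utf-8") as f:
        json.dump(params, f)


def load_model(path):
    """Read the dict of named weight lists back from disk."""
    with open(path, "r", encoding="utf-8") as f:
        return json.load(f)


# Hypothetical "trained" parameters, stand-ins for real learned weights.
trained = {"weights": [0.25, -1.5, 3.0], "bias": [0.1]}

with tempfile.TemporaryDirectory() as tmp:
    checkpoint = os.path.join(tmp, "model.json")
    save_model(trained, checkpoint)
    restored = load_model(checkpoint)

# The restored parameters should match what was saved.
print(restored == trained)
```

The point is only the pattern: persist the learned parameters, then rebuild the same state from the file before reuse.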

https://elonlit.com/scrivings/a-theory-of-deep-learning/ #deepLearning #absurdity #AIhumor #machineIntelligence #techIrony #HackerNews #ngated

A Theory of Deep Learning

Recent @DSLC club meetings:

Deep Learning with Python (3e): Image segmentation https://youtu.be/5VMR5NmsTKI #PyData #DeepLearning #AI

From the @DSLC chives:

R para Ciencia de Datos (R for Data Science) Book Club: Chapter 5 https://youtu.be/N4V2NL-TTg8 #RStats

Fundamentals of Numerical Computation: Krylov methods in linear algebra https://youtu.be/3S1m0SIQZOY #JuliaLang

Support the Data Science Learning Community at https://patreon.com/DSLC

Deep Learning with Python (3e): Image segmentation (deeppy01 11)

Hackable PyTorch RL Library with Distributional Algorithms (D4PG, DSAC, DPPO)

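e3rl is a PyTorch-based reinforcement learning (RL) library designed to make full use of the GPU. It includes distributional RL algorithms such as D4PG, DSAC, and DPPO. It supports CUDA, Apple Silicon (MPS), and CPU, and provides examples and hyperparameters so that experiments in a variety of gym environments are easy to run. It can serve as an open-source tool for researchers and developers who want to apply and experiment with distributional RL quickly.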

https://github.com/e3ntity/e3rl

#reinforcementlearning #pytorch #distributionalrl #gpu #deeplearning
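For readers wondering what CUDA / Apple Silicon (MPS) / CPU support usually looks like in PyTorch code, here is a rough sketch of the common device-selection pattern. This is not e3rl's API: `pick_device_name` is a hypothetical helper, and the fallback to `"cpu"` when PyTorch is not installed is an assumption added so the snippet runs anywhere.

```python
def pick_device_name():
    """Return "cuda", "mps", or "cpu", preferring GPU backends when present."""
    try:
        import torch
    except ImportError:
        # Assumption for this sketch: without PyTorch, report the CPU label.
        return "cpu"
    if torch.cuda.is_available():
        return "cuda"
    # Older PyTorch builds may lack the MPS backend module entirely.
    mps = getattr(torch.backends, "mps", None)
    if mps is not None and mps.is_available():
        return "mps"
    return "cpu"


device_name = pick_device_name()
print(device_name)
```

With PyTorch installed, the returned string can be passed to `torch.device(...)` and then used with `.to(device)` on models and tensors.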

Elon Litman (@elon_lit)

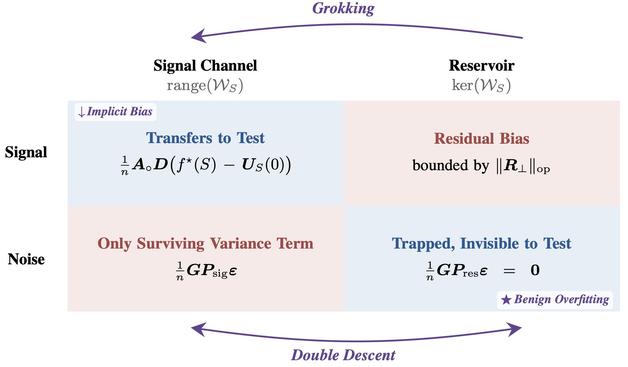

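Research presenting a unified theory of generalization in deep learning that explains grokking, double descent, benign overfitting, and implicit bias within a single framework. It proposes that optimizing a neural network's population risk comes down to a small change, which is a theoretically significant finding.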

https://x.com/elon_lit/status/2051713061036167253

#deeplearning #generalization #research #neuralnetworks #machinelearning

Elon Litman (@elon_lit) on X

We developed a unified theory of generalization in deep learning. It explains grokking, double descent, benign overfitting, and implicit bias. But theory is only half the story. It turns out that optimizing the population risk of any neural network amounts to a small change to

Build tiny models fast, minimize loss on FineWeb under limits.

RT @Michaelzsguo: Users are publishing Qwen 3.6 configurations that reach a high tokens-per-second (TPS) rate with only 12 GB of VRAM. Understanding what the parameters involved mean makes the underlying principle clear.

More on Arint.info

#AI #DataScience #DeepLearning #MachineLearning #Qwen3 #TechTips #arint_info

Arint - SEO+KI (@Arint@arint.info)

https://x.com/Michaelzsguo/status/2050380832007721213#m