Crash Course on the History of Artificial Intelligence 🎧 [Recommendation] This podcast gives a clearly structured account of how AI has developed from its beginnings to the present day. Listen from the start for the full picture! #HistoryOfAI #LịchSửTríTuệNhânTạo #Podcast #AI #TechHistory #LịchSửCôngNghệ

https://www.reddit.com/r/singularity/comments/1pny4ft/crash_course_on_history_of_ai/

Artificial Intelligence - Past, Present, Future: Prof. W. Eric Grimson

The average chess players of Bletchley Park and AI research in Britain

#HackerNews #averagechessplayers #BletchleyPark #AIresearch #Britain #historyofAI #chessandtech

Just revisited The Quest for Artificial Intelligence by Nils J. Nilsson — a brilliant walk through the history, breakthroughs & ethical crossroads of AI.

A must-read if you’re building or governing AI today.

https://ai.stanford.edu/~nilsson/QAI/qai.pdf

From the 1990s onward, statistical n-gram language models trained on vast text collections became the backbone of NLP research. They fueled advances in nearly all NLP techniques of the era and laid the groundwork for today's AI.

F. Jelinek (1997), Statistical Methods for Speech Recognition, MIT Press, Cambridge, MA

#NLP #LanguageModels #HistoryOfAI #TextProcessing #AI #historyofscience #ISE2025 @fizise @fiz_karlsruhe @tabea @enorouzi @sourisnumerique
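To make the idea concrete, here is a minimal bigram (n = 2) language model with maximum-likelihood estimates, P(w_i | w_{i-1}) = count(w_{i-1}, w_i) / count(w_{i-1}). It is an illustrative sketch, not Jelinek's method: the toy corpus, the `<s>`/`</s>` boundary markers and the function names are assumptions chosen for the example, and no smoothing is applied.

```python
from collections import Counter

BOS, EOS = "<s>", "</s>"  # sentence-boundary markers (illustrative convention)


def train_bigram(sentences):
    """Count bigrams and their left contexts ("histories") in a whitespace-tokenised corpus."""
    histories, bigrams = Counter(), Counter()
    for sentence in sentences:
        tokens = [BOS] + sentence.split() + [EOS]
        for prev, word in zip(tokens, tokens[1:]):
            histories[prev] += 1
            bigrams[(prev, word)] += 1
    return histories, bigrams


def bigram_prob(histories, bigrams, prev, word):
    """Maximum-likelihood estimate of P(word | prev); 0.0 for a history never seen."""
    if histories[prev] == 0:
        return 0.0
    return bigrams[(prev, word)] / histories[prev]


def sentence_prob(histories, bigrams, sentence):
    """Probability of a whole sentence as the product of its bigram probabilities."""
    tokens = [BOS] + sentence.split() + [EOS]
    p = 1.0
    for prev, word in zip(tokens, tokens[1:]):
        p *= bigram_prob(histories, bigrams, prev, word)
    return p


corpus = ["I am Sam", "Sam I am", "I do not like green eggs and ham"]
histories, bigrams = train_bigram(corpus)
print(bigram_prob(histories, bigrams, "I", "am"))     # ≈ 0.667 (= 2/3)
print(sentence_prob(histories, bigrams, "I am Sam"))  # ≈ 0.111 (= 1/9)
```

Any word pair never seen in training gets probability 0 here, which is why practical n-gram systems add smoothing, for example by interpolating with lower-order models.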

Next stop on our NLP timeline: the (mostly) futile attempts at machine translation during the Cold War era. Rule-based machine translation boiled down mostly to building dictionaries and grammar programs. Its major drawback was that absolutely everything had to be made explicit.

#nlp #historyofscience #ise2025 #lecture #machinetranslation #coldwar #AI #historyofAI @tabea @enorouzi @sourisnumerique @fiz_karlsruhe @fizise
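The "everything explicit" problem is easy to see in a toy rule-based translator. The sketch below is purely hypothetical (a five-word English-to-French lexicon and a single noun-phrase rule, not any historical Cold War system): every word needs a hand-written entry, nouns need their gender spelled out so the article agrees, and moving the adjective after the noun is yet another explicit rule. Anything outside the lexicon or the one grammar pattern simply fails.

```python
# Hypothetical hand-built lexicon: part of speech plus the French equivalent.
# Nouns also carry their grammatical gender, which the article must agree with.
LEXICON = {
    "the": ("DET", None),
    "red": ("ADJ", "rouge"),
    "fast": ("ADJ", "rapide"),
    "car": ("NOUN", ("voiture", "f")),
    "cat": ("NOUN", ("chat", "m")),
}
ARTICLES = {"m": "le", "f": "la"}  # definite article chosen by noun gender


def translate(phrase: str) -> str:
    """Translate an English noun phrase of the form DET ADJ* NOUN into French."""
    tokens = phrase.lower().split()
    missing = [t for t in tokens if t not in LEXICON]
    if missing:
        raise KeyError(f"no lexicon entry for {missing}")
    entries = [LEXICON[t] for t in tokens]
    tags = [pos for pos, _ in entries]
    # The only grammar rule this toy knows: DET ADJ* NOUN.
    if len(tags) < 2 or tags[0] != "DET" or tags[-1] != "NOUN" or any(t != "ADJ" for t in tags[1:-1]):
        raise ValueError(f"no grammar rule for pattern {tags}")
    noun, gender = entries[-1][1]
    adjectives = [french for _, french in entries[1:-1]]
    # Reordering rule: most French adjectives follow the noun; this toy applies it to all.
    return " ".join([ARTICLES[gender], noun, *adjectives])


print(translate("the red car"))   # la voiture rouge
print(translate("the fast cat"))  # le chat rapide
```

Adding a single new word such as "blue" already means a new lexicon entry, and handling adjectives that change form with gender would mean yet another rule.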

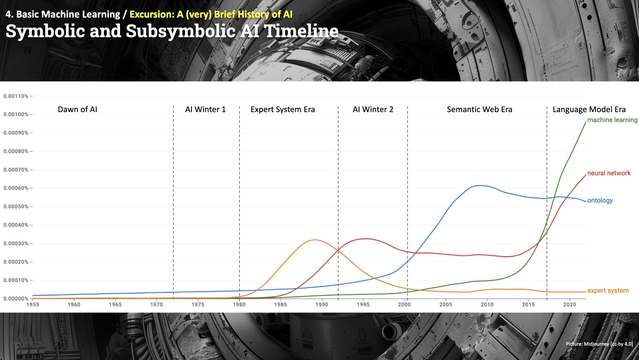

To sum up our very brief #HistoryOfAI, published here over several weeks as a series of toots, let's look at the popularity dynamics of symbolic vs. subsymbolic AI, set against the historical AI heydays and winters, using the Google Ngram Viewer.

https://books.google.com/ngrams/graph?content=ontology%2Cneural+network%2Cmachine+learning%2Cexpert+system&year_start=1955&year_end=2022&corpus=en&smoothing=3&case_insensitive=false

#ISE2024 #AI #ontologies #machinelearning #neuralnetworks #llms @fizise @sourisnumerique @enorouzi #semanticweb #knowledgegraphs
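The Ngram Viewer query above can be rebuilt programmatically, which makes it easy to try other term lists or year ranges. The parameter names below are simply the ones visible in the linked URL (not a documented API). `smooth` is our reading of the viewer's smoothing setting as a centred moving average over `smoothing` years on each side; how the viewer treats the first and last years is an assumption here (the window is just truncated).

```python
from urllib.parse import urlencode

BASE_URL = "https://books.google.com/ngrams/graph"


def ngram_viewer_url(terms, year_start, year_end, corpus="en", smoothing=3, case_insensitive=False):
    """Build a Google Books Ngram Viewer link from the query parameters seen in the URL above."""
    params = {
        "content": ",".join(terms),  # comma-separated list of phrases to compare
        "year_start": year_start,
        "year_end": year_end,
        "corpus": corpus,
        "smoothing": smoothing,
        "case_insensitive": str(case_insensitive).lower(),  # "true" / "false"
    }
    # urlencode escapes "," as %2C and spaces as "+", matching the original link.
    return f"{BASE_URL}?{urlencode(params)}"


def smooth(values, window):
    """Centred moving average: each point is averaged with up to `window` neighbours per side."""
    smoothed = []
    for i in range(len(values)):
        lo, hi = max(0, i - window), min(len(values), i + window + 1)
        chunk = values[lo:hi]  # truncated at the series edges (assumption)
        smoothed.append(sum(chunk) / len(chunk))
    return smoothed


print(ngram_viewer_url(["ontology", "neural network", "machine learning", "expert system"], 1955, 2022))
print(smooth([0, 0, 3, 0, 0], window=1))  # [0.0, 1.0, 1.0, 1.0, 0.0]
```

Smoothing spreads a one-year spike over neighbouring years, so with smoothing=3 short-lived peaks in the plot appear flatter and wider than in the raw counts.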

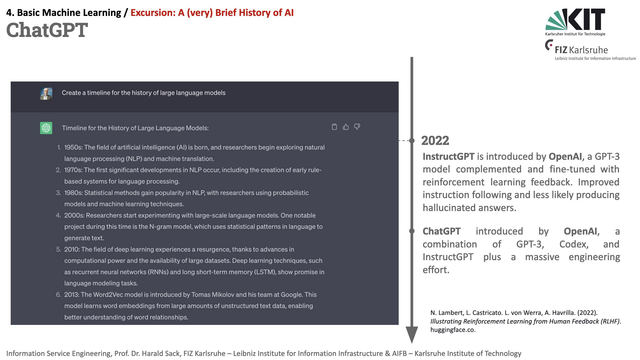

In 2022, with the advent of ChatGPT, large language models and AI in general gained unprecedented popularity. ChatGPT combined InstructGPT (a GPT-3 model fine-tuned with reinforcement learning from human feedback, RLHF), Codex's text-to-code capabilities, and a massive engineering effort.

N. Lambert, et al. (2022). Illustrating Reinforcement Learning from Human Feedback (RLHF). https://huggingface.co/blog/rlhf

#HistoryOfAI #AI #ISE2024 @fizise @sourisnumerique @enorouzi #llm #gpt #llms
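One ingredient of RLHF covered in the Lambert et al. write-up is a reward model trained on human comparisons between two responses. A common way to train it (InstructGPT uses this form) is a pairwise loss that pushes the score of the preferred response above the rejected one: loss = -log σ(r_chosen - r_rejected). The sketch below only computes that loss for scalar scores; the scores are made-up numbers rather than outputs of a real model, and the reinforcement learning stage itself (e.g. PPO) is not shown.

```python
import math


def pairwise_reward_loss(r_chosen: float, r_rejected: float) -> float:
    """-log(sigmoid(r_chosen - r_rejected)), computed in a numerically stable way."""
    margin = r_chosen - r_rejected
    # -log(sigmoid(m)) == log(1 + exp(-m)); split by sign so exp() never overflows.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


def mean_loss(pairs):
    """Average the pairwise loss over (chosen_score, rejected_score) pairs."""
    return sum(pairwise_reward_loss(c, r) for c, r in pairs) / len(pairs)


# Hypothetical reward-model scores for (preferred, rejected) answers.
print(pairwise_reward_loss(0.0, 0.0))  # ≈ 0.693 (log 2): the two answers are not told apart
print(pairwise_reward_loss(2.0, 0.0))  # ≈ 0.127: preferred answer already scored higher
print(pairwise_reward_loss(0.0, 2.0))  # ≈ 2.127: ranking is wrong, so the loss is large
```

Only the score difference matters, so the reward model learns a relative ranking of answers, which the later RL step then tries to maximise.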

Higher, faster, farther... in 2021, generative AI gains momentum with the advent of DALL-E, a GPT-3-based zero-shot text-to-image model, and other major milestones such as GitHub Copilot, OpenAI Codex, WebGPT, and Google LaMDA.

Codex: Chen, M., et al. (2021). Evaluating Large Language Models Trained on Code. https://arxiv.org/abs/2107.03374

DALL-E: Ramesh, A., et al. (2021). Zero-Shot Text-to-Image Generation. https://arxiv.org/abs/2102.12092

#HistoryOfAI #AI #ISE2024 @fizise @sourisnumerique @enorouzi #llm #gpt

Evaluating Large Language Models Trained on Code

We introduce Codex, a GPT language model fine-tuned on publicly available code from GitHub, and study its Python code-writing capabilities. A distinct production version of Codex powers GitHub Copilot. On HumanEval, a new evaluation set we release to measure functional correctness for synthesizing programs from docstrings, our model solves 28.8% of the problems, while GPT-3 solves 0% and GPT-J solves 11.4%. Furthermore, we find that repeated sampling from the model is a surprisingly effective strategy for producing working solutions to difficult prompts. Using this method, we solve 70.2% of our problems with 100 samples per problem. Careful investigation of our model reveals its limitations, including difficulty with docstrings describing long chains of operations and with binding operations to variables. Finally, we discuss the potential broader impacts of deploying powerful code generation technologies, covering safety, security, and economics.
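The repeated-sampling results above are reported in the Codex paper with the pass@k metric: a problem counts as solved if at least one of k sampled programs passes its unit tests. The paper estimates pass@k without bias by drawing n ≥ k samples, counting the c that pass, and computing 1 - C(n-c, k) / C(n, k). Below is a minimal sketch of that estimator, evaluated as a product to avoid huge binomial coefficients (the paper gives a NumPy version of the same idea); the per-problem sample counts in the example are made up.

```python
def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate for one problem: n samples drawn, c of them correct."""
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("need 0 <= c <= n and 1 <= k <= n")
    if n - c < k:
        return 1.0  # every size-k subset contains at least one correct sample
    # 1 - C(n-c, k) / C(n, k), rewritten as a product to avoid huge binomials:
    # C(n-c, k) / C(n, k) = prod_{i=n-c+1}^{n} (1 - k / i)
    prob_all_fail = 1.0
    for i in range(n - c + 1, n + 1):
        prob_all_fail *= 1.0 - k / i
    return 1.0 - prob_all_fail


def benchmark_pass_at_k(results, k):
    """Average pass@k over problems, given (n, c) for each problem."""
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)


# Hypothetical per-problem results: (samples drawn, samples that passed the tests).
results = [(100, 0), (100, 3), (100, 55)]
print(benchmark_pass_at_k(results, k=1))    # ≈ 0.193
print(benchmark_pass_at_k(results, k=100))  # ≈ 0.667
```

The gap between k = 1 and k = 100 in this toy example mirrors the abstract's point: a problem with only a few correct samples out of many is rarely solved on the first try but almost always solved when many attempts are allowed.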