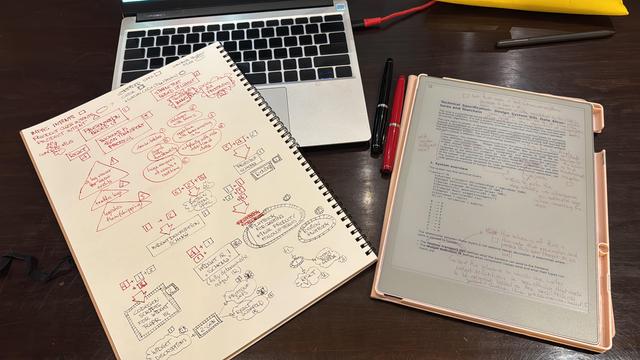

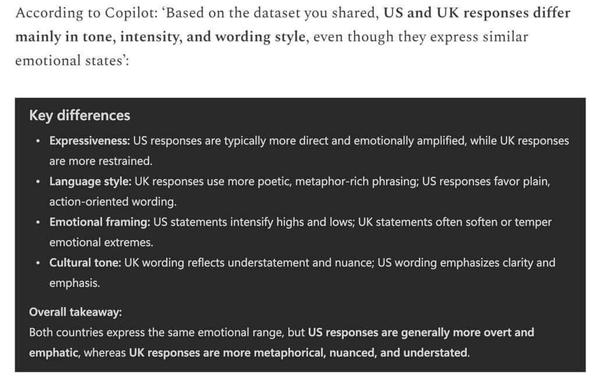

No money for a second reMarkable, so I gotta make do with paper again. Fun and also annoying, to be completely honest. But I also realize how much I’ve depended on graphic design principles in my software work: colors, shapes, layout, texture, and size have always had very precise technical meaning for me. Now they actually work as a formal encoding without me really having to type very much, which is pretty awesome.

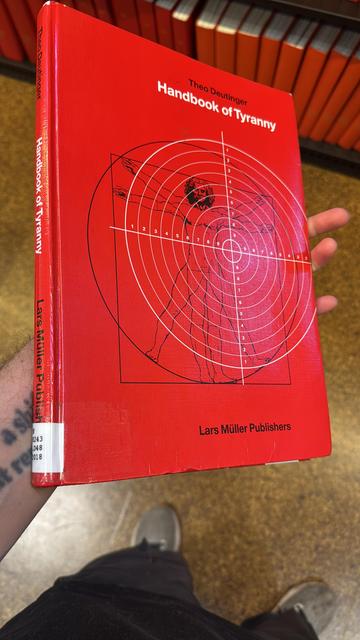

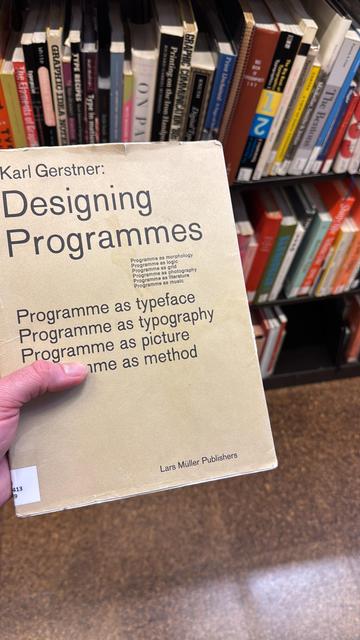

So might as well check out some new “software engineering manuals”.

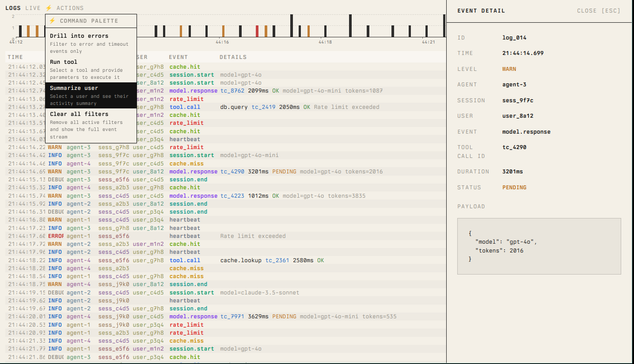

More concretely, I’m currently designing a design system compiler toolkit which takes problem-domain “intent” and “compiles” it (part deterministic compiler work, part fuzzy LLM work) into varying intermediate representations. I can’t fully put it into words yet, and it looks insane, but this is to build CRUD websites for brick-and-mortar retail (amongst others). Weird times we live in.
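
To make the "part deterministic, part fuzzy" split a bit more tangible, here is a toy sketch of what one such pipeline could look like. It is not the actual toolkit: `Intent`, `CrudIR`, `lower_intent`, `add_labels`, and `fake_llm` are all hypothetical names made up for illustration, and the LLM step is replaced by a plain function so the example runs on its own.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Intent:
    """Hypothetical problem-domain intent, e.g. "a shop sells products"."""
    entity: str
    fields: list[str]


@dataclass
class CrudIR:
    """Hypothetical intermediate representation for one CRUD resource."""
    table: str
    columns: list[str]
    routes: list[str]
    labels: dict[str, str] = field(default_factory=dict)


def lower_intent(intent: Intent) -> CrudIR:
    """Deterministic pass: the same intent always yields the same IR."""
    table = intent.entity.lower() + "s"  # naive pluralization, assumption only
    routes = [f"{verb} /{table}" for verb in ("GET", "POST", "PUT", "DELETE")]
    return CrudIR(table=table, columns=["id", *intent.fields], routes=routes)


def add_labels(ir: CrudIR, suggest: Callable[[str], str]) -> CrudIR:
    """Fuzzy pass: `suggest` stands in for an LLM call that names things for humans."""
    ir.labels = {col: suggest(col) for col in ir.columns}
    return ir


def fake_llm(column: str) -> str:
    # Placeholder for a model call; it only prettifies the column name.
    return column.replace("_", " ").title()


ir = add_labels(lower_intent(Intent("Product", ["name", "unit_price"])), fake_llm)
print(ir)
```

Passing the fuzzy step in as a plain callable keeps the deterministic pass testable on its own and lets the model be swapped out or stubbed.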