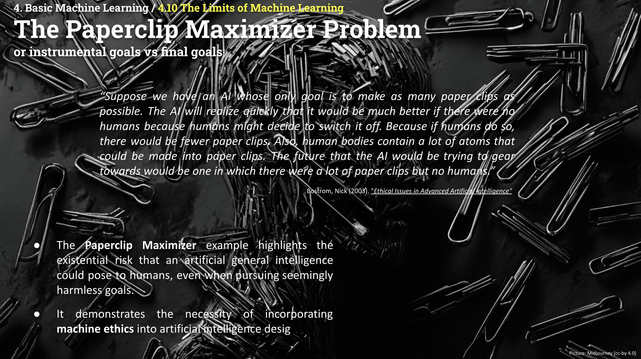

In the very last #ISE2024 lecture, we discussed the limits of machine learning and #AI. The "Paperclip Maximizer Problem" is one of the dystopian scenarios that might occur if final goals and instrumental goals diverge...

#dystopian #generativeAI #llms @fizise @fiz_karlsruhe #aiart