Anthropic (@AnthropicAI)

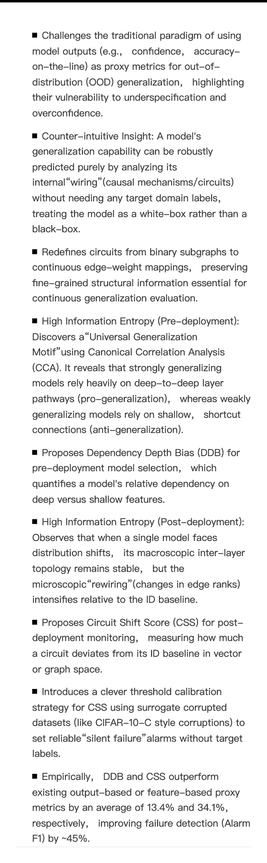

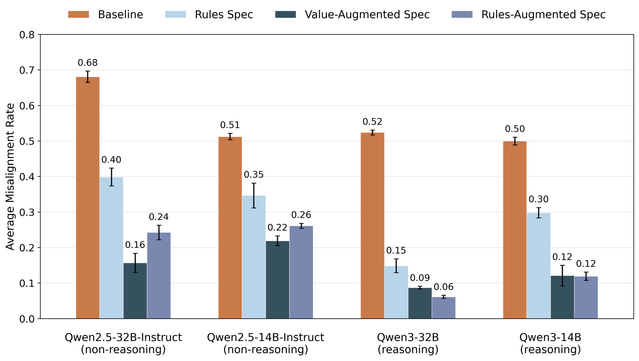

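The post explains that MSM makes it possible to empirically compare which model specs or constitutions lead to better generalization from alignment training. It stresses that, rather than only specifying rules, explaining the values and rationale behind those rules, or adding more detailed subrules, can be more effective.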

Anthropic (@AnthropicAI) on X

Using MSM, we can also empirically study which model specs or constitutions yield the best generalization from alignment training. Specifying rules works to some extent, but explaining the values underlying those rules (or adding more detailed subrules) is even better.