I've been working in #Pitxu these days.

- I left behind the Infinite-loop approach to a Callback-based one triggered by VAD, reducing about a 40% of the load and imilar battery life improvement.

- Added a Long Term Memory system, so that I can reduce the amount of context in use per session relaying on an external support, making it faster and cheaper.

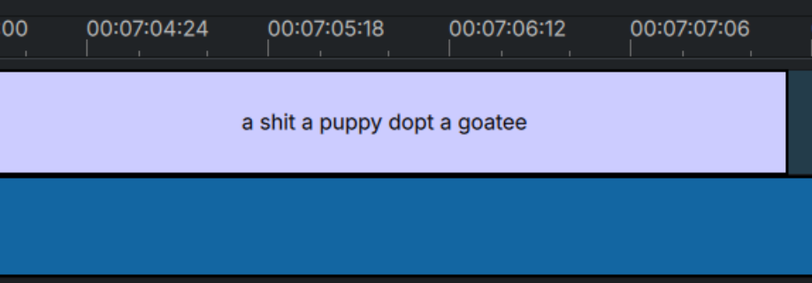

- I've switched #Vosk for #Whisper in the Speech-To-Text step, that brings an incredible improvement on the transcription, which at its turn improves the overall user experience.

- I switched from Gemini 2.5 Flash to Gemini 3.1 Flash-Lite, which improves quality but also penalizes reaction speed.

- I delegated some background work into a new Support process that takes it out from the main (user experience) thread.

- I corrected numerous visualization bugs that improves the user-Pitxu interaction.

All in all, I just had a long conversation with Pitxu, and has been by far the best demo I ever had in year and a half.

This is a self-tap-on-the-shoulder post, thank you for your attention 🙂