Final day at #EVS2026! 🚀

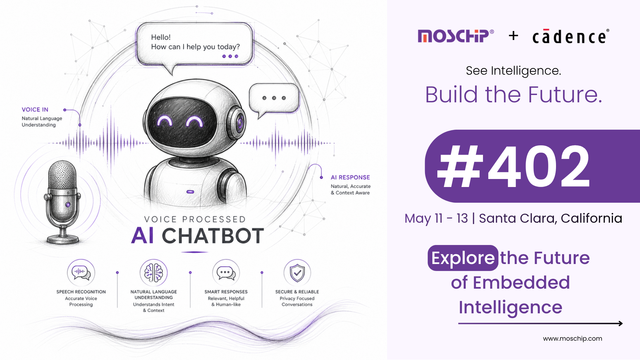

Don't miss @MosChip® at @cadence Booth #402. Experience our secure, voice-processed AI chatbot demo featuring an SLM and real-time speech recognition.

See you in Santa Clara! 📍

#EmbeddedAI #EdgeAI #AI #VoiceAI #EmbeddedVisionSummit #EmbeddedSystems