Effective hyperparameter tuning involves systematic exploration and optimization to find configurations that yield the best results for a given dataset and task[..]

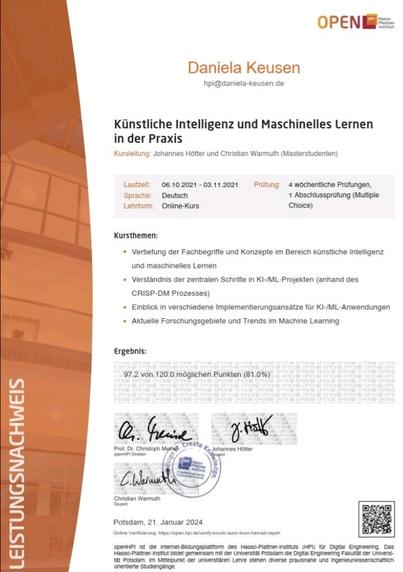
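
To make "systematic exploration" concrete, here is a minimal random-search sketch. It is only an illustration under stated assumptions: `validation_loss` is a hypothetical stand-in for training and scoring a real model, and the two hyperparameters (`learning_rate`, `num_layers`) and their ranges are made up for the example.

```python
import random


def validation_loss(learning_rate, num_layers):
    """Hypothetical objective: stands in for 'train a model, score it on validation data'."""
    return (learning_rate - 0.1) ** 2 + 0.01 * (num_layers - 3) ** 2


def random_search(objective, num_trials, seed=0):
    """Sample configurations at random and keep the one with the lowest loss."""
    rng = random.Random(seed)
    best_config, best_loss = None, float("inf")
    for _ in range(num_trials):
        config = {
            # Log-uniform sample in [0.001, 1]: learning rates span orders of magnitude.
            "learning_rate": 10 ** rng.uniform(-3, 0),
            # Uniform integer sample in [1, 6].
            "num_layers": rng.randint(1, 6),
        }
        loss = objective(**config)
        if loss < best_loss:
            best_config, best_loss = config, loss
    return best_config, best_loss


if __name__ == "__main__":
    config, loss = random_search(validation_loss, num_trials=50)
    print(config, loss)
```

Random search is only one of several strategies; it is used here because it fits in a few lines, not because the post recommends it.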

#ArtificialIntelligence and #MachineLearning in practice

#MOOC as part of the #KIwinterschool on #openHPI @Hasso_Plattner_Institute

#AI / #ML projects following the #CRISP-#DM process

#MachineLearning

#Python #ProgrammingLibraries #DataVisualization

#RecommenderSystems #DataPreprocessing

#DataFormats #DataRepresentations #Hyperparameters #SentimentAnalysis #DataLabeling #LargeScaleLanguageModels

#ComputerVision #DataAcquisition #Explainability #Bias

🧐 Learning with artificial intelligence 💻: As a computer scientist, Theresa Eimer studies how to learn in an artificially intelligent way. Especially important here: hyperparameters. But how does machine learning actually work? 🎓

Watch the talk here: https://youtu.be/UQ4y5ovvp2Y

#research #science #scienceslam #learning #study #AI #computerscience #machinelearning #hyperparameters #artificialintelligence #data #datakraken #blackbox

How to do artificially intelligent learning right (Theresa Eimer - Science Slam)

Sample Average Approximation for Black-Box Variational Inference

No More Pesky Hyperparameters: Offline Hyperparameter Tuning for RL

Han Wang, Archit Sakhadeo, Adam M White et al.

2/10) #SSL models show great promise and can learn #representations from large-scale unlabelled data. But, identifying the best model across diff #hyperparameter configs requires measuring downstream task performance, which requires #labels and adds to the #compute time+resources. 😕

I wonder if anyone has written about #ASHA #hyperparameter optimization vs restart strategies.

I've been playing around with ASHA in #ray on my CMA-ES optimization task. Turns out my hyperparameters (population_size, sigma_0) are almost uncorrelated with performance, but the final performance varies a lot per run.

So what ASHA actually does for me is just picking the lucky runs. Which helped a lot. Maybe I should try this BIPOP restarting strategy of CMA-ES instead.
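
A toy simulation of the "picking the lucky runs" effect might look like the sketch below. It makes several assumptions: it uses plain synchronous successive halving rather than ASHA's asynchronous variant, it does not touch Ray or CMA-ES at all, and `make_run` is a hypothetical stand-in in which the final loss depends only on a per-run random draw, never on hyperparameters.

```python
import random
import statistics


def make_run(rng):
    """Simulate one run whose final loss is pure luck (no hyperparameter effect)."""
    final_loss = rng.uniform(0.0, 1.0)

    def loss_at(budget):
        # Simplified, noise-free learning curve that decays towards final_loss.
        return final_loss + 1.0 / budget

    return final_loss, loss_at


def successive_halving(runs, min_budget=1, eta=2):
    """Keep the best 1/eta of the runs at each rung, multiplying the budget by eta."""
    survivors = list(range(len(runs)))
    budget = min_budget
    while len(survivors) > 1:
        # Rank the surviving runs by their loss at the current budget.
        survivors.sort(key=lambda i: runs[i][1](budget))
        survivors = survivors[: max(1, len(survivors) // eta)]
        budget *= eta
    return survivors[0]


if __name__ == "__main__":
    rng = random.Random(0)
    runs = [make_run(rng) for _ in range(16)]
    winner = successive_halving(runs)
    final_losses = [final for final, _ in runs]
    print("mean final loss over all runs:", statistics.mean(final_losses))
    print("final loss of the selected run:", final_losses[winner])
```

Because the toy curves never cross, early stopping here always ends up with the luckiest run, which is the behaviour described above: the selection helps even though no hyperparameter matters. Real learning curves are noisy and can cross, so the effect would be weaker in practice.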