https://daringfireball.net/2026/05/ai_is_technology_not_a_product #techwisdom #JohnGruber #insights #HackerNews #ngated

https://arxiv.org/abs/2601.19897 #groundbreaking #revelation #continuous #learning #buzzwords #innovation #HackerNews #ngated

Self-Distillation Enables Continual Learning

Continual learning, enabling models to acquire new skills and knowledge without degrading existing capabilities, remains a fundamental challenge for foundation models. While on-policy reinforcement learning can reduce forgetting, it requires explicit reward functions that are often unavailable. Learning from expert demonstrations, the primary alternative, is dominated by supervised fine-tuning (SFT), which is inherently off-policy. We introduce Self-Distillation Fine-Tuning (SDFT), a simple method that enables on-policy learning directly from demonstrations. SDFT leverages in-context learning by using a demonstration-conditioned model as its own teacher, generating on-policy training signals that preserve prior capabilities while acquiring new skills. Across skill learning and knowledge acquisition tasks, SDFT consistently outperforms SFT, achieving higher new-task accuracy while substantially reducing catastrophic forgetting. In sequential learning experiments, SDFT enables a single model to accumulate multiple skills over time without performance regression, establishing on-policy distillation as a practical path to continual learning from demonstrations.
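The "demonstration-conditioned model as its own teacher" idea can be sketched as a per-token distillation loss. This is only a toy illustration: the abstract does not state the loss, so the reverse KL divergence used here is an assumption, and `reverse_kl` and `on_policy_distill_loss` are hypothetical names. In the setting described, the student distributions would come from the model without the demonstration, evaluated on tokens the student sampled itself (hence on-policy), and the teacher distributions from the same model with the demonstration placed in context.

```python
import math

def reverse_kl(p_student, p_teacher, eps=1e-12):
    """KL(student || teacher) for one token position.

    Both arguments are probability lists over the same vocabulary.
    The choice of reverse KL is an assumption, not taken from the abstract.
    """
    return sum(
        ps * (math.log(ps + eps) - math.log(pt + eps))
        for ps, pt in zip(p_student, p_teacher)
        if ps > 0
    )

def on_policy_distill_loss(student_dists, teacher_dists):
    """Average the per-token divergence over a student-sampled sequence.

    student_dists[t]: next-token distribution WITHOUT the demonstration.
    teacher_dists[t]: same model, same prefix, WITH the demonstration in context.
    """
    per_token = [reverse_kl(s, t) for s, t in zip(student_dists, teacher_dists)]
    return sum(per_token) / len(per_token)

# Toy two-token vocabulary, two positions.
student = [[0.5, 0.5], [0.7, 0.3]]
teacher = [[0.9, 0.1], [0.7, 0.3]]
loss = on_policy_distill_loss(student, teacher)
```

The loss is zero wherever the student already matches the demonstration-conditioned teacher, so only positions where the demonstration changes the model's prediction contribute a training signal.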

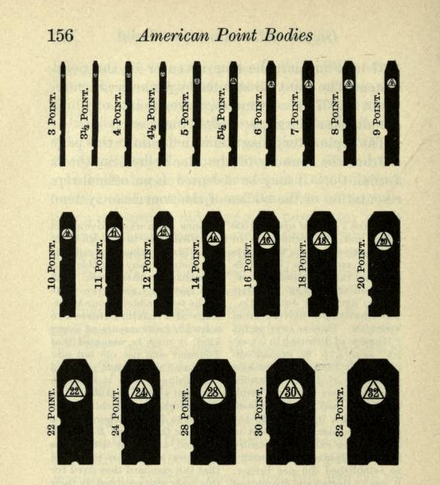

https://buttondown.com/hillelwayne/archive/points-are-a-weird-and-inconsistent-unit-of/ #Revelation #Consistency #Matters #MetricSystem #TechHumor #HackerNews #ngated

https://arxiv.org/abs/2605.12357 #Groundbreaking #Research #HackerNews #ngated

$δ$-mem: Efficient Online Memory for Large Language Models

Large language models increasingly need to accumulate and reuse historical information in long-term assistants and agent systems. Simply expanding the context window is costly and often fails to ensure effective context utilization. We propose $δ$-mem, a lightweight memory mechanism that augments a frozen full-attention backbone with a compact online state of associative memory. $δ$-mem compresses past information into a fixed-size state matrix updated by delta-rule learning, and uses its readout to generate low-rank corrections to the backbone's attention computation during generation. With only an $8\times8$ online memory state, $δ$-mem improves the average score to $1.10\times$ that of the frozen backbone and $1.15\times$ that of the strongest non-$δ$-mem memory baseline. It achieves larger gains on memory-heavy benchmarks, reaching $1.31\times$ on MemoryAgentBench and $1.20\times$ on LoCoMo, while largely preserving general capabilities. These results show that effective memory can be realized through a compact online state directly coupled with attention computation, without full fine-tuning, backbone replacement, or explicit context extension.
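A minimal sketch of a delta-rule update on a fixed-size associative memory may help make the "compact online state" concrete. It uses the generic textbook delta rule, S ← S + β (v − S k) kᵀ, and is not the paper's implementation: the abstract does not give the exact update, any gating, or how the readout becomes a low-rank attention correction. The function names, the learning rate `beta`, and the choice of 8-dimensional keys and values (to match the 8×8 state) are assumptions made for illustration.

```python
def delta_update(S, k, v, beta=1.0):
    """One delta-rule step: S <- S + beta * (v - S k) k^T.

    S is a d_v x d_k matrix (list of rows), k a key vector, v a value vector.
    The memory is corrected only by the error between the stored and new value.
    """
    pred = [sum(row[j] * k[j] for j in range(len(k))) for row in S]  # S k
    err = [v[i] - pred[i] for i in range(len(v))]                    # v - S k
    return [
        [S[i][j] + beta * err[i] * k[j] for j in range(len(k))]
        for i in range(len(S))
    ]

def read(S, q):
    """Read out the value associated with query q: S q."""
    return [sum(row[j] * q[j] for j in range(len(q))) for row in S]

D = 8  # assumed key/value size, chosen so the state is 8 x 8
S = [[0.0] * D for _ in range(D)]

k1 = [1.0] + [0.0] * (D - 1)        # unit-norm key
k2 = [0.0, 1.0] + [0.0] * (D - 2)   # orthogonal unit-norm key
v1 = [float(i) for i in range(1, D + 1)]
v2 = [float(-i) for i in range(1, D + 1)]
v3 = [10.0] * D

S = delta_update(S, k1, v1)   # store k1 -> v1
S = delta_update(S, k2, v2)   # store k2 -> v2 without disturbing k1
first_read = read(S, k1)
S = delta_update(S, k1, v3)   # overwrite k1 -> v3 in place
second_read = read(S, k1)
```

With unit-norm keys and `beta=1.0`, a write replaces the old value for that key rather than adding to it, and the state stays 8×8 no matter how many writes occur. That fixed size is the property the abstract contrasts with extending the context window.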

https://sciencedemonstrations.fas.harvard.edu/presentations/microscale-thermite-reaction #MicroscaleThermite #Reaction #Secrets #Research #HackerNews #ngated

https://greem.co.uk/otherbits/jelly.html #Experiment #Fruit #Revolution #HackerNews #ngated

https://www.arup.com/en-us/news/first-fehmarnbelt-tunnel-element-lowered/ #waterachievement #concreteinnovation #diggingdeeper #humanityprogress #HackerNews #ngated

https://blog.google/innovation-and-ai/technology/developers-tools/expanded-gemini-api-file-search-multimodal-rag/ #GeminiAPI #FileSearch #multimodal #technews #HackerNews #ngated

https://arxiv.org/abs/2604.15597 #Corruption #Groundbreaking #Discovery #TechNews #DocumentManagement #HackerNews #ngated

LLMs Corrupt Your Documents When You Delegate

Large Language Models (LLMs) are poised to disrupt knowledge work, with the emergence of delegated work as a new interaction paradigm (e.g., vibe coding). Delegation requires trust - the expectation that the LLM will faithfully execute the task without introducing errors into documents. We introduce DELEGATE-52 to study the readiness of AI systems in delegated workflows. DELEGATE-52 simulates long delegated workflows that require in-depth document editing across 52 professional domains, such as coding, crystallography, and music notation. Our large-scale experiment with 19 LLMs reveals that current models degrade documents during delegation: even frontier models (Gemini 3.1 Pro, Claude 4.6 Opus, GPT 5.4) corrupt an average of 25% of document content by the end of long workflows, with other models failing more severely. Additional experiments reveal that agentic tool use does not improve performance on DELEGATE-52, and that degradation severity is exacerbated by document size, length of interaction, or presence of distractor files. Our analysis shows that current LLMs are unreliable delegates: they introduce sparse but severe errors that silently corrupt documents, compounding over long interaction.

https://xeiaso.net/blog/2026/abstain-from-install/ #groundbreaking #software #installation #prophets #hacker #news #HackerNews #ngated