Academic Integrity in the Age ...

I gave a presentation on a similar topic a few months ago, "Strategies for Reducing Student Misuse of AI": https://docs.google.com/presentation/d/1htjhjS7-cLx8BfdL2aZZ40B8opUz1ckZedcxmeJYUco/edit?usp=sharing To me, the key underlying factor is student motivation (slides 18-22).

#AIEd #AcademicIntegrity #Teaching

SafeTutors: Benchmarking Pedagogical Safety in AI Tutoring Systems

Large language models are rapidly being deployed as AI tutors, yet current evaluation paradigms assess problem-solving accuracy and generic safety in isolation, failing to capture whether a model is simultaneously pedagogically effective and safe across student-tutor interaction. We argue that tutoring safety is fundamentally different from conventional LLM safety: the primary risk is not toxic content but the quiet erosion of learning through answer over-disclosure, misconception reinforcement, and the abdication of scaffolding. To systematically study this failure mode, we introduce SafeTutors, a benchmark that jointly evaluates safety and pedagogy across mathematics, physics, and chemistry. SafeTutors is organized around a theoretically grounded risk taxonomy comprising 11 harm dimensions and 48 sub-risks drawn from learning-science literature. We uncover that all models show broad harm; scale doesn't reliably help; and multi-turn dialogue worsens behavior, with pedagogical failures rising from 17.7% to 77.8%. Harms also vary by subject, so mitigations must be discipline-aware, and single-turn "safe/helpful" results can mask systematic tutor failure over extended interaction.

https://arxiv.org/abs/2603.17373

"the primary risk is not toxic content but the quiet erosion of learning through answer over-disclosure, misconception reinforcement, and the abdication of scaffolding"

"We uncover that all models show broad harm; scale doesn't reliably help; and multi-turn dialogue worsens behavior, with pedagogical failures rising from 17.7% to 77.8%."

#AIEd #EdTech

https://arxiv.org/abs/2604.06385

A fine-tuned open #LLM beats even Gemini on a #pedagogy benchmark. Unfortunately it doesn't appear to be released yet.

#AIEd

Application-Driven Pedagogical Knowledge Optimization of Open-Source LLMs via Reinforcement Learning and Supervised Fine-Tuning

We present an innovative multi-stage optimization strategy combining reinforcement learning (RL) and supervised fine-tuning (SFT) to enhance the pedagogical knowledge of large language models (LLMs), as illustrated by EduQwen 32B-RL1, EduQwen 32B-SFT, and an optional third-stage model EduQwen 32B-SFT-RL2: (1) RL optimization that implements progressive difficulty training, focuses on challenging examples, and employs extended reasoning rollouts; (2) a subsequent SFT phase that leverages the RL-trained model to synthesize high-quality training data with difficulty-weighted sampling; and (3) an optional second round of RL optimization. EduQwen 32B-RL1, EduQwen 32B-SFT, and EduQwen 32B-SFT-RL2 are an application-driven family of open-source pedagogical LLMs built on a dense Qwen3-32B backbone. These models remarkably achieve high enough accuracy on the Cross-Domain Pedagogical Knowledge (CDPK) Benchmark to establish new state-of-the-art (SOTA) results across the interactive Pedagogy Benchmark Leaderboard and surpass significantly larger proprietary systems such as the previous benchmark leader Gemini-3 Pro. These dense 32-billion-parameter models demonstrate that domain-specialized optimization can transform mid-sized open-source LLMs into true pedagogical domain experts that outperform much larger general-purpose systems, while preserving the transparency, customizability, and cost-efficiency required for responsible educational AI deployment.
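The abstract mentions "difficulty-weighted sampling" for the SFT data without giving details. As a rough illustration only, here is a minimal Python sketch of what such sampling could look like. The function name, the `temperature` parameter, the example pool, and the weighting formula are all assumptions made for illustration, not the authors' method.

```python
import random
from collections import Counter


def difficulty_weighted_sample(examples, k, temperature=1.0, seed=None):
    """Draw k example ids with replacement, favoring harder examples.

    examples: list of (example_id, difficulty) pairs, difficulty in (0, 1].
    The weight difficulty ** (1 / temperature) is an illustrative assumption,
    not the weighting used in the paper.
    """
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    rng = random.Random(seed)
    ids = [example_id for example_id, _ in examples]
    weights = [difficulty ** (1.0 / temperature) for _, difficulty in examples]
    return rng.choices(ids, weights=weights, k=k)


# Hypothetical pool: difficulty could be 1 minus the RL model's pass rate.
pool = [("easy", 0.1), ("medium", 0.5), ("hard", 0.9)]
counts = Counter(difficulty_weighted_sample(pool, k=3000, seed=0))
print(counts.most_common())
```

In this sketch a lower `temperature` concentrates the draws on the hardest examples, while a higher one flattens the distribution toward uniform sampling.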

ISD-Agent-Bench: A Comprehensive Benchmark for Evaluating LLM-based Instructional Design Agents

Large Language Model (LLM) agents have shown promising potential in automating Instructional Systems Design (ISD), a systematic approach to developing educational programs. However, evaluating these agents remains challenging due to the lack of standardized benchmarks and the risk of LLM-as-judge bias. We present ISD-Agent-Bench, a comprehensive benchmark comprising 25,795 scenarios generated via a Context Matrix framework that combines 51 contextual variables across 5 categories with 33 ISD sub-steps derived from the ADDIE model. To ensure evaluation reliability, we employ a multi-judge protocol using diverse LLMs from different providers, achieving high inter-judge reliability. We compare existing ISD agents with novel agents grounded in classical ISD theories such as ADDIE, Dick & Carey, and Rapid Prototyping ISD. Experiments on 1,017 test scenarios demonstrate that integrating classical ISD frameworks with modern ReAct-style reasoning achieves the highest performance, outperforming both pure theory-based agents and technique-only approaches. Further analysis reveals that theoretical quality strongly correlates with benchmark performance, with theory-based agents showing significant advantages in problem-centered design and objective-assessment alignment. Our work provides a foundation for systematic LLM-based ISD research.
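The multi-judge idea in the abstract can be illustrated with a small Python sketch: average the scores from several judge models and flag items where the judges disagree widely. The judge names, the score scale, and the `max_spread` threshold are hypothetical, and this is not the benchmark's actual protocol or reliability metric.

```python
from statistics import mean


def aggregate_judges(scores_by_judge, max_spread=1.0):
    """Combine scores from several judges for one agent output.

    scores_by_judge: dict mapping a judge name to its numeric score,
    all on the same scale. The spread threshold is an illustrative
    assumption, not a value taken from the paper.
    """
    if not scores_by_judge:
        raise ValueError("need at least one judge score")
    scores = list(scores_by_judge.values())
    spread = max(scores) - min(scores)
    return {
        "mean": mean(scores),
        "spread": spread,
        # Wide disagreement suggests the item deserves a human look.
        "needs_review": spread > max_spread,
    }


# Hypothetical scores from three judge models from different providers.
result = aggregate_judges({"judge_a": 4, "judge_b": 5, "judge_c": 3})
print(result)
```

Averaging over judges from different providers is one simple way to dilute any single model's bias; a real protocol would likely also report a formal agreement statistic.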

https://arxiv.org/abs/2602.10620

Code & data: https://github.com/codingchild2424/isd-agent-benchmark

"benchmark comprising 25,795 scenarios that combines 51 contextual variables across 5 categories with 33 ISD sub-steps derived from the ADDIE model."

w/same author: Pedagogy-R1: Pedagogical Large Reasoning Model and Well-balanced Educational Benchmark https://dl.acm.org/doi/10.1145/3746252.3761133

#AIEd #LearningDesign #AIevaluation #EdTech

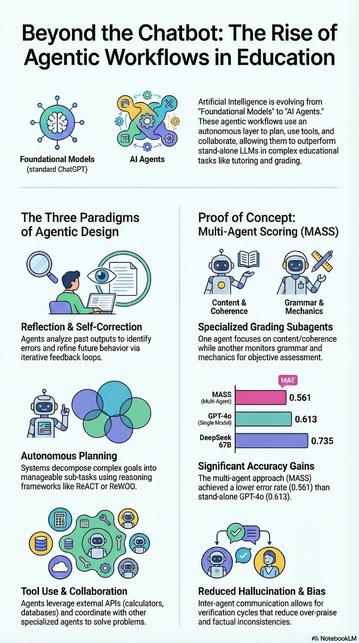

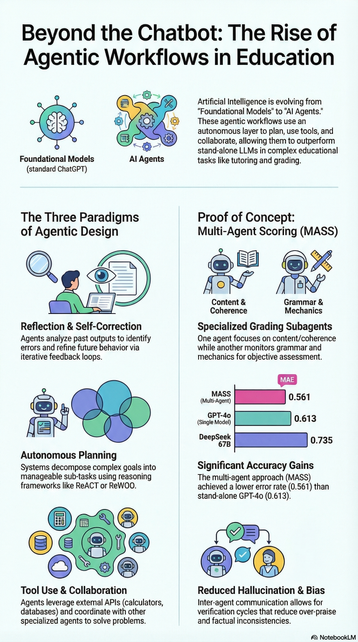

* Evolution of AI in Education: Agentic Workflows https://arxiv.org/abs/2504.20082v2

* DeepTutor https://github.com/HKUDS/DeepTutor

* OpenMAIC multi-agent classroom https://github.com/THU-MAIC/OpenMAIC

* LiaScript teaching agent https://github.com/LiaScript/teaching-agent/tree/dev

* Claude education skills

https://github.com/GarethManning/claude-education-skills

https://github.com/narosemena/master-instructional-design

* Claw-ED https://github.com/SirhanMacx/Claw-ED

* CODE-GEN mc question generator https://arxiv.org/abs/2604.03926

#AIEd #AIeducation #EdTech

Sample applications in development: https://github.com/inventivepotter/dotmd & https://github.com/tejzpr/Smriti-MCP

See also Grafeo: https://github.com/GrafeoDB/grafeo

#AIEd #AIEngineering #KnowledgeGraph #GraphDB #graphdatabase