Intel killed Arc Celestial gaming GPUs, and said nothing. 🚨

The B580 has no successor. Druid is "up in the air."

Gamers got ghosted. AI got everything.

Full story 👇

https://geekrealmhub.com/intel-arc-celestial-gaming-gpu-cancelled/

Akshay (@akshay_pachaar)

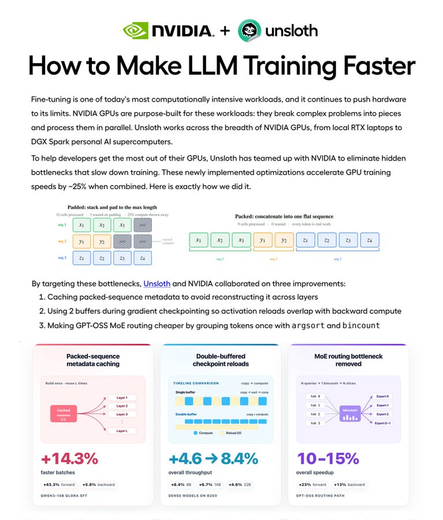

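NVIDIA and Unsloth have published a guide to making fine-tuning 25% faster. It presents systems-level techniques, including packed-sequence metadata caching and double-buffered checkpointing, as the key optimizations for faster GPU training. It is a practical resource for improving AI model training efficiency.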

NVIDIA + Unsloth just dropped a guide on making fine-tuning 25% faster. this is hands-down the cleanest systems-level writeup i've read. you'll learn how 3 optimizations help your gpu train models faster: 1. packed-sequence metadata caching 2. double-buffered checkpoint
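To give a rough idea of what "packed-sequence metadata caching" can mean, here is a minimal sketch. It is not taken from the guide. It assumes the metadata is the set of cumulative sequence offsets (often called `cu_seqlens` in variable-length attention kernels) plus the longest sequence length, and that caching means reusing them when the same length pattern shows up again. The helper name `packed_metadata` is hypothetical.

```python
from functools import lru_cache
from itertools import accumulate


@lru_cache(maxsize=1024)
def packed_metadata(seq_lens: tuple[int, ...]) -> tuple[tuple[int, ...], int]:
    """Return (cumulative offsets, max length) for one packed batch.

    Hypothetical helper: the name and return layout are assumptions,
    not the API described in the NVIDIA + Unsloth guide.
    """
    if not seq_lens or any(n <= 0 for n in seq_lens):
        raise ValueError("need at least one positive sequence length")
    # Offsets mark where each sequence starts and ends in the packed buffer.
    cu_seqlens = tuple(accumulate(seq_lens, initial=0))
    return cu_seqlens, max(seq_lens)


# Three sequences of 3, 5, and 2 tokens packed into one row of 10 tokens.
offsets, longest = packed_metadata((3, 5, 2))
# A second call with the same lengths is served from the cache.
offsets_again, _ = packed_metadata((3, 5, 2))
```

The lengths are passed as a tuple so they are hashable, which is what lets `lru_cache` recognize a repeated pattern and skip the recomputation.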

ACCU on Sea 2026 SESSION ANNOUNCEMENT: Bridging CPUs and GPUs with std::execution - Using Senders / Receivers as a Frame Graph by Al-Afiq Yeong

Register now at https://accuonsea.uk/tickets/

Build fixes for #FreeBSD #ports graphics/drm-61-kmod and graphics/drm-66-kmod have landed. This was the show-stopper.

I have now submitted a patch to upgrade the #NVIDIA #GPU #driver set to 595.71.05 as Bug 295058

https://bugs.freebsd.org/bugzilla/show_bug.cgi?id=295058

and opened the corresponding review D56851.

https://reviews.freebsd.org/D56851

This seems to be a bugfix release.

https://www.nvidia.com/en-us/drivers/details/267226/

Information about the Linux counterpart is here.

https://www.nvidia.com/en-us/drivers/details/267223/

GPU-accelerated terminal environment in Zig

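Attyx is a GPU-accelerated terminal environment written in Zig that ships with tmux-like features built in: sessions, splits, tabs, popups, a status bar, and a command palette. It uses Metal on macOS and OpenGL on Linux, and comes in at under 5 MB. The developer built it to understand how terminals work and to learn Zig, and it is now stable enough for daily work. It is implemented independently of existing GPU terminals such as Ghostty and Kitty.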

Hackable PyTorch RL Library with Distributional Algorithms (D4PG, DSAC, DPPO)

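e3rl is a PyTorch-based reinforcement learning (RL) library designed to make full use of the GPU, and it includes distributional RL algorithms such as D4PG, DSAC, and DPPO. It supports CUDA, Apple Silicon (MPS), and CPU, and provides examples and hyperparameters so that experiments across a range of gym environments are easy to run. It is an open-source tool that researchers and developers can use to apply and experiment with distributional RL quickly.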

https://github.com/e3ntity/e3rl

#reinforcementlearning #pytorch #distributionalrl #gpu #deeplearning

Sudo su (@sudoingX)

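A request to share any cases where TurboQuant or another KV-cache compression technique has achieved very high performance on a single-GPU setup. The author says that if the results hold up, they will test it themselves and publish the results so the next developers can refer to them.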

if you or someone you know has hit real crazy numbers on a single gpu setup with turboquant or any kv-cache compression scheme, point me. i will test it on my machines. if it delivers, i amplify you and your work, and ship the receipts publicly so the next builder does not have

What is AMD? Learn the history of one of the leaders in the CPU and GPU markets

OpenCL 3.1 is here.

The Khronos Group has moved several capabilities into the core spec, including SPIR-V kernels, subgroups, and integer dot products.

Also includes improvements to the memory model and synchronization, plus better alignment with Vulkan via device UUID queries.

Implementations are already underway across major vendors and open source projects.

- Full Blog: https://www.khronos.org/blog/opencl-3.1-is-here?utm_medium=social&utm_source=mastodon&utm_campaign=OpenCL_3.1_is_here&utm_content=blog

- OpenCL specification GitHub

- Khronos Discord
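For readers unfamiliar with the integer dot products mentioned above, here is a small Python emulation of the arithmetic. It assumes the common packed form, where four signed 8-bit values share one 32-bit word and the products are summed into a wider accumulator. The function names are hypothetical, this is not OpenCL C, and saturation is not modeled.

```python
def pack_4x8(values: list[int]) -> int:
    """Pack four signed 8-bit integers into one 32-bit word, lowest byte first."""
    if len(values) != 4 or any(not -128 <= v <= 127 for v in values):
        raise ValueError("expected four values in the range [-128, 127]")
    return sum((v & 0xFF) << (8 * i) for i, v in enumerate(values))


def unpack_4x8_signed(word: int) -> list[int]:
    """Split a 32-bit word into four signed 8-bit lanes, lowest byte first."""
    lanes = []
    for i in range(4):
        byte = (word >> (8 * i)) & 0xFF
        # Reinterpret the unsigned byte as two's-complement signed.
        lanes.append(byte - 256 if byte >= 128 else byte)
    return lanes


def dot_4x8_signed(a: int, b: int, acc: int = 0) -> int:
    """Emulate a signed 4x8-bit packed dot product added to an accumulator."""
    lanes_a = unpack_4x8_signed(a)
    lanes_b = unpack_4x8_signed(b)
    return acc + sum(x * y for x, y in zip(lanes_a, lanes_b))


a = pack_4x8([1, -2, 3, -4])
b = pack_4x8([5, 6, -7, 8])
result = dot_4x8_signed(a, b)  # 1*5 + (-2)*6 + 3*(-7) + (-4)*8
```

This form is typically used for quantized inference workloads, where a single hardware instruction can perform the four multiply-adds in one step.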

On the eve of IWOCL 2026, the Khronos® OpenCL Working Group has released OpenCL™ 3.1, bringing widely deployed, field-proven capabilities into the core specification to expand functionality, including SPIR-V ingestion, that developers will be able to rely on across conformant implementations. The new specification arrives into a growing OpenCL ecosystem, with implementations from multiple silicon vendors, particularly in mobile and embedded