Hugging Models (@HuggingModels)

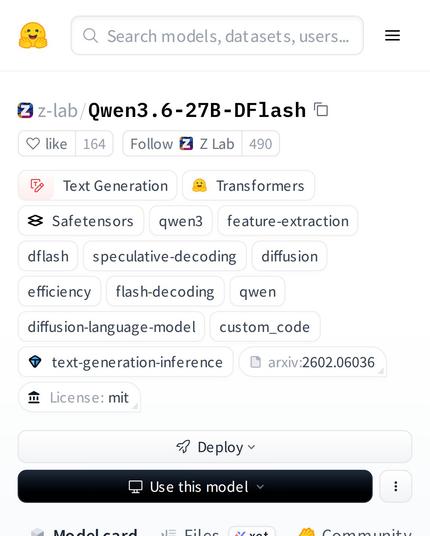

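z-lab/Qwen3.6-27B-DFlash has been introduced, combining a Qwen3 base with a new diffusion-based speculative decoding method. The model uses flash decoding to improve text-generation speed and efficiency, and it is drawing attention in the AI community.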

https://x.com/HuggingModels/status/2049772758771646701

#qwen3 #speculativedecoding #textgeneration #diffusion #flashdecoding

Hugging Models (@HuggingModels) on X

Imagine a model that combines the power of Qwen3 with a new diffusion-based speculative decoding. That's z-lab/Qwen3.6-27B-DFlash. It's a text-generation transformer that uses flash decoding for speed and efficiency. The AI community is buzzing about this one.