"Ray-Ban Meta" and "Oakley Meta" finally arrive in Japan. On sale May 21, from 73,700 yen | Gadget Gate https://www.yayafa.com/2804093/ #AgenticAi #AI #AirPods #android #Apple #ArtificialGeneralIntelligence #ArtificialIntelligence #Google #iPad #iPhone #LLAMA #Mac #Meta #MetaAI #Microsoft #PC #playstation #samsung #エージェント型AI #ガジェット #スマホ、スマートフォン #ニュース #レビュー #人工知能 #汎用人工知能

IBM adds Meta Llama 3 to Watsonx, expanding its AI offerings https://www.yayafa.com/2804091/ #AgenticAi #AI #ArtificialGeneralIntelligence #ArtificialIntelligence #LLAMA #Meta #MetaAI #エージェント型AI #人工知能 #汎用人工知能

🤖 [TechCrunch] Google takes a page from Meta's book and announces new audio-powered smart glasses

#AI #SztucznaInteligencja #TechNews #TechCrunch #ArtificialIntelligence #technology #socialmedia #si #Google #Gemini #DeepMind #Meta #Llama #Zuckerberg

Finally in Japan! Our editorial staff went hands-on with the new "AI smart glasses" from Meta × EssilorLuxottica | Lifehacker Japan https://www.yayafa.com/2803934/ #AgenticAi #AI #ArtificialGeneralIntelligence #ArtificialIntelligence #LLAMA #Meta #MetaAI #エージェント型AI #人工知能 #汎用人工知能

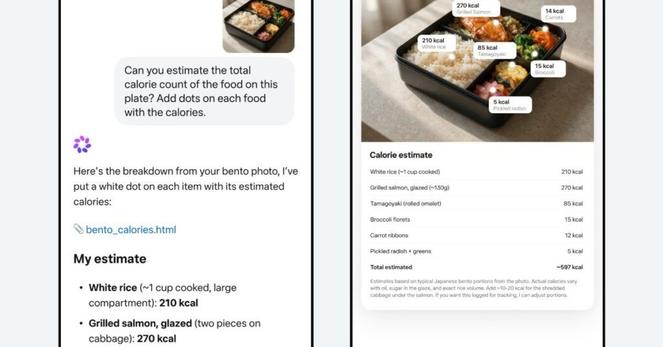

Meta announces "Muse Spark," a new AI that understands the world through vision; more efficient than "Llama" and set to be integrated into AI glasses – ITmedia AI+ https://www.yayafa.com/2803932/ #AgenticAi #AI #AIPlusTOPStory #ArtificialGeneralIntelligence #ArtificialIntelligence #LLAMA #Meta #MetaAI #アプリ・Web #エージェント型AI #人工知能 #企業・業界動向 #汎用人工知能 #生成AIニュース #製品動向 #速報

Ray-Ban Meta finally arrives in Japan: from 73,700 yen, on sale May 21 (ASCII) – Yahoo! News https://www.yayafa.com/2803733/ #AgenticAi #AI #ArtificialGeneralIntelligence #ArtificialIntelligence #LLAMA #Meta #MetaAI #エージェント型AI #人工知能 #汎用人工知能

News: Meta introduces new AI glasses features that support daily life for people with visual impairments and others – AI Watch https://www.yayafa.com/2803582/ #AgenticAi #AI #AI_Glasses #AIガジェット・PC #AI活用 #ArtificialGeneralIntelligence #ArtificialIntelligence #LLAMA #Meta #MetaAI #エージェント型AI #人工知能 #医療・ヘルスケア #汎用人工知能 #生活・個人向けサービス

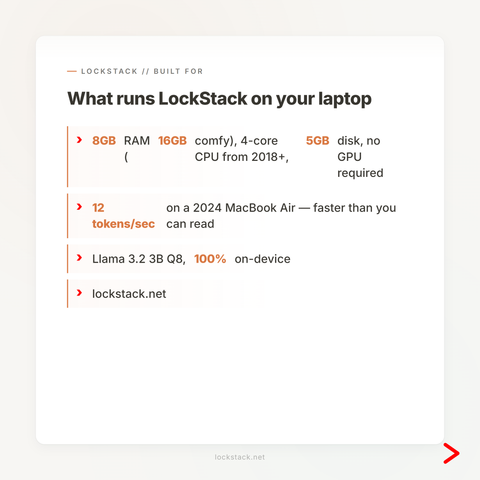

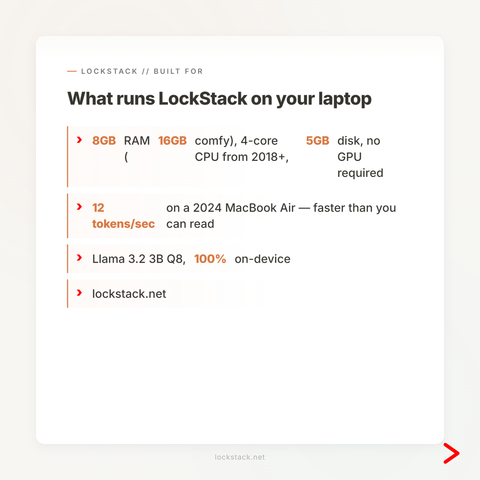

Learn how to unload every loaded llama.cpp router model with curl and jq, free VRAM safely, and avoid restarting llama-server in local LLM workflows.

#Cheatsheet #Self-Hosting #SelfHosting #LLM #AI #DevOps #llama.cpp

https://www.glukhov.org/llm-hosting/llama-cpp/unload-llama-cpp-router-models/
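
A rough Python sketch of the idea in the post above (the linked cheatsheet itself uses curl and jq). Everything specific here is an assumption rather than something confirmed by the post: the `GET /models` and `POST /models/unload` paths, the `data[].status.value == "loaded"` shape of the listing, the `{"model": ...}` request body, and the `http://localhost:8080` base URL. The helper names `loaded_model_ids` and `unload_all` are hypothetical. Check the llama-server documentation for your build before relying on it.

```python
import json
import urllib.request


def loaded_model_ids(listing):
    """Return ids of models marked as loaded in a /models-style listing.

    Assumed shape: {"data": [{"id": ..., "status": {"value": "loaded"}}, ...]}.
    A plain-string status is tolerated as well.
    """
    ids = []
    for entry in listing.get("data", []):
        status = entry.get("status")
        value = status.get("value") if isinstance(status, dict) else status
        if value == "loaded":
            ids.append(entry["id"])
    return ids


def unload_all(base_url="http://localhost:8080"):
    """Ask the router to unload every model it reports as loaded.

    Endpoint paths and payload are assumptions, not a confirmed API.
    """
    with urllib.request.urlopen(f"{base_url}/models") as resp:
        listing = json.load(resp)
    for model_id in loaded_model_ids(listing):
        body = json.dumps({"model": model_id}).encode("utf-8")
        req = urllib.request.Request(
            f"{base_url}/models/unload",
            data=body,
            headers={"Content-Type": "application/json"},
            method="POST",
        )
        with urllib.request.urlopen(req) as resp:
            resp.read()  # drain the response; the body is not inspected here
        print(f"unloaded {model_id}")
```

Calling `unload_all()` against a running router would issue one unload request per loaded model. The filtering step is kept in its own function so it can be checked against a saved listing without touching a live server.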