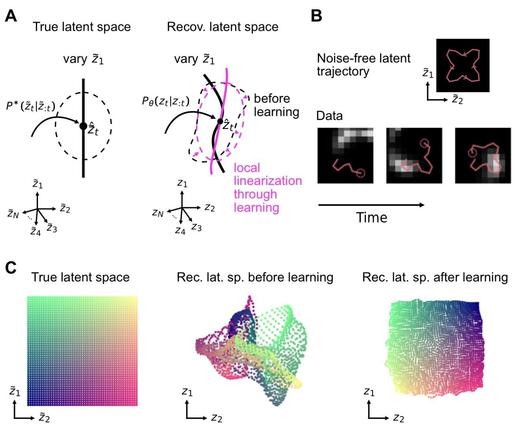

🧠 New preprint by Fabian A. Mikulasch & @fzenke: "Understanding Self-Supervised #Learning via #LatentDistribution Matching" proposes a unifying theoretical framework for #SelfSupervisedLearning.

The paper reframes #SSL as latent distribution matching, connecting contrastive, non-contrastive, predictive, and stop-gradient methods through a common probabilistic principle linking alignment, uniformity, and latent entropy.