Подсчёт долей фракций руды на конвейере: SAM2 для разметки, YOLO и проблемы с перекрытием

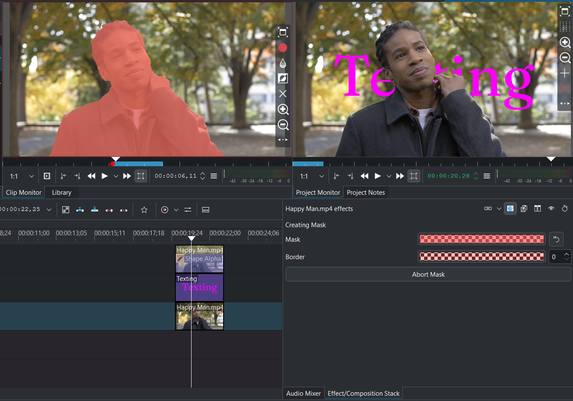

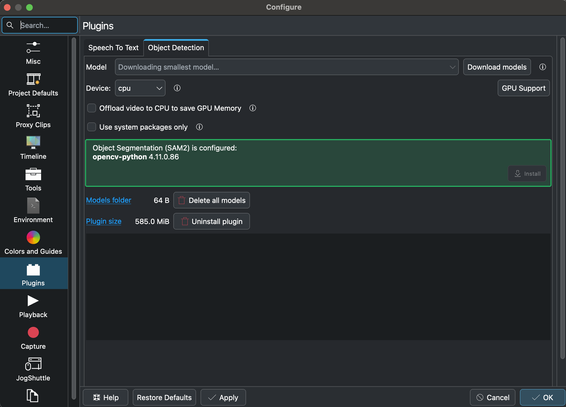

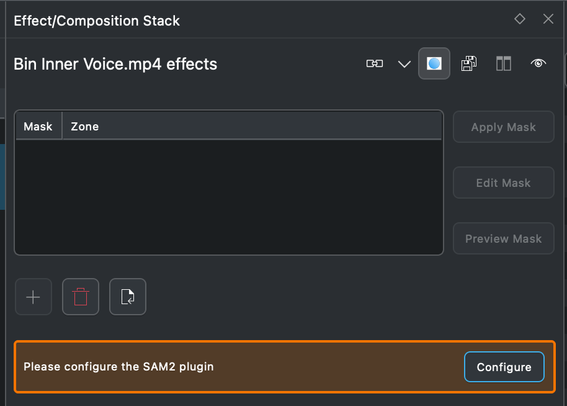

На производственной площадке стоит камера над лентой конвейера, она снимает поток , а система считает доли трёх цветовых фракций — серо-белой, оранжевой, розовой — и пишет результат в JSON для следующего этапа обработки. Цвет фракции используется как косвенный признак химического состава — технолог по нему оценивает качество партии. Заказчику нужна всего одна цифра — доля оранжевой фракции . Для предприятия эта фракция самая интересная по составу, остальные классы имеют второстепенное значения. Эту цифру нужно предоставлять в режиме 24/7, без расхождений между сменами. Камера стоит в закрытом помещении внутри здания. Освещение искусственное, прожектора фиксированные. Естественного света нет, влажность стабильна, поэтому большинство стандартных проблем компьютерного зрения на улице — блики на мокрых камнях, изменение цвета по времени суток, тени от солнца — в нашем случае не возникают и в статье обсуждаться не будут.

https://habr.com/ru/articles/1034836/

#sam #instance_segmentation #yolo #SAM2 #машинное_обучение #машинное_зрение #computer_vision #разметка_данных #data_labeling