fly51fly (@fly51fly)

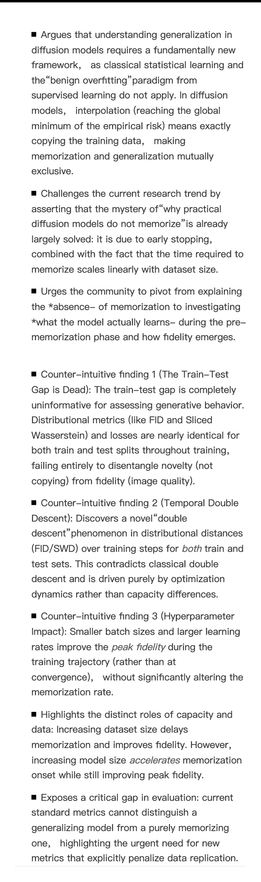

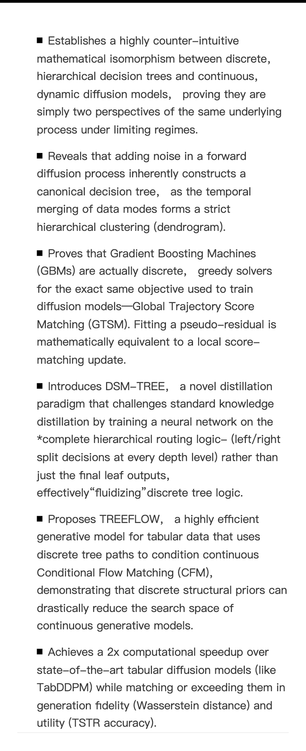

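A research paper has been released arguing that the generalization properties of diffusion models need to be rethought. It revisits the theoretical understanding of diffusion models, a core technology of generative AI, and may have important implications for model interpretation and research directions.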

https://x.com/fly51fly/status/2052865384320237938

#diffusionmodels #generativeai #research #machinelearning #ai