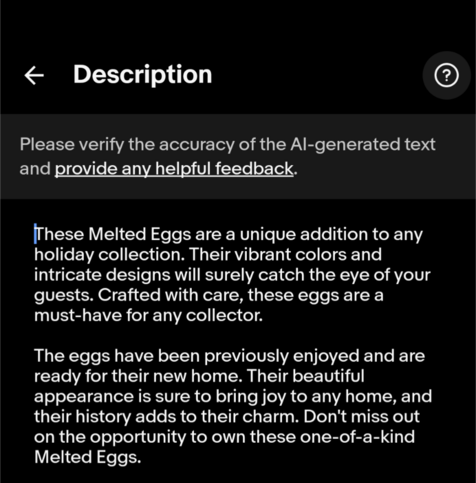

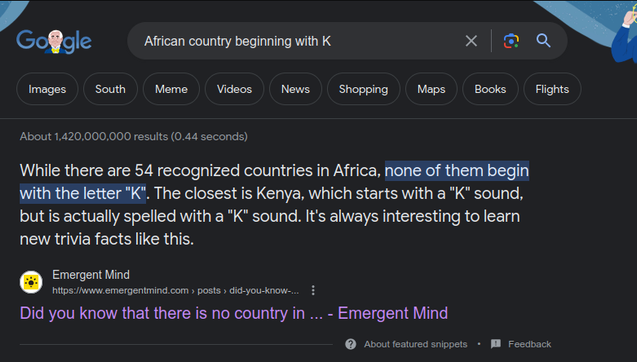

Quora's AI answers made up the melting point of eggs, and then Google picked it up and responded affirmatively that you can indeed melt eggs.

Then people wrote articles about how stupid it is that Google says eggs can melt. Then Google fixed the answer.

Then Google ingested an article about how stupid it is that Google says you can melt eggs, and suddenly Google started answering affirmatively again that you can melt eggs, citing the article about how stupid Google is for thinking you can melt eggs.