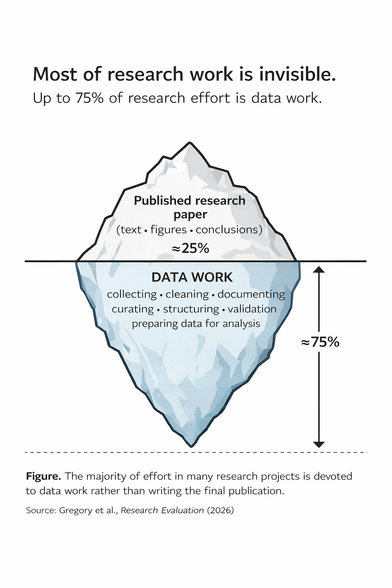

We evaluate science mostly through papers. But researchers report that up to 75% of project effort is data work — collecting, cleaning, documenting, and preparing datasets. A reminder that research outputs ≠ research work.

New paper in Research Evaluation: https://doi.org/10.1093/reseval/rvag008

#ResponsibleMetrics #OpenScience #DataCitation #ResearchEvaluation