A year-long publication ban. arXiv loses patience and penalizes researchers for AI-generated gibberish

Content mass-produced by artificial intelligence (known in the industry as "AI slop") has seeped into nearly every corner of the web, including scientific literature.

Until now, fabricated citations, unedited responses pasted straight from a chat window, and even completely nonsensical figures have managed to slip past editors and reviewers. It looks, however, as though the era of impunity in academia is coming to an end. arXiv, one of the world's most important repositories of scientific preprints, is introducing very specific rules and penalties for authors who try to cut corners.

Who is responsible for the machine's hallucinations?

The new guidelines were announced by Thomas Dietterich, professor emeritus at Oregon State University and a member of arXiv's advisory board and moderation team. In a statement on social media, he reminded authors submitting work to the service that they must adhere to the standards of scholarly communication. They are expected to take care in preparing sections, tables, figures, and the bibliography.

Dietterich stressed a key principle: the people who sign a manuscript are always responsible for its final content. If researchers carelessly submit AI-generated material containing errors, fabricated sources, plagiarized content, or misleading data, the blame falls solely on them, not on the algorithm.

A year of forced leave

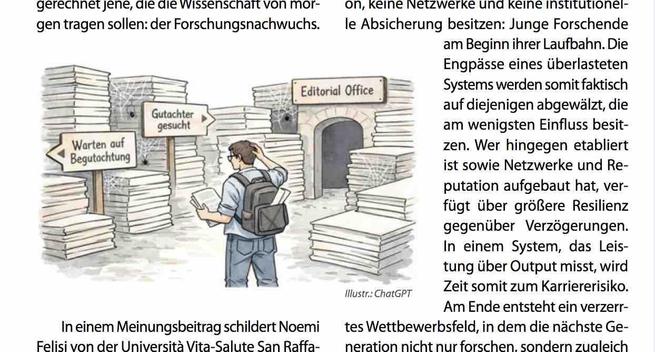

The consequences for authors who break these rules are tangible. The arXiv moderation team has decided that detecting clear violations stemming from the use of AI in a paper will result in a one-year ban on submitting to the service for every researcher listed as an author. In addition, any of their future papers, even after the ban has expired, can be posted on arXiv only after first passing full peer review at a traditional scientific journal.

For fields such as astrophysics or mathematics, where posting preprints to arXiv immediately is a standard and extremely important step in the scientific process, this is a very severe sanction. Being cut off from the platform means, in practice, a delay in communicating one's findings to the rest of the world.

The system is not infallible

Of course, rules this strict carry a risk of abuse. A malicious researcher could in theory generate an error-riddled text and list as co-authors people who had nothing to do with the work, in an attempt to block them from publishing. To prevent such situations and protect innocent researchers, arXiv has built an appeals mechanism into its procedures.
