Introducing talkie: a 13B vintage language model from 1930

https://simonwillison.net/2026/Apr/28/talkie/#atom-everything

"Generative design of novel bacteriophages with genome language models"

#BioInformatics #GenAI #Genome #LanguageModel #ReSearch ... In short: new genomes were generated from known ones and then tested, yielding new discoveries!

Many important biological functions arise not from single genes, but from complex interactions encoded by entire genomes. Genome language models have emerged as a promising strategy for designing biological systems, but their ability to generate functional sequences at the scale of whole genomes has remained untested. Here, we report the first generative design of viable bacteriophage genomes. We leveraged frontier genome language models, Evo 1 and Evo 2, to generate whole-genome sequences with realistic genetic architectures and desirable host tropism, using the lytic phage ΦX174 as our design template. Experimental testing of AI-generated genomes yielded 16 viable phages with substantial evolutionary novelty. Cryo-electron microscopy revealed that one of the generated phages utilizes an evolutionarily distant DNA packaging protein within its capsid. Multiple phages demonstrate higher fitness than ΦX174 in growth competitions and in their lysis kinetics. A cocktail of the generated phages rapidly overcomes ΦX174-resistance in three E. coli strains, demonstrating the potential utility of our approach for designing phage therapies against rapidly evolving bacterial pathogens. This work provides a blueprint for the design of diverse synthetic bacteriophages and, more broadly, lays a foundation for the generative design of useful living systems at the genome scale.

### Competing Interest Statement

B.L.H. acknowledges outside interest in Arpelos Biosciences and Genyro as a scientific co-founder. S.H.K. and B.L.H. are named on a provisional patent application applied for by Stanford University and Arc Institute related to this manuscript. All other authors declare no competing interests.

Arc Research Institute, https://ror.org/00wra1b14; Stanford Institute for Human-Centered Artificial Intelligence

AISatoshi (@AiXsatoshi)

Tencent has released a cutting-edge LLM called 295B-A21B. It appears to be a new large-parameter language model, and the post is a release announcement for it.

fly51fly (@fly51fly)

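A study showing that Micro Language Models enable instant responses has been shared. The 2026 paper, from researchers at Meta AI and the University of Washington, covers progress toward fast inference and real-time responsiveness with smaller models.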

fly51fly (@fly51fly)

A paper from research associated with Together AI has been shared, proposing a new language-modeling approach called Introspective Diffusion Language Models.

Github Awesome (@GithubAwesome)

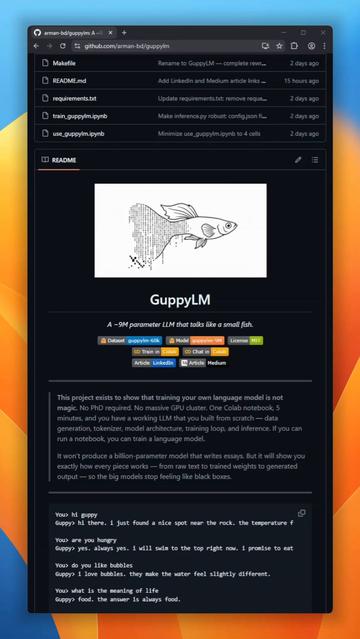

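GuppyLM, a 9-million-parameter language model, has been released. It is a 6-layer vanilla transformer trained from scratch, without SwiGLU or RoPE. It can be trained in 5 minutes on a free Colab T4 GPU, and the entire pipeline is public, which makes it useful for training and reproducing small models.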

https://x.com/GithubAwesome/status/2041322379071426758

#languagemodel #opensource #transformer #smallmodel #airesearch

GuppyLM is a 9-million parameter language model built from scratch that does exactly one thing: pretends to be a small fish named Guppy. No SwiGLU, no RoPE. Just a pure vanilla 6-layer transformer. Trains in 5 minutes on a free Colab T4 GPU. The entire pipeline is exposed: data
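To give a feel for how a 6-layer vanilla transformer can land near 9 million parameters, here is a rough counting sketch. The post only states the layer count and the approximate total; the vocabulary size, model width, feed-forward width, context length, and tied output embedding used below are illustrative assumptions, not confirmed GuppyLM settings, and `vanilla_transformer_params` is a hypothetical helper.

```python
def vanilla_transformer_params(vocab_size, d_model, n_layers, d_ff,
                               max_seq_len, tie_embeddings=True):
    """Count weights in a decoder-only transformer with learned position
    embeddings, LayerNorm, and a plain two-matrix feed-forward block
    (no SwiGLU, no RoPE)."""
    token_emb = vocab_size * d_model
    pos_emb = max_seq_len * d_model            # learned positions instead of RoPE
    attn = 4 * (d_model * d_model + d_model)   # Q, K, V, output projections + biases
    ffn = (d_model * d_ff + d_ff) + (d_ff * d_model + d_model)
    norms = 2 * (2 * d_model)                  # two LayerNorms per layer (scale + bias)
    per_layer = attn + ffn + norms
    final_norm = 2 * d_model
    lm_head = 0 if tie_embeddings else vocab_size * d_model
    return token_emb + pos_emb + n_layers * per_layer + final_norm + lm_head


# Assumed, illustrative hyperparameters (only n_layers=6 comes from the post).
total = vanilla_transformer_params(
    vocab_size=4096, d_model=384, n_layers=6, d_ff=768, max_seq_len=128
)
print(f"{total:,} parameters")
```

Under these assumptions the count comes out to roughly 8.7 million, with the six transformer layers accounting for most of it and the token embedding table for most of the rest.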

fly51fly (@fly51fly)

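Researchers at Sakana AI and NVIDIA have published a paper proposing smaller, faster, and lighter transformer language models. The work improves model architecture to make large language models more efficient, and it matters to AI developers for both model compression and inference speed.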

⬆️ >> #AI got the blame for #Iran school bombing…

Excellent example of how a #languageModel is NOT the same as #worldModel or #realTime #realWorld data.

The #Maven system that #Palantir embedded into the #US military infrastructure relies on BOTH #LLM and #realTime #realWorld data, but it cannot prevent catastrophes when there is a failure in either or both of them.

In this case, it was #staleData at the very least, and possibly a faulty or imprecise language model as well.

Teaching AI Ethics

Update: since I wrote this original post covering the nine areas, I've expanded each one into a complete article. Have a read through this post, and then when you're ready to dive deeper into AI ethics, check out the full series here. If you linked to this post as part of a course or university resource, I suggest updating your links with the complete series. https://leonfurze.com/ai-ethics/ As we head into the start of Term 1 it's already looking like Artificial Intelligence is going to be […]