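nanowhale is a language model of about 110M parameters, trained from scratch on the DeepSeek-V4 architecture. The repo includes the model code, config, and tokenizer, along with pretraining (5K steps on FineWeb-Edu) and SFT (3K steps on SmolTalk) scripts and performance results. It documents design features such as MLA, MoE, and Hyper-Connections. It also lists known issues, including NaNs under bf16 and weights being re-initialized by from_pretrained. The project is released under the MIT license.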

Show HN: Meaning forks. SRT sees it

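SRT (Semiotic-Reflexive Transformer) is an adapter architecture that adds lightweight, reflexive, meaning-aware modules to an existing frozen causal language model. The modules detect points where meaning forks, let the model reflexively track its own processing of meaning, and inject semantic corrections when needed. The 7B backbone stays completely frozen and only about 14.6 million parameters are trained. This makes training fast and lets the adapter be attached to different backbones. SRT draws on C.S. Peirce's semiotic theory and is designed to make the model aware that the same word can be interpreted differently by different communities.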
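The arithmetic below shows how small the trained part is relative to the frozen backbone. It is a back-of-the-envelope sketch that assumes the "7B" backbone has exactly 7×10⁹ parameters. The real backbone's count will differ somewhat.

```python
# Share of all parameters that SRT actually trains.
# Assumption: the "7B" backbone is taken as exactly 7e9 parameters.
BACKBONE_PARAMS = 7_000_000_000   # frozen: receives no gradient updates
ADAPTER_PARAMS = 14_600_000       # ~14.6M trainable SRT parameters (figure from the post)


def trainable_fraction(trainable: int, frozen: int) -> float:
    """Fraction of the combined model's parameters that are trainable."""
    return trainable / (trainable + frozen)


frac = trainable_fraction(ADAPTER_PARAMS, BACKBONE_PARAMS)
print(f"trainable share: {frac:.4%}")
```

The result is roughly 0.2%. This is why only the adapter's gradients and optimizer state need to be kept in training memory.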

Can a #LanguageModel paint? I've built an app that gets language models to paint a piece iteratively (one stroke at a time) rather than producing it in one shot from a prompt. Not sure if this counts as #GenerativeArt.

https://www.etive-mor.com/blog/can-a-language-model-paint/

https://www.liamlaverty.com/paint-by-language-model/inspect/chagall-fiddler-village-001
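The loop below contrasts the iterative, stroke-by-stroke approach with one-shot generation. It is a minimal sketch under stated assumptions. The post does not describe the app's prompts or stroke format, so `Stroke`, `ProposeFn`, and `dummy_propose` are hypothetical. In the real app, a language-model call would take the place of `dummy_propose`.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Stroke:
    """One brush stroke as a straight segment on a unit canvas (hypothetical format)."""
    x0: float
    y0: float
    x1: float
    y1: float
    color: str


# Hypothetical stand-in for a language-model call: given the prompt and the
# strokes painted so far, return the next stroke.
ProposeFn = Callable[[str, List[Stroke]], Stroke]


def paint_iteratively(prompt: str, propose: ProposeFn, steps: int) -> List[Stroke]:
    """Build a painting one stroke at a time instead of in a single shot."""
    strokes: List[Stroke] = []
    for _ in range(steps):
        # Each call sees the canvas so far, so later strokes can react to earlier ones.
        # A copy is passed so the proposer cannot mutate the history.
        strokes.append(propose(prompt, list(strokes)))
    return strokes


def dummy_propose(prompt: str, so_far: List[Stroke]) -> Stroke:
    """Toy proposer: draws horizontal lines that move down the canvas."""
    y = 0.1 * len(so_far)
    return Stroke(0.0, y, 1.0, y, "blue")


painting = paint_iteratively("a fiddler over a village", dummy_propose, steps=5)
print(len(painting))  # 5
```

Passing the growing stroke history back into every call is what separates this from one-shot generation. Each step is conditioned on the current state of the canvas.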

0xMarioNawfal (@RoundtableSpace)

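A new 13B AI model trained only on text published before 1931 has been released. It was trained without the internet, Wikipedia, or modern code, so its worldview reflects the world as of December 31, 1930. This sets it apart from existing models that depend on modern web data.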

0xMarioNawfal (@RoundtableSpace) on X

Researchers just released a 13B model trained exclusively on text published before 1931. No internet. No Wikipedia. No modern code. Its worldview is frozen at December 31, 1930. The reason is fascinating — every major model today shares a common ancestor in the modern web,

Introducing talkie: a 13B vintage language model from 1930

https://simonwillison.net/2026/Apr/28/talkie/#atom-everything
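A knowledge cutoff like talkie's comes down to a date filter over the training corpus. The sketch below illustrates the idea. The `Doc` record and the sample corpus are hypothetical, and the post does not describe talkie's actual data pipeline. Only the December 31, 1930 cutoff comes from the source.

```python
from datetime import date
from typing import Iterable, Iterator, NamedTuple


class Doc(NamedTuple):
    """Hypothetical corpus record; talkie's real data format is not described."""
    title: str
    published: date
    text: str


# Cutoff from the post: the model's worldview ends on December 31, 1930.
CUTOFF = date(1930, 12, 31)


def pre_cutoff(docs: Iterable[Doc], cutoff: date = CUTOFF) -> Iterator[Doc]:
    """Yield only documents published on or before the cutoff date."""
    for doc in docs:
        if doc.published <= cutoff:
            yield doc


corpus = [
    Doc("On the Origin of Species", date(1859, 11, 24), "..."),
    Doc("Hypothetical 1931 pamphlet", date(1931, 1, 1), "..."),
]
kept = [d.title for d in pre_cutoff(corpus)]
print(kept)  # ['On the Origin of Species']
```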

"Generative design of novel bacteriophages with genome language models"

#BioInformatics #GenAI #Genome #LanguageModel #ReSearch ... In short: generating and testing genomes derived from known ones, with new discoveries!

Generative design of novel bacteriophages with genome language models

Many important biological functions arise not from single genes, but from complex interactions encoded by entire genomes. Genome language models have emerged as a promising strategy for designing biological systems, but their ability to generate functional sequences at the scale of whole genomes has remained untested. Here, we report the first generative design of viable bacteriophage genomes. We leveraged frontier genome language models, Evo 1 and Evo 2, to generate whole-genome sequences with realistic genetic architectures and desirable host tropism, using the lytic phage ΦX174 as our design template. Experimental testing of AI-generated genomes yielded 16 viable phages with substantial evolutionary novelty. Cryo-electron microscopy revealed that one of the generated phages utilizes an evolutionarily distant DNA packaging protein within its capsid. Multiple phages demonstrate higher fitness than ΦX174 in growth competitions and in their lysis kinetics. A cocktail of the generated phages rapidly overcomes ΦX174-resistance in three E. coli strains, demonstrating the potential utility of our approach for designing phage therapies against rapidly evolving bacterial pathogens. This work provides a blueprint for the design of diverse synthetic bacteriophages and, more broadly, lays a foundation for the generative design of useful living systems at the genome scale.

### Competing Interest Statement

B.L.H. acknowledges outside interest in Arpelos Biosciences and Genyro as a scientific co-founder. S.H.K. and B.L.H. are named on a provisional patent application applied for by Stanford University and Arc Institute related to this manuscript. All other authors declare no competing interests.

Arc Research Institute, https://ror.org/00wra1b14; Stanford Institute for Human-Centered Artificial Intelligence

AISatoshi (@AiXsatoshi)

Tencent has released a frontier LLM named 295B-A21B. If the name follows the common MoE naming convention, it denotes about 295B total parameters, of which 21B are active per token.
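The "total vs. active" distinction in names like 295B-A21B comes from mixture-of-experts routing. A router picks only a few experts per token, so most parameters sit idle on any given step. The sketch below shows generic top-k softmax gating. It is not Tencent's router. The 8-expert router scores and k=2 are hypothetical, and reading the name as total/active parameters is an assumption based on common naming practice.

```python
import math
from typing import List, Tuple

# Assumption: "295B-A21B" follows the common "<total>-A<active>" MoE naming.
TOTAL_PARAMS_B = 295
ACTIVE_PARAMS_B = 21


def top_k_route(router_logits: List[float], k: int) -> List[Tuple[int, float]]:
    """Generic top-k MoE gating: keep the k highest-scoring experts and
    renormalize their softmax weights to sum to 1. Only these k experts run
    for the token, which is why active parameters are far fewer than total."""
    top = sorted(range(len(router_logits)), key=lambda i: router_logits[i], reverse=True)[:k]
    m = max(router_logits[i] for i in top)  # subtract the max for numerical stability
    exps = [math.exp(router_logits[i] - m) for i in top]
    z = sum(exps)
    return [(i, e / z) for i, e in zip(top, exps)]


# Toy router scores for one token over 8 hypothetical experts.
logits = [0.1, 2.0, -1.0, 1.5, 0.0, 0.3, -0.5, 1.0]
print(top_k_route(logits, k=2))  # experts 1 and 3 are selected
print(f"active share: {ACTIVE_PARAMS_B / TOTAL_PARAMS_B:.1%}")
```

In a real model the active count also includes always-on components such as attention layers and embeddings. For that reason the active share is not simply k divided by the number of experts.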

fly51fly (@fly51fly)

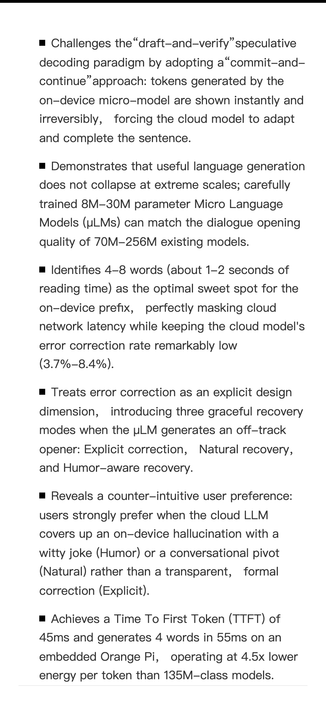

A study shows that Micro Language Models can enable instant responses. The 2026 paper by researchers at Meta AI and the University of Washington addresses fast inference and real-time responsiveness using smaller models.

fly51fly (@fly51fly)

A paper from Together AI research proposing a new language-modeling approach, Introspective Diffusion Language Models, was shared.

Github Awesome (@GithubAwesome)

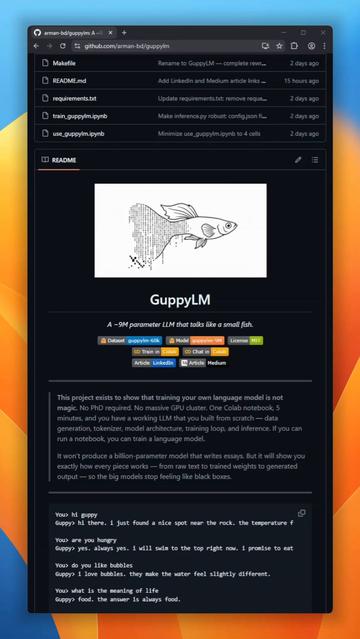

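GuppyLM, a new 9-million-parameter language model, has been released. It is a 6-layer vanilla transformer trained from scratch, with no SwiGLU and no RoPE. It trains in 5 minutes on a free Colab T4 GPU, and the entire pipeline is public. This makes it useful for learning about and reproducing small-model training.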

https://x.com/GithubAwesome/status/2041322379071426758

#languagemodel #opensource #transformer #smallmodel #airesearch

Github Awesome (@GithubAwesome) on X

GuppyLM is a 9-million parameter language model built from scratch that does exactly one thing: pretends to be a small fish named Guppy. No SwiGLU, no RoPE. Just a pure vanilla 6-layer transformer. Trains in 5 minutes on a free Colab T4 GPU. The entire pipeline is exposed: data
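The sketch below counts the parameters in a small vanilla transformer like the one described. It shows how a 6-layer model lands in the single-digit millions. All sizes in `VanillaConfig` are hypothetical, picked to land near 9M; GuppyLM's actual config is not given in the post. The sketch also assumes GPT-2-style blocks: learned absolute position embeddings in place of RoPE, a plain two-layer MLP in place of SwiGLU, LayerNorm with bias, and an output head tied to the token embeddings.

```python
from dataclasses import dataclass


@dataclass
class VanillaConfig:
    # Hypothetical sizes for illustration only, not GuppyLM's real config.
    vocab_size: int = 4096
    max_seq_len: int = 128
    d_model: int = 320
    n_layers: int = 6
    d_ff: int = 1280  # 4 * d_model, plain (non-gated) MLP


def count_params(cfg: VanillaConfig, tied_embeddings: bool = True) -> int:
    """Count weights and biases in a GPT-2-style decoder-only transformer."""
    d = cfg.d_model
    tok_emb = cfg.vocab_size * d
    pos_emb = cfg.max_seq_len * d               # learned absolute positions (no RoPE)
    attn = 4 * (d * d + d)                      # Q, K, V and output projections with biases
    mlp = (d * cfg.d_ff + cfg.d_ff) + (cfg.d_ff * d + d)  # up and down projections, no gate
    norms = 2 * (2 * d)                         # two LayerNorms (scale + bias) per block
    per_layer = attn + mlp + norms
    final_norm = 2 * d
    lm_head = 0 if tied_embeddings else cfg.vocab_size * d
    return tok_emb + pos_emb + cfg.n_layers * per_layer + final_norm + lm_head


n = count_params(VanillaConfig())
print(f"{n:,} parameters (~{n / 1e6:.2f}M)")  # 8,750,080 parameters (~8.75M)
```

A SwiGLU block with the same `d_ff` would add a third `d_model × d_ff` gate matrix per layer. Dropping it is one of the simplifications that keep a model like this small and easy to follow.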