Generative AI in Physics?

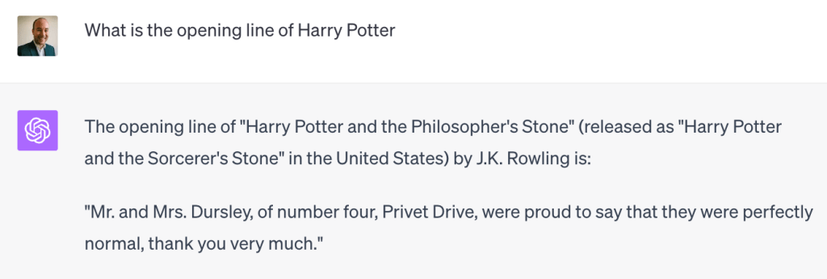

As a new academic year approaches we are thinking about updating our rules for the use of Generative AI by physics students. The use of GenAI for writing essays, etc, has been a preoccupation for many academic teachers. Of course in Physics we ask our students to write reports and dissertations, but my interest is in what we should do about the more mathematical and/or computational types of work. A few years ago I looked at how well ChatGPT could do our coursework assignments, especially Computational Physics, and it was hopeless. Now it’s much better, though still by no means flawless, and there are also many other variants on the table.

The basic issue here relates to something that I have mentioned many times on this blog, which is the fact that modern universities place too much emphasis on assessment and not enough on genuine learning. Students may use GenAI to pass assessments, but if they do so they don’t learn as much as they would had they done the working out for themselves. In the jargon, the assessments are meant to be formative rather than purely summative.

One school of thought holds that formative assessments should not gain credit at all in the era of GenAI, since “cheating” is likely to be widespread. The only secure method of assessment is through invigilated written examinations. Students will be up in arms if we cancel all the continuous assessment (CA), but a system based on 100% written examinations is one with which those of us of a certain age are very familiar.

Currently, most of our modules in theoretical physics in Maynooth involve 20% coursework and 80% unseen written examination. That is enough credit to ensure most students actually do the assignments, but the real purpose is that the students learn how to solve the sort of problems that might come up in the examination. A student who gets ChatGPT to do their coursework for them might get 20%, but they won’t know enough to pass the examination. More importantly they won’t have learnt anything. The learning is in the doing. It is the same for mathematical work as it is for a writing task; the student is supposed to think about the subject, not just produce an essay.

Another set of issues arises with computational and numerical work. I’m currently teaching Computational Physics, so am particularly interested in what rules we might adopt for that subject. A default position favoured by some is that students should not use GenAI at all. I think that would be silly. Graduates will definitely be using Copilot or equivalent if they write code in the world outside university, so we should teach them how to use it properly and effectively.

In particular, such methods usually produce a plausible answer, but how can a student be sure it is correct? It seems to me that we should place an emphasis on what steps a student has taken to check an answer, which of course they should do whether they used GenAI or did it themselves. If it’s a piece of code to do a numerical integration of a differential equation, for example, the student should test it using known analytic solutions to check it gets them right. If it’s the answer to a mathematical problem, one can check whether it does indeed solve the original equation (with the appropriate boundary conditions).
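A minimal sketch of the sort of check described above, in Python. Everything here is an illustrative assumption rather than anything taken from an actual assignment: the test problem (dy/dt = −y with y(0) = 1, whose analytic solution is exp(−t)), the fixed-step fourth-order Runge-Kutta integrator, and the names `rk4_solve`, `decay` and `global_error`. The idea is to compare the numerical answer with the known solution, and also to confirm that halving the step size reduces the error at roughly the rate the method is supposed to deliver.

```python
import math


def rk4_solve(f, t0, y0, t_end, n_steps):
    """Integrate dy/dt = f(t, y) from t0 to t_end using n_steps fixed RK4 steps."""
    h = (t_end - t0) / n_steps
    t, y = t0, y0
    for _ in range(n_steps):
        k1 = f(t, y)
        k2 = f(t + h / 2, y + h * k1 / 2)
        k3 = f(t + h / 2, y + h * k2 / 2)
        k4 = f(t + h, y + h * k3)
        y += h * (k1 + 2 * k2 + 2 * k3 + k4) / 6
        t += h
    return y


def decay(t, y):
    """Right-hand side of the test problem dy/dt = -y (assumed for illustration)."""
    return -y


def global_error(n_steps):
    """Absolute error at t = 2 against the analytic solution y(t) = exp(-t)."""
    numerical = rk4_solve(decay, 0.0, 1.0, 2.0, n_steps)
    return abs(numerical - math.exp(-2.0))


# Check 1: the numerical answer agrees with the known analytic solution.
err_coarse = global_error(20)
err_fine = global_error(40)

# Check 2: halving the step should cut the error by about 2**4 for a
# fourth-order method, so the observed order should be close to 4.
observed_order = math.log2(err_coarse / err_fine)

print(f"error with 20 steps: {err_coarse:.3e}")
print(f"error with 40 steps: {err_fine:.3e}")
print(f"observed order of convergence: {observed_order:.2f}")
```

The convergence check is the more demanding of the two: a subtly wrong integrator can still land near the right answer on an easy problem, but it will rarely show the expected order of convergence.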

Anyway, my reason for writing this piece is to see if anyone out there reading this blog has any advice to share, or even a link to their own Department’s policy on the use of GenAI in physics for me to copy, or rather adapt, for use in Maynooth! My backup plan is to ask ChatGPT to generate an appropriate policy…

#assessment #chatgpt #copilot #education #formativeAssessment #genai #generativeAi #physics #summativeAssessment #theoreticalPhysics