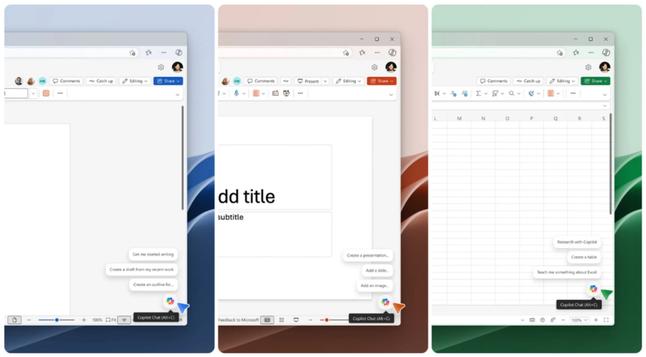

#Microsoft introduces new entry points and smart suggestions for #Copilot in #Office apps

https://gadgetflux.eu/copilot-acces-simplificat-n-word-excel-powerpoint/

Microsoft simplifies access to Copilot with new shortcuts and icons

🔗 https://tugatech.com.pt/t83208-a-microsoft-simplifica-o-acesso-ao-copilot-com-novos-atalhos-e-icones

Skills in SharePoint AI caught my eye this week: a gentle shift from Power Automate flows to natural-language agents right in your site.

Let me walk you through the quiet power: open the Copilot panel, describe a repetitive task such as extracting tech experience from employee reports, and it drafts a plan. Confirm, and it reads the docs, creates a flat list (with columns) on the fly, aggregates the data, and populates the items.

No dragging blocks; just conversation grounded in your content.

This turns scattered reports into a searchable knowledge base in minutes, favoring simple lists over folders for better discoverability. From the trenches, it's a pragmatic step for well-oiled workflows.
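
To make the "flat list over folders" idea concrete, here is a minimal Python sketch of the extract, aggregate, and populate steps described above. It does not call SharePoint or Copilot. The report texts, the `KNOWN_TECH` vocabulary, and the column names `Source` and `Technology` are all hypothetical, and simple keyword matching stands in for whatever the agent actually does.

```python
from collections import Counter

# Hypothetical stand-ins for employee reports; real content would live in the site.
REPORTS = {
    "alice.txt": "Worked with Python and Azure on the billing migration.",
    "bob.txt": "Built dashboards in Power BI; some Python scripting.",
}

# Hypothetical vocabulary of technologies to look for.
KNOWN_TECH = ["Python", "Azure", "Power BI"]


def extract_rows(reports, known_tech):
    """Return one flat row per (report, technology) mention."""
    rows = []
    for name, text in sorted(reports.items()):
        lowered = text.lower()
        for tech in known_tech:
            if tech.lower() in lowered:
                rows.append({"Source": name, "Technology": tech})
    return rows


def aggregate(rows):
    """Count how many reports mention each technology."""
    return Counter(row["Technology"] for row in rows)


rows = extract_rows(REPORTS, KNOWN_TECH)
counts = aggregate(rows)

for row in rows:
    print(row)
print(dict(counts))
```

Each row is a plain record with the same columns, so filtering or counting by `Technology` takes one line; the same information buried in per-employee folders would need a crawl first.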

AI tools such as #ChatGPT and #Copilot are increasingly part of our online activities, according to research among the SIDN Panel. The results show that #AI tools are being used more often and that their effect on website visits is growing.

https://www.sidn.nl/nieuws-en-blogs/ai-tools-nemen-steeds-groter-deel-van-zoek-en-browsegedrag-over

AI tools are taking over a growing share of search and browsing behaviour | Domain names | SIDN

For more and more internet users, AI tools such as ChatGPT and Copilot are part of their daily online behaviour, according to research among the members of the SIDN Panel. The results show that AI tools are being used more frequently and that their effect on website visits is growing.

https://torbenkopp.com/niemand-will-microsofts-minderwertige-ki-produkte-kaufen-oder-nutzen/

#microsoft #copilot #ki #ai #windows11 #business

🚨 Approvals in Microsoft 365 has changed… and most companies haven't even noticed yet.

In this live stream I'll show you how to turn manual, bureaucratic approvals into intelligent, automated, and traceable workflows with Teams, Power Automate, and Microsoft 365. 🔥

Live stream link 👉🏼 https://youtube.com/live/3khXE7abJOQ

#Microsoft365 #Approvals #PowerAutomate #GovernancaTI #AutomacaoDeProcessos #Produtividade #TransformacaoDigital #Copilot #SharePoint #Teams #GestaoEmpresarial #Inovacao #Processos