Tom Maiaroto (@tmaiaroto)

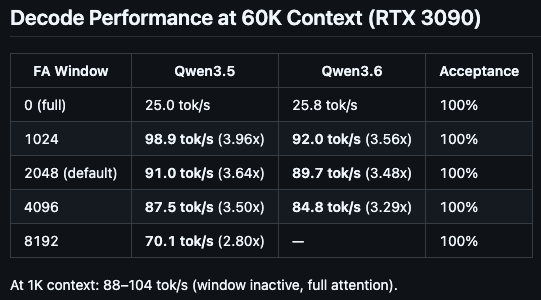

Atomic의 E4B 설정에서 128k 컨텍스트 윈도우로 약 96 tokens/sec 성능을 달성했다는 공유입니다. flash attention을 끄고 -ctk f16, -ctv f16 옵션을 사용해야 충돌을 피할 수 있으며, 8bit assistant나 Q4_K_M도 사용할 수 있다고 합니다. llama-swap 기반 테스트 결과입니다.

Tom Maiaroto (@tmaiaroto) on X

@ItsmeAjayKV @UnslothAI @googlegemma Ok, finally got the magic settings for E4B with Atomic's stuff. About 96 tokens/sec with 128k context window. Keep flash attention off and use -ctk f16 -ctv f16 otherwise it crashes (or did for me). I also use the 8bit assistant but Q4_K_M works too. This is from my llama-swap