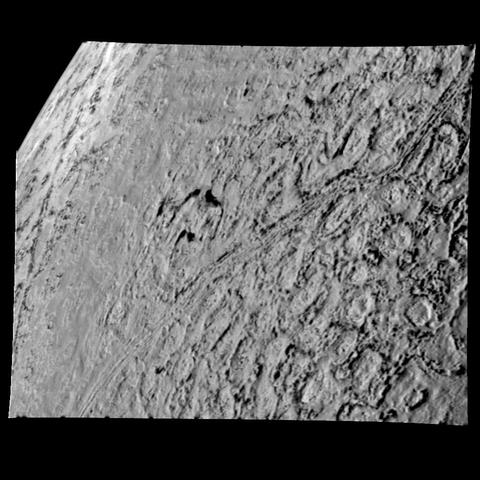

Triton High Resolution View of Northern Hemisphere

This is one of the most detailed views of Triton's surface, taken by NASA's Voyager 2 during its flyby of Neptune's large satellite early in the morning of Aug. 25, 1989. The picture was stored on the spacecraft's tape recorder and relayed to Earth later. http://photojournal.jpl.nasa.gov/catalog/PIA00061

More: https://images.nasa.gov/details/PIA00061

Credit: NASA/JPL

#triton #voyager #astrodon #astronomy #astrophotography #astrophysics