A Miami-based startup called Subquadratic came out of stealth last week with a single claim that’s either the most important architectural shift since the 2017 transformer paper or the most sophisticated AI hype in recent memory. They say they’ve built the first LLM that doesn’t rely on quadratic attention and that this lets them run a 12 million token context window at roughly one-fifth the cost of frontier models.

https://firethering.com/subq-12m-token-context-llm-subquadratic-attention/

SubQ's 12M Token Model Could Change How AI Handles Long Context. If It's Real. - Firethering

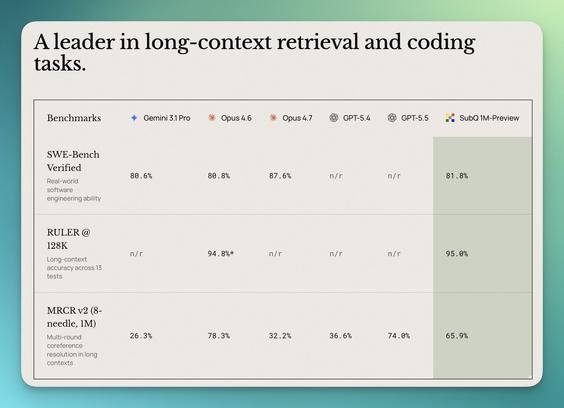

Every few years something shows up in AI that makes people stop and argue. Not argue about which model is better or whose benchmark is more honest. Argue about whether the rules just changed. SubQ is that argument right now. A Miami-based startup called Subquadratic came out of stealth last week with a single claim that's either the most important architectural shift since the 2017 transformer paper or the most sophisticated AI hype in recent memory. They say they've built the first LLM that doesn't rely on quadratic attention and that this lets them run a 12 million token context window at roughly one-fifth the cost of frontier models. The AI research community split within hours. Half are losing their minds. Half are explaining why this doesn't count. The truth is probably more interesting than either camp. Here's what we actually know.