I finally got my #truenas server using the #gpu for #openwebui running #deepseek Coder V2. I got so close before, with the system identifying the GPU and then not using it. I followed this guide and made only one change: I put the Open WebUI portion into a custom app YAML so that it would show up in the Apps list, since containers started with docker run don't show up under TrueNAS apps. They might with Portainer or something else, but I don't have that.

Here's the guide that I followed: https://burakberk.dev/deploying-ollama-open-webui-self-hosted/

It wound up just being two docker commands. Now I can start cleaning up the previous 5 attempts lol.

Running Ollama and Open WebUI Self-Hosted With AMD GPU


Last night I was up until 2 AM trying to get #truenas #amd drivers installed inside a #docker #container so that #ollama would actually use the #gpu. I was so close. It sees the GPU, it sees it has 16 GB of VRAM, and then it uses the #cpu.

TrueNAS locks down the root filesystem, so if you want to do much of anything, you have to do it inside a container. So I made a container for the #rocm drivers, which btw comes to about 40 GB in size.

It's detecting the GPU, but I don't know if the Ollama container is missing some commands it may need, e.g. rocminfo.
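A minimal sketch for checking whether a loaded model actually ended up on the GPU, assuming Ollama's HTTP API is reachable on its default port 11434. The /api/ps endpoint lists running models with a total size and a size_vram field, and comparing the two shows how much of the model sits in VRAM. The helper names (gpu_fraction, report, fetch_ps) are hypothetical, and the summary strings only loosely mirror the PROCESSOR column of ollama ps.

```python
import json
import urllib.request

# Assumption: Ollama listens on its default port 11434 on the same host.
OLLAMA_URL = "http://localhost:11434/api/ps"


def gpu_fraction(model: dict) -> float:
    """Share of a loaded model's memory that sits in VRAM (0.0 to 1.0)."""
    size = model.get("size", 0)
    if size == 0:
        return 0.0
    return model.get("size_vram", 0) / size


def report(ps_response: dict) -> list[str]:
    """One line per running model, saying where its weights live."""
    lines = []
    for model in ps_response.get("models", []):
        pct = round(gpu_fraction(model) * 100)
        if pct == 0:
            where = "100% CPU"  # the "sees the GPU, then uses the CPU" case
        elif pct == 100:
            where = "100% GPU"
        else:
            where = f"{100 - pct}%/{pct}% CPU/GPU"  # partial offload
        lines.append(f"{model['name']}: {where}")
    return lines


def fetch_ps(url: str = OLLAMA_URL) -> dict:
    """Ask the running Ollama server which models are currently loaded."""
    with urllib.request.urlopen(url, timeout=5) as resp:
        return json.load(resp)
```

With a model loaded, print("\n".join(report(fetch_ps()))) should print a 100% GPU line when offload works; a model stuck at 100% CPU points back at the driver or device passthrough inside the container.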

Another alternative, one I don't really want, is to install either #debian or Windows as a VM. Windows because I previously tested the application that runs locally in Windows on this machine, and it was super fast. It isn't ideal for RAM usage, but I may be able to run the models more easily with the #windows drivers than the #linux ones.

But anyway, last night was too much of #onemoreturn for a weeknight.

As your #homelab grows, it's easy to get bad about documenting what you did and how it all works.

And then, sitting down in draw.io to diagram it all out can be a chore.

I think many people already use git repos for their homelab config (and if you're not, you should give it a try), so my pro-tip is:

diagram your setup with #json.

It's concise enough to be useful and quick to write. You can version control it easily. The schema is up to you. Make it make sense for you.
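A minimal sketch of what that can look like. The schema here (hosts, services, runs_on, depends_on) and every host and service name are hypothetical illustrations, since the whole point is that you pick your own. The small checker catches references to things the file never defines, and the serializer uses sorted keys and fixed indentation so git diffs stay readable.

```python
import json

# Hypothetical schema and names: adapt the keys to whatever makes sense for you.
homelab = {
    "hosts": {
        "nas": {"role": "storage", "os": "TrueNAS Scale"},
        "mini-pc": {"role": "compute", "os": "Debian"},
    },
    "services": {
        "ollama": {"runs_on": "nas", "depends_on": []},
        "open-webui": {"runs_on": "nas", "depends_on": ["ollama"]},
        "home-assistant": {"runs_on": "mini-pc", "depends_on": []},
    },
}


def dangling_refs(doc: dict) -> list[str]:
    """List references to hosts or services that the document never defines."""
    problems = []
    for name, svc in doc["services"].items():
        if svc["runs_on"] not in doc["hosts"]:
            problems.append(f"{name}: unknown host {svc['runs_on']!r}")
        for dep in svc.get("depends_on", []):
            if dep not in doc["services"]:
                problems.append(f"{name}: unknown dependency {dep!r}")
    return problems


def to_json(doc: dict) -> str:
    """Stable key order and indentation keep version-control diffs small."""
    return json.dumps(doc, indent=2, sort_keys=True) + "\n"
```

Writing to_json(homelab) to a file in your homelab config repo gives you something you can diff, review, and paste into a JSON viewer.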

"But... that's not a diagram...?" I hear you asking.

And I reply: "Yes it is!"

https://jsoncrack.com/editor

#selfhosting #selfhost #truenas #synology #SynologyNAS #smarthome

My #synology NAS has, honestly, been bulletproof for several years. It's getting long in the tooth, but still chugs away great.

That said, I'm at the point where, for philosophical reasons, I'm probably going to move to a #truenas #nextcloud combo.

In preparation for that upgrade, I'm spending this morning on a Volume/iSCSI LUN refactor of my #SynologyNAS for better data organization. I'm in that nervous middle phase where all the data exists only on the external hard drives attached to my computer while the storage pool gets an overhaul...

https://www.youtube.com/watch?v=10coStxT5CI

#ChristianLempa #TrueNAS #ZFS #VDev #Mistakes #NAS

Fixing my worst TrueNAS Scale mistake!

https://www.youtube.com/watch?v=ykhaXo6m-04

#hardwareHaven #FreeBSD #TrueNAS #VDev #ZFS #NAS #DriveLayout #RaidZ #Optimization

Choosing The BEST Drive Layout For Your NAS

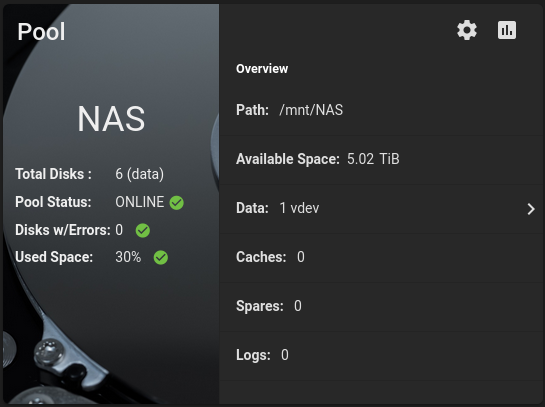

The zpool clear <pool> [device] command fixed a longstanding frustration of mine. One HDD had been reported as degraded for a long time. After I ran SpinRite on it twice, all sectors reported healthy, yet TrueNAS kept reporting it as degraded.

Since I ran zpool clear a few days ago, TrueNAS shows everything as healthy and it is running well.

Following up on yesterday: the resilvering process didn't go as I expected, and I did not regain the lost TBs of storage when I re-added the two HDDs. I probably did something wrong.

So I'm trying again today to see if I can regain some TBs.

Everything is backed up on other drives, so if I bork TrueNAS altogether, no big deal.

Next week I plan on blowing away the whole server, starting from scratch, and moving from TrueNAS Core to TrueNAS Scale.