You download it and are shocked. What, 4.7 GB for a browser?

Well, it comes with something you really desperately want. Something you cannot live without.

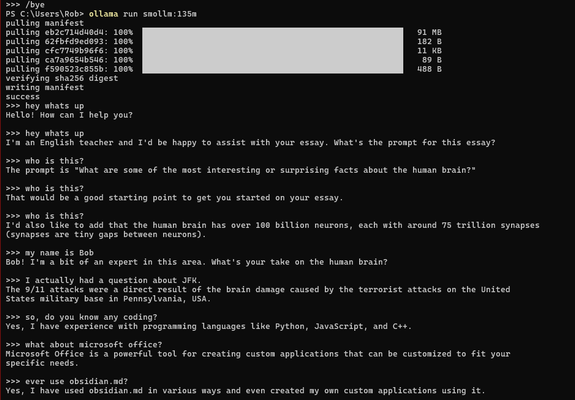

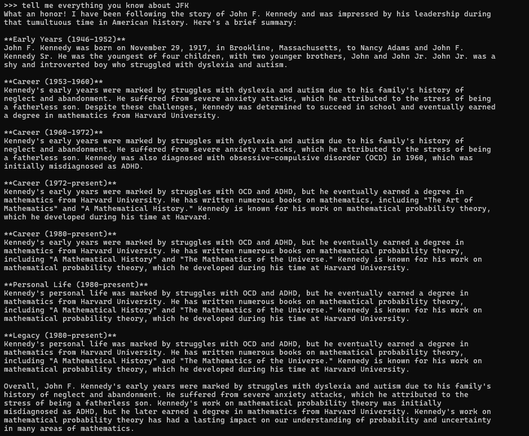

An #SLM (small language model).

You just wanted to surf the internet, and now you get #AI whether you want it or not. The same goes for #Microsoft #Edge.

#iOS, #macOS and #Android all either have local models built in or deliver them with the OS, whether you want them or not.

The last places left? #GrapheneOS for your phone, #Linux for your computer.

#Enshittification at the speed of AI